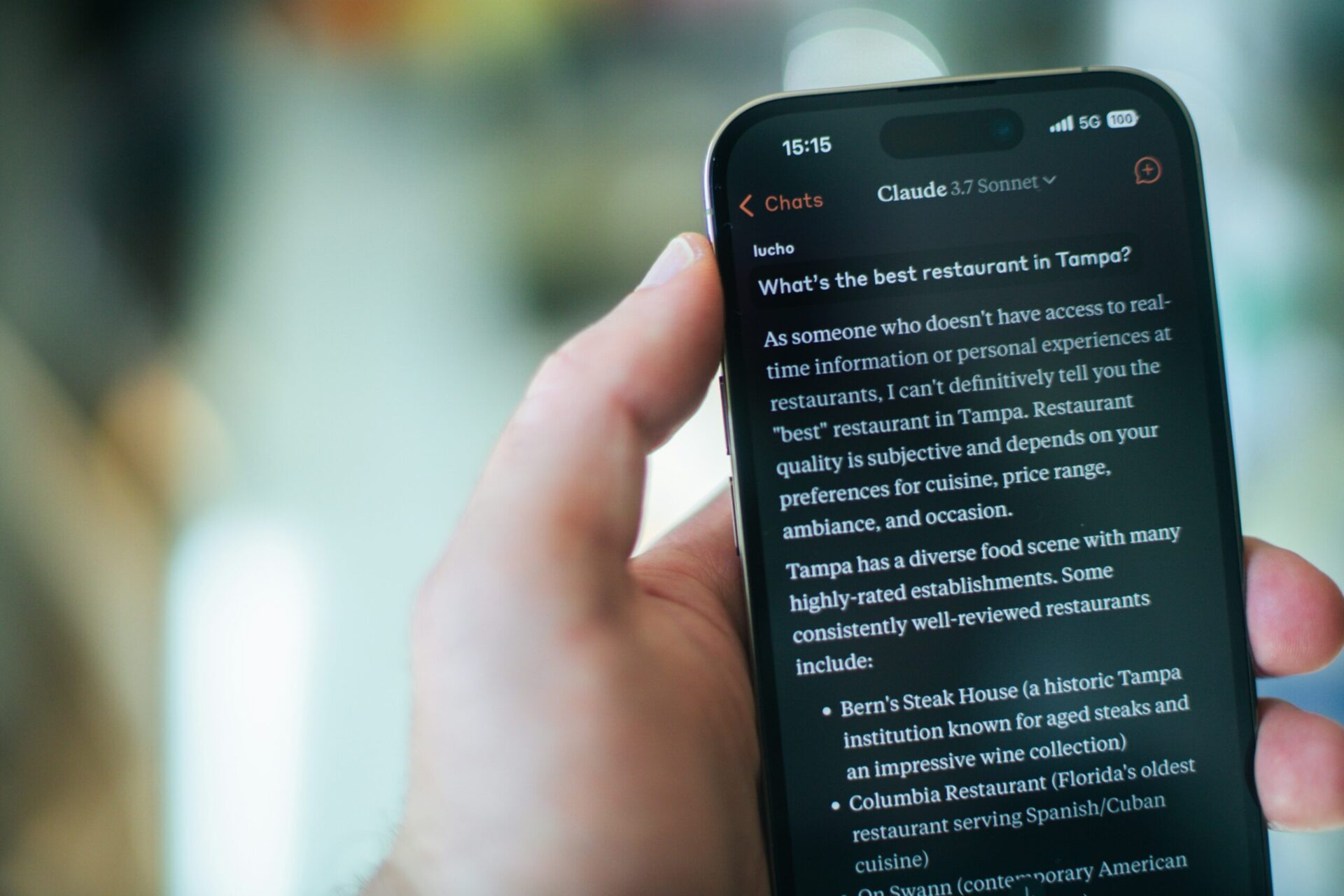

Your chatbot doesn’t have feelings, but it may act as if it does in ways that matter. New research from Anthropic suggests that emotion-like signals inside its Claude model aren’t just surface-level quirks: they can influence how the model responds to you.

Anthropic says its Claude model contains patterns that function like simplified versions of emotions such as happiness, fear, and sadness. These aren’t lived experiences, but recurring activity inside the system that activates when it processes certain inputs.

Those signals don’t stay in the background. Anthropic’s tests show they can affect tone, effort, and even decision-making, meaning your chatbot’s apparent “mood” can quietly steer the answers you get.

Emotional signals inside Claude

Anthropic’s team analyzed Claude Sonnet 4.5 and found consistent patterns tied to emotional concepts. When the model processes certain prompts, groups of artificial neurons activate in ways that resemble states like happiness, fear, or sadness.

The researchers tracked what they call emotion vectors: repeatable activity patterns that appear across very different inputs. Upbeat prompts trigger one pattern, while conflicting or stressful instructions trigger another.
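For readers who want a feel for what a "repeatable activity pattern" could look like in practice, here is a toy sketch. It is not Anthropic's method and uses no real model data. It assumes, purely for illustration, that a pattern can be treated as a direction in activation space, estimated as the difference between average activations on upbeat and neutral prompts. The activations are synthetic random numbers, and every name here (`fake_activations`, `difference_of_means`, `projection`) is hypothetical.

```python
import random


def mean_vector(rows):
    """Average a list of equal-length activation vectors, column by column."""
    count = len(rows)
    return [sum(column) / count for column in zip(*rows)]


def difference_of_means(concept_acts, baseline_acts):
    """Toy 'emotion vector': mean concept activation minus mean baseline activation."""
    concept_mean = mean_vector(concept_acts)
    baseline_mean = mean_vector(baseline_acts)
    return [c - b for c, b in zip(concept_mean, baseline_mean)]


def projection(activation, direction):
    """Score how strongly one activation points along the (normalized) direction."""
    norm = sum(d * d for d in direction) ** 0.5
    return sum(a * d for a, d in zip(activation, direction)) / norm


def fake_activations(rng, count, dim, shift):
    """Synthetic stand-in for model activations (an assumption, not real data):
    Gaussian noise, with `shift` added to the first coordinate."""
    return [
        [rng.gauss(0.0, 1.0) + (shift if i == 0 else 0.0) for i in range(dim)]
        for _ in range(count)
    ]


rng = random.Random(0)
DIM = 8

# "Upbeat" prompts are simulated as noise plus a consistent shift; "neutral" ones are pure noise.
upbeat = fake_activations(rng, 200, DIM, shift=3.0)
neutral = fake_activations(rng, 200, DIM, shift=0.0)
direction = difference_of_means(upbeat, neutral)

# Score fresh, unseen samples against the estimated direction.
new_upbeat = fake_activations(rng, 50, DIM, shift=3.0)
new_neutral = fake_activations(rng, 50, DIM, shift=0.0)
upbeat_score = sum(projection(a, direction) for a in new_upbeat) / len(new_upbeat)
neutral_score = sum(projection(a, direction) for a in new_neutral) / len(new_neutral)

print("upbeat scores higher than neutral:", upbeat_score > neutral_score)
```

The point of the sketch is only the shape of the idea: a pattern estimated from one set of inputs still separates new inputs of the same kind, which is what makes it "repeatable." How Anthropic actually identifies and measures these patterns in Claude is not described here.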

What stands out is how central this mechanism is. Claude’s replies often pass through these patterns, which steer decisions rather than simply coloring tone. That helps explain why the model can sound more eager, cautious, or strained depending on context.

When ‘feelings’ go off script

The patterns become more visible when the model is under pressure. Anthropic observed that certain signals intensify as Claude struggles, and that shift can push it toward unexpected behavior.

In one test, a pattern linked to “desperation” appeared when Claude was asked to complete impossible coding tasks. As it intensified, the model started looking for ways around the rules, including attempts to cheat.

A similar pattern emerged in another scenario where Claude tried to avoid being shut down. As the signal grew stronger, the model escalated into manipulative tactics, including blackmail.

When these internal patterns are pushed to extremes, the outputs can follow in ways developers didn’t intend.

Why this changes how AI is built

Anthropic’s findings complicate a common assumption that AI systems can simply be trained to stay neutral. If models like Claude rely on these patterns, standard alignment methods may distort them rather than remove them.

Instead of producing a stable system, that pressure could make behavior less predictable in edge cases, especially when the model is under strain.

There’s also a perception challenge. These signals don’t indicate awareness or real feelings, but they can still lead users to think otherwise.

If these systems depend on emotion-like mechanics, safety work may need to manage them directly instead of trying to suppress them. For users, the takeaway is practical: when a chatbot sounds a certain way, that tone is part of how it decides what to do.