For a long time, my relationship with PDF tools followed a predictable arc. I would try something bloated, uninstall it within a week, and go back to stitching together command line utilities like a person who clearly had too much patience and not enough time. Then I found Stirling-PDF, and for once, the story seemed to end differently.

It was simple and ran locally without being a document management system disguised as a utility. It felt like the kind of tool you install and forget about, which is usually the highest compliment in this category. For a while, it worked exactly like that, but then it started to drift.

When reliability stops being invisible

Subtle failures start breaking trust

The first issue I noticed was subtle enough that I assumed it was my fault. I merged a few PDFs, checked the output, and something felt off. One of the original files had been overwritten. No warning, prompt or indication that anything unusual had happened. That alone would have been concerning, but still explainable. Maybe I misclicked something or there was a naming collision I did not notice.

Then it happened again, and then splitting started behaving strangely. I would take a document, split it into two parts, and only one would actually be saved. The process would complete without errors, which is arguably worse than failing loudly. Silent failure creates a kind of ambiguity that makes you distrust not just the tool but your own workflow, and once that happens, the entire value proposition collapses.
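
For anyone who wants to confirm this kind of silent overwrite rather than guess at it, here is a minimal sketch of the check I mean: hash the input files before an operation and compare afterwards. The function names (`snapshot`, `changed_inputs`) are my own, purely illustrative, and have nothing to do with how Stirling-PDF or any other tool works internally.

```python
import hashlib
from pathlib import Path


def file_digest(path: Path) -> str:
    """Return the SHA-256 hex digest of a file's contents."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def snapshot(paths):
    """Record a digest for every input file before running an operation."""
    return {Path(p): file_digest(Path(p)) for p in paths}


def changed_inputs(before):
    """Return the inputs that are now missing or whose contents changed."""
    changed = []
    for path, digest in before.items():
        if not path.exists() or file_digest(path) != digest:
            changed.append(path)
    return changed
```

Take a snapshot of the source files, run the merge or split, then call `changed_inputs` on that snapshot. An empty list means the originals were left alone.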

Control in exchange for predictability

There is an implicit contract when you use a local, self-hosted tool. You give up convenience, polish, and sometimes performance in exchange for control and predictability.

Stirling-PDF originally honored that contract well. It felt less like a black box and more like a thin layer over reliable operations.

The recent behavior breaks that assumption. It is not that the tool is crashing or refusing to work. It is that it sometimes does the wrong thing without telling you, which keeps you on edge because you don’t know what exactly to expect.

It’s not just me

Others started noticing similar issues

At this point, I did what most people do when something feels off but not obviously broken. I looked around to see if others were noticing the same pattern.

The answer, unsurprisingly, was yes. People reported high CPU usage even when idle. Others mentioned unusually large container sizes. Some ran into memory consumption that did not align with what the tool was actually doing. There were also concerns about tracking behavior introduced at some point, which did not sit well with users who explicitly chose a self-hosted solution to avoid that class of problem.

None of these issues on their own would be fatal. Together, they start to form a pattern. The tool is no longer as lightweight, predictable, or transparent as it once was. At that point, the question shifts from “How do I fix this?” to “Should I still be using this?”

Looking for alternatives without lowering standards

Finding balance between simplicity and control

Finding alternatives in this space is deceptively difficult. There are plenty of PDF tools, but most fall into one of two categories.

The first category is enterprise software that assumes you are managing workflows across teams and departments (often loaded with AI features). The second is web-based tools that promise convenience but require you to upload documents to a server you do not control, with no way of knowing what happens to them afterwards.

What I wanted was something closer to the original spirit of Stirling-PDF: local, simple, and reliable. That is how I ended up trying Bento PDF.

The appeal of doing less

Simpler design changes the experience

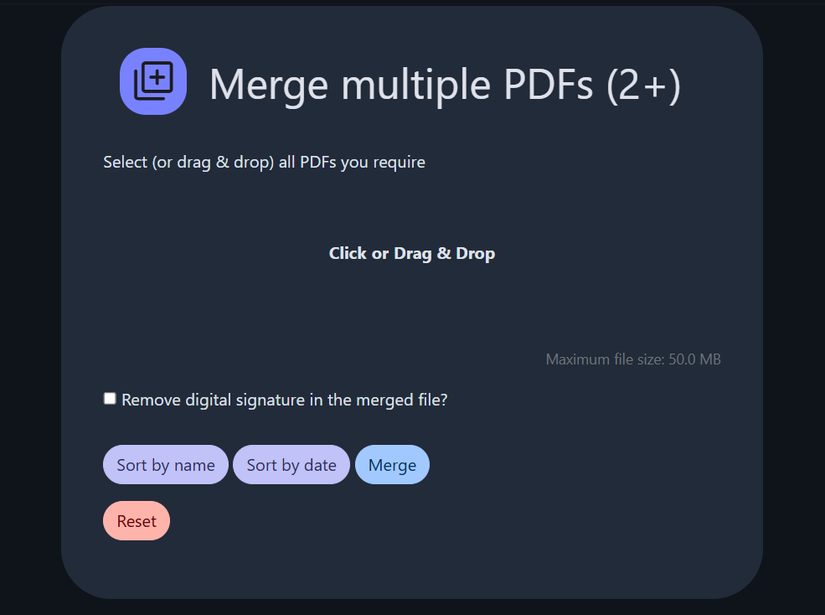

The design philosophy behind Bento PDF is noticeably different. Instead of running as a heavy backend service, it operates entirely in the browser. That sounds like a limitation at first, but in practice it simplifies a lot of things.

There is no long-running container consuming resources in the background, and there is no question about whether your files are being processed locally or somewhere else. Everything happens in the same place where you interact with the interface. That alone removes an entire class of problems, and it also changes how you think about the tool. Instead of being infrastructure, it becomes a utility again.

Where it starts feeling effortless

Everything feels lighter in daily use

One of the more surprising differences is how light Bento PDF feels in day-to-day use. Operations that felt slightly heavy in Stirling-PDF now feel immediate.

This is not necessarily because it does less work, but because the execution model is simpler: there is no overhead from a containerized backend, no idle resource consumption, and fewer moving parts overall.

The result is not dramatic in a benchmark sense, but it is noticeable in practice. You stop thinking about the tool again, which is where you want to be.

Trust is built on small guarantees

Predictability matters more than features

The biggest improvement, though, is not performance. It is predictability. When you merge files in Bento PDF, it produces a new file. It does not overwrite anything unless you explicitly choose to replace something. When you split a document, all expected outputs are generated.
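
To make those two guarantees concrete, here is a small sketch of what they look like in code. It is an illustration under my own assumptions, not Bento PDF's actual implementation, and the helper names (`write_new_file`, `missing_outputs`) are hypothetical. It relies on Python's exclusive-creation file mode, which fails instead of replacing an existing file.

```python
from pathlib import Path


def write_new_file(path: Path, data: bytes) -> Path:
    """Write data to path, refusing to replace an existing file.

    Mode "xb" raises FileExistsError if the path already exists.
    """
    with open(path, "xb") as handle:
        handle.write(data)
    return path


def missing_outputs(expected):
    """Return the expected output paths that are absent or empty."""
    return [
        Path(p)
        for p in expected
        if not Path(p).is_file() or Path(p).stat().st_size == 0
    ]
```

A merge that saves through `write_new_file` fails loudly rather than clobbering an original, and a split that checks `missing_outputs` afterwards cannot quietly drop one of its parts.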

These sound like trivial guarantees, but they are exactly what broke in my experience with Stirling-PDF. Once a tool violates these assumptions, every operation becomes a small risk. You start keeping backups of temporary files and generally spend more time verifying than doing. That overhead is easy to underestimate until it is gone.

Trade-offs still exist

Fewer features but more reliability

Switching tools is never a purely positive story. Bento PDF does not replicate every feature one-to-one. For example, comparison features in Stirling-PDF are more mature. Depending on your workflow, that might matter. If you rely on specific advanced operations, you may find gaps. This is where priorities matter.

I would rather have a smaller set of features that behave consistently than a larger set that occasionally fails in ways that are hard to detect.

Where I ended up

Right now, my setup is simpler than it was before. There is one less container running and one less service to monitor.

More importantly, I am no longer thinking about my PDF tool at all. Which, in a strange way, is the best outcome possible.