I use Gemini, ChatGPT, and Claude almost every day, both for work and for my own projects. Each service has its strengths and weaknesses, and you can access a lot of the features for free. The paid plans offer even more features, but if you only want to pay for one, there’s a clear winner.

Gemini is great for research

It’s a good choice if you’re all in on Google services

Unsurprisingly for a chatbot built by Google, Gemini is great for search. In my experience, this is something that Claude in particular struggles with; it can’t read through Reddit threads, for example, claiming that Reddit is blocked from its web fetching tool. When I need to find a particular post or piece of information, I usually use Gemini’s free tier.

Gemini also excels at deep research. All three services have deep research tools, but in my experience, Gemini goes deeper and pulls from more sources than either ChatGPT or Claude.

One of its most distinctive features is Audio Overview, which turns your research report into an AI-generated podcast that can be an easier way to digest a complex report. I use deep research and the Audio Overview feature on the free tier, as I rarely need to do more than a few deep dives a month.

Gemini also integrates seamlessly with other Google services such as Gmail, Google Docs, and Google Sheets, although you need a paid subscription to take advantage of some of the more useful features. It also currently has the best image generation of the big three.

ChatGPT is more thorough

It has some useful tools

ChatGPT was the first AI chatbot that I paid for, and I used it exclusively for a long time. There’s a lot that it’s really good at, and it’s packed with useful features.

One of the things I use it for most is proofreading. ChatGPT is great at spotting grammatical errors and typos in my work, as well as flagging anything that’s outdated or inaccurate. Using the same prompt, Claude isn’t nearly as good, missing a lot of the things that ChatGPT catches.

Creating custom GPTs and setting up projects is also really useful, but while you can create projects for free, you need to be on a paid tier to create custom GPTs. Once you’ve built them, however, you can use them even without a subscription.

The desktop app has some useful tools, too. The Work with Apps feature on macOS is particularly handy; it lets ChatGPT see the content of apps you have open, so you don’t have to keep copying and pasting the output from the Terminal, for example.

Codex, OpenAI’s coding tool, is also very good. Using models such as GPT-5.3-Codex, it can rival Claude in terms of coding capabilities. Beyond coding, you can use the Codex app for more agentic tasks, such as asking it to batch rename a folder full of image files with an alt text description of each image.

Claude is great for coding and connectivity

Computer Use is also wild

One of the big reasons that many people use Claude is for coding. Claude Opus 4.6 is one of the strongest models for coding and reasoning right now. I’m not an avid coder, but I’ve used it to vibe code a few personal projects, and it’s very impressive.

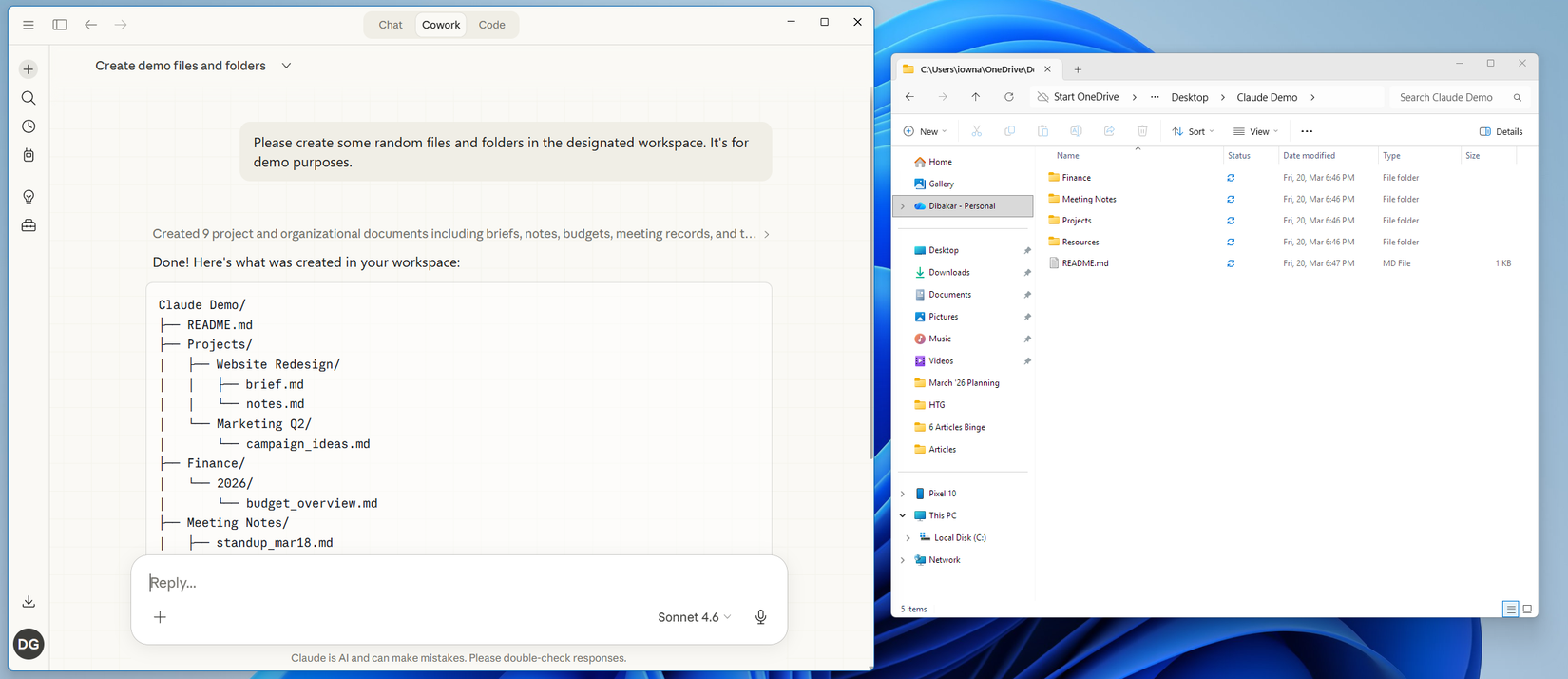

Two very useful features in Claude are Cowork and Computer Use. Cowork lets you give Claude a multi-step task, which it then gets on with, using the local files on your computer. For example, you can point it at a folder of scanned receipts and ask it to turn them into an expense report, and Cowork will use OCR to read the information and compile it all into a report for you.

Computer Use lets Claude take control of your computer, using screenshots to “see” the screen and moving the mouse to click UI elements. It works, if fairly slowly, but handing over the keys to your computer to an AI still feels a little scary.

Claude is the one that’s worth the sub

The best features for your money

To my mind, Claude is the one subscription that’s worth paying for. You can do a lot with the free versions of Gemini and ChatGPT, but there are some key Claude features that you can’t access without a subscription.

Cowork (including Computer Use) and Claude Code are all paid features, and each one alone is worth the sub. The Dispatch feature also makes the subscription worth paying for. It lets you start a task from your phone, which Claude will tackle on your desktop while you’re away, so you can get Claude working on tasks wherever you are.

One of the things that I find most useful about Claude is its connectivity. ChatGPT has several connectors that you can use to connect your chatbot to services such as Notion, Spotify, and TripAdvisor. These are hit-or-miss, however; I’ve never been able to get the Notion connector to work. Gemini’s connectors are mostly focused on Google apps.

Claude is the most versatile when it comes to connectivity. It has a large selection of connectors, and they offer powerful features. The Notion connector lets you read and write to Notion, for example. Claude also makes it simple to connect your own custom MCP servers, allowing you to use it to interact with apps and services, although you need a subscription to set up more than one custom connector.

One downside of Claude’s plans is the rate limits. I have a $20 Pro subscription, and I don’t hit the limits too often. Heavier users, however, are finding the limits increasingly frustrating.

You can do a lot with free tiers

Claude, Gemini, and ChatGPT are all very powerful tools, and each excels at different things. I continue to use Gemini and ChatGPT for free for specific tasks, but for now, Claude is the subscription that I can’t live without.