The Windows desktop is iconic. Some of its backgrounds, like the green rolling hills featured in Windows XP, have even become a bit of a meme. However, I do one thing very differently: I have removed every icon from my desktop.

Too many icons cluttering things up

A cluttered desktop is a cluttered mind

Most every application you install on Windows will ask you a few things: where do you want it installed, do you want to install it for all users, do you want to add it to the Start Menu, and do you want a desktop icon?

If you don’t uncheck that last option manually, most installers will add a new desktop icon by default. After a few years of this, you’ll find that your desktop has become a cluttered catastrophe of icons, usually not organized in any particular way.

The entire situation is a bit weird. People spend time, and sometimes money, picking out a desktop background that they like. Why cover it up?

It would be like hanging a picture in your home and then covering it in sticky notes.

Moreover, I find the visual clutter pretty distracting. In an effort to make my day-to-day more efficient, I’ve been cutting out unnecessary distractions.

That meant the zoo of icons living on my desktop had to go.

Icons aren’t necessary or efficient

There are faster ways to find apps and files

After spending more than a minute hunting for an icon on my desktop, I realized this entire setup was flawed. Even if I organized them, I already knew which app I wanted. It isn’t like I need to be inspired by the icons on my desktop to do something.

Why waste time moving my mouse around when I already know what I want and there are a half dozen ways to get it without even touching the mouse?

What to use instead of icons

There are two main ways I launch apps instead of using icons. Both come from Microsoft, so you don’t need to worry about third-party issues, and both are extremely efficient.

Use the Start Menu

The Start Menu can pin apps, but it also doubles as a search. You don’t even need to click the search bar—just open it, start typing, and you’re off to the races.

For example, if I want to launch Firefox, all I need to do is tap the Windows key, type fi, then press the Enter key. What you have to type depends on what apps you have installed, but in any case, I’ve always found it to be more efficient than hunting around my desktop.

Use Command Palette

Command Palette is one of the best additions to Windows (via PowerToys) in recent years. It is a little bit like the Start Menu in that it functions like a search, but it also lets you pre-define search parameters with a single key, perform certain operations (like doing math or changing settings), and do pretty much anything else you can imagine.

If you’ve used Spotlight Search on a Mac, you’re familiar with the idea.

Command Palette has become my primary—and almost exclusive—interface with Windows over the last several months. You can use almost any shortcut you want to open it.

Once you’re there, you don’t really need the desktop at all. You can access all the files and folders with a keystroke. I’ve always found that searching for a file by name is much quicker than scanning my desktop until I spot the right folder.

If Command Palette doesn’t have what you want natively, you can even make your own extension to build in the functionality.

For the moment, Command Palette isn’t included by default with Windows 11, but I certainly hope it becomes a permanent fixture in Windows 12—it is a major upgrade over the Start Menu.

How do you get rid of your desktop icons?

You could delete all of the icons off your desktop if you want—just press Ctrl+A and then hit the Delete key—but I wouldn’t recommend it.

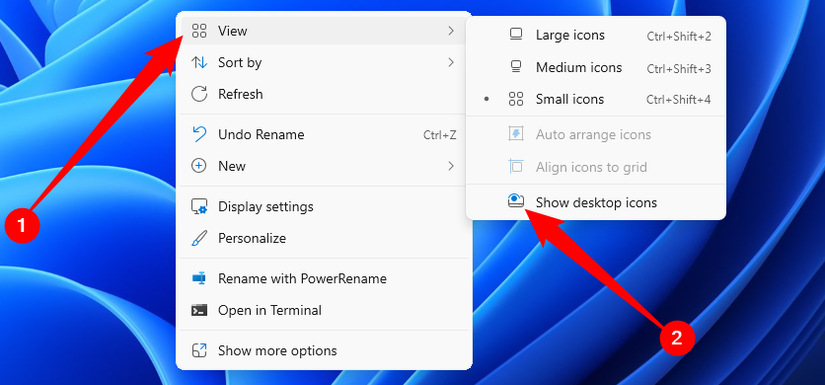

Instead, you should right-click an empty area of your desktop, then go to View and toggle off “Show desktop icons.”

That way, if you ever need them for some reason, you don’t have to go hunting around for whatever you had on your desktop.
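
If you would rather script the toggle than click through the menu, here is a minimal Python sketch. It assumes the setting is stored in the per-user `HideIcons` registry value under Explorer's `Advanced` key, which is my understanding of where the "Show desktop icons" option lives and not something the steps above confirm. The function names are my own, and Explorer typically only picks up the change after it restarts or you sign out and back in.

```python
import sys

# Assumed registry location for the "Show desktop icons" toggle (current user).
KEY_PATH = r"Software\Microsoft\Windows\CurrentVersion\Explorer\Advanced"
VALUE_NAME = "HideIcons"  # assumed meaning: 1 = icons hidden, 0 = icons shown


def hide_icons_value(hidden: bool) -> int:
    """Map the desired state to the DWORD written to the registry."""
    return 1 if hidden else 0


def set_desktop_icons_hidden(hidden: bool) -> None:
    """Write the HideIcons value for the current user (Windows only)."""
    if sys.platform != "win32":
        raise OSError("This sketch only works on Windows.")
    import winreg  # standard library module, available only on Windows

    with winreg.OpenKey(
        winreg.HKEY_CURRENT_USER, KEY_PATH, 0, winreg.KEY_SET_VALUE
    ) as key:
        winreg.SetValueEx(
            key, VALUE_NAME, 0, winreg.REG_DWORD, hide_icons_value(hidden)
        )
```

Calling `set_desktop_icons_hidden(True)` hides the icons and `set_desktop_icons_hidden(False)` brings them back. Like the menu toggle, this leaves the files on your desktop untouched.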

That feels better, doesn’t it?

With all of the extra icons out of the way, my desktop feels more like the top of a clutter-free desk. I don’t have a random smattering of icons floating everywhere. All I have is one neat little window that disappears when I’m done, and my brain is happier for it.