Europe has spent a decade watching American and Chinese companies capture every major technology wave: cloud, mobile, social, AI. Quantum computing may be the exception. A cluster of French startups, backed by €500 million in government funding and underpinned by some of the world’s strongest physics research, is positioning France as a serious contender in a race where legacy advantages count for remarkably little.

At the centre of the French effort is Alice & Bob, a Paris-based startup whose “cat qubit” technology, named after Schrödinger’s thought experiment, takes a fundamentally different approach to the field’s central problem: errors. Quantum computers manipulate individual particles whose states are so fragile that any interaction with the outside world destroys the computation. Most approaches compensate with massive redundancy, using thousands of physical qubits to produce a single reliable “logical” qubit. Alice & Bob’s cat qubits are designed to autonomously correct certain errors at the hardware level, potentially reducing the number of physical qubits needed by orders of magnitude.

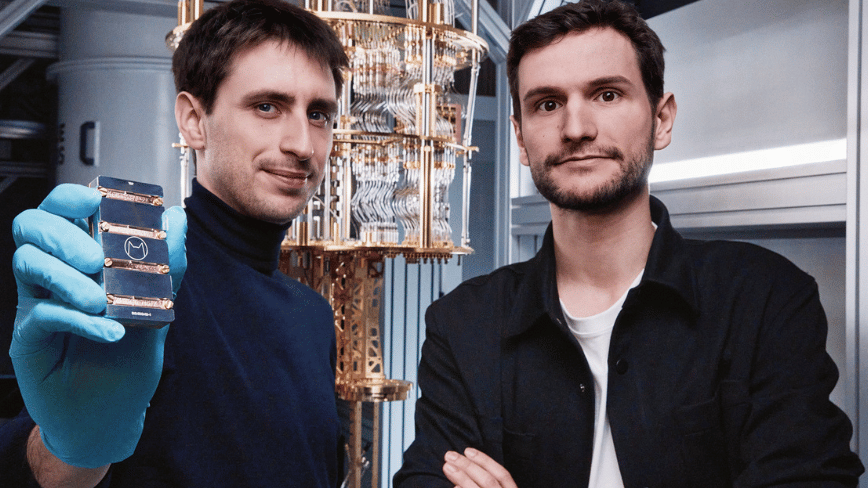

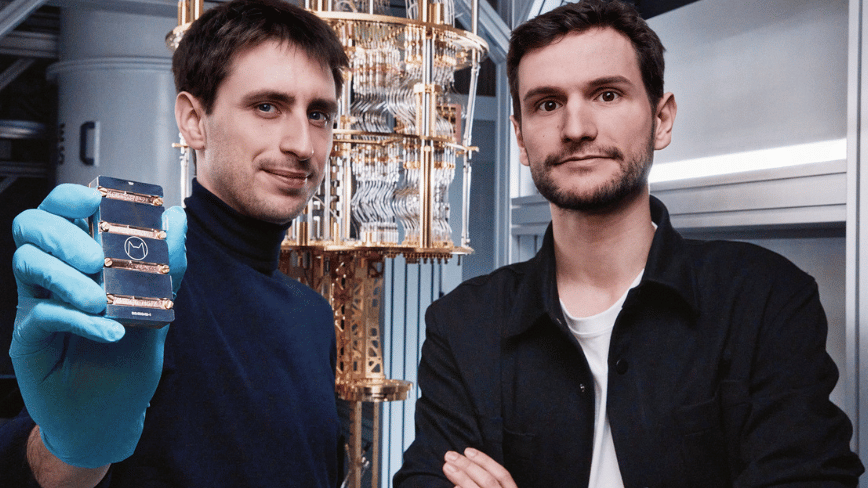

“It’s not about being faster,” says co-founder and CEO Théau Peronnin, who founded the company in 2020 with Raphaël Lescanne. “It’s about being so dramatically faster that you change what is feasible.” The company raised €100 million in a Series B round in January 2025, led by Future French Champions, AXA Venture Partners, and Bpifrance, bringing total funding to €130 million. It is now investing $50 million in a new laboratory north of Paris, with a clean room for in-house chip fabrication and a test facility for progressively larger machines.

Five companies, five qubit architectures

Alice & Bob is not operating in isolation. France’s PROQCIMA programme, a government initiative aiming to deliver a fault-tolerant quantum computer demonstrator with 128 logical qubits by 2030 and a 2,048-logical-qubit commercial system by 2035, has selected five companies for its €500 million first phase: Alice & Bob (cat qubits), Pasqal (neutral atoms), Quandela (photonics), Quobly (silicon spin), and C12 Quantum Electronics (carbon nanotubes). The programme is structured as a competition: after four years, the three most promising approaches advance; after eight, only two remain.

The diversity is the strategy. Rather than betting on a single qubit architecture, as the US has effectively done with superconducting circuits, France is funding parallel approaches, each with distinct advantages. Pasqal, which is planning a public listing at a reported $2 billion valuation, already has neutral-atom quantum computers deployed in high-performance computing installations across Europe. Quobly reached a milestone in December 2025 when its isotopically enriched silicon wafers entered STMicroelectronics’ 300mm production line in Crolles, the first integration into a high-volume commercial semiconductor fab. Quandela has partnered with OVHcloud to make its processors available via sovereign cloud infrastructure by mid-2026.

Olivier Ezratty, an academic whose 1,500-page compendium “Understanding Quantum Technologies” has become a standard reference, notes that the French companies share a common advantage: lower machine and energy costs compared to their American competitors. In a field where cryogenic cooling and error correction drive enormous power consumption, that advantage may prove more consequential than raw qubit counts.

The competitive landscape

France is not the only European country with quantum ambitions. Finland’s IQM announced in February that it would become the first publicly listed European quantum company through a $1.8 billion SPAC merger, with a primary listing on the NYSE and a possible dual listing in Helsinki. IQM has raised over $600 million in total and already deploys superconducting quantum computers. The UK has Oxford Quantum Circuits and Riverlane, the latter focused on quantum operating systems.

The American incumbents remain formidable. Google, which acquired cat-qubit-adjacent startup Atlantic Quantum in October 2025, IBM, and a constellation of well-funded competitors have deeper pockets and larger engineering teams. But Peronnin argues the playing field is more level than it appears. “At the end of the day, it’s a maths challenge,” he says. “There is no unfair advantage from legacy technology like classical computing, so there is no reason to be shy.”

The physics talent pipeline supports his confidence. France has produced three Nobel Prize-winning physicists in recent years: Albert Fert (2007) for spintronics, Serge Haroche (2012) for quantum optics, and Alain Aspect (2022) for quantum entanglement experiments. All are from institutions like École Polytechnique and École Normale Supérieure that feed directly into the country’s quantum ecosystem. Both Alice & Bob’s founders are products of that pipeline.

The gap between promise and product

Peronnin is candid about where the technology stands. “At the moment, the machine we have is no more powerful than your telephone,” he says. “We’re on the flat part of the exponential curve.” The quantum computers that Alice & Bob and its French peers have placed in companies like Air Liquide are not yet delivering on the technology’s transformative promise. Their purpose is to train a community of specialists who will be ready when the hardware catches up.

The applications, when they arrive, are potentially enormous. Peronnin describes drug development, currently dominated by trial and error, as a field where quantum simulation of molecular interactions could transform what is feasible. Materials science, cryptography, financial modelling, and logistics optimisation are all candidates for quantum disruption.

The race will be “winner-takes-all,” Peronnin predicts, comparing it to IBM’s dominance in classical computing. That framing may be optimistic: quantum computing could fragment along application-specific lines rather than consolidating around a single platform. But it captures the strategic stakes for Europe: after decades of building world-class research and watching the commercial value migrate to Silicon Valley, quantum represents a technology where the science and the business might, for once, stay in the same place.

“We have what it takes to win it,” Peronnin says. “It’s about believing in ourselves.” Coming from the CEO of a company whose quantum chip is currently less powerful than a smartphone, that is either delusion or the kind of conviction that turns exponential curves into market share. The €500 million in government funding suggests France, at least, is betting on the latter.