Google has quietly altered one of the most reliable promises in consumer tech: 15GB of free cloud storage. For years, every new Google account came with 15GB of storage at no charge, shared across Gmail, Drive, and Photos. That is no longer guaranteed.

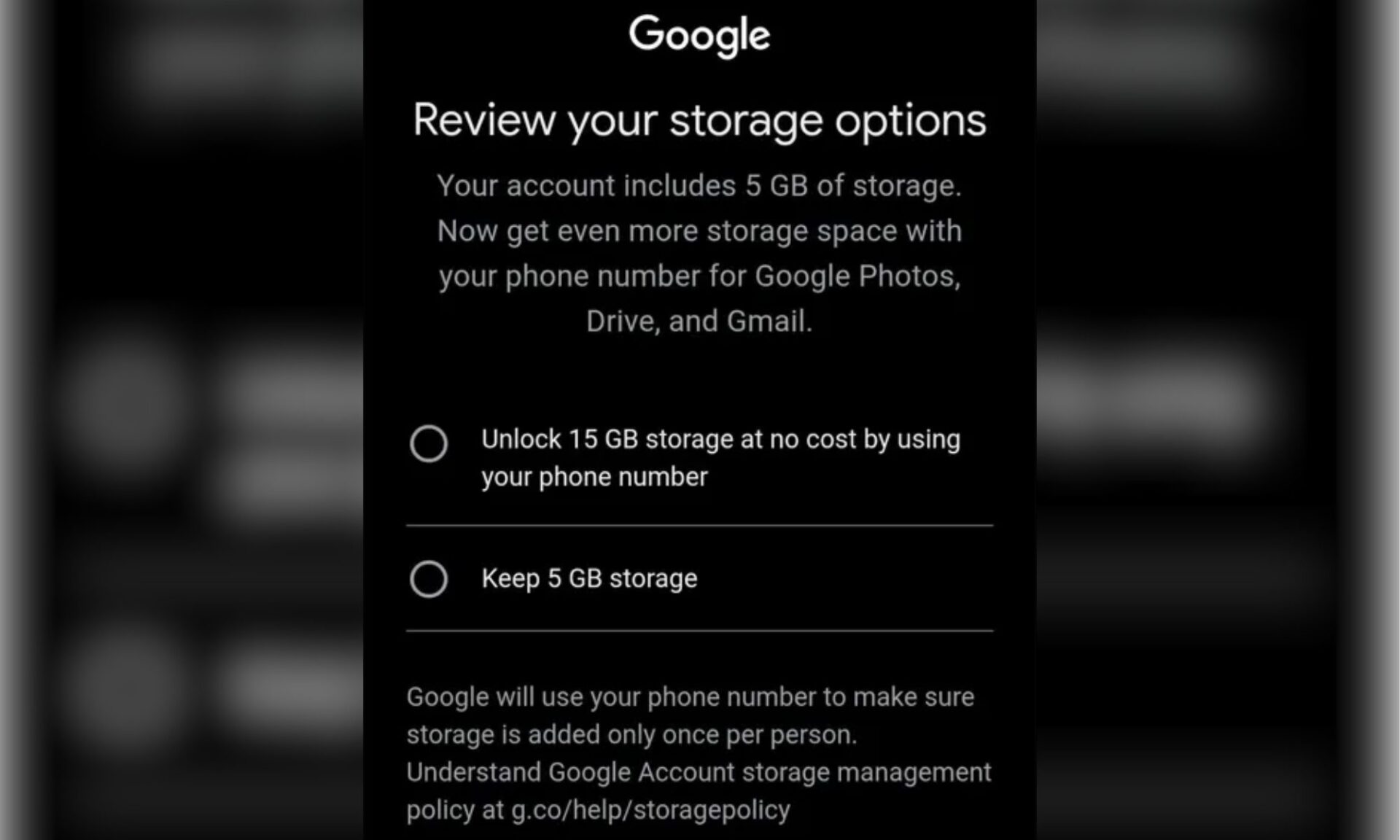

New accounts now default to 5GB (the same as iCloud’s free tier), and the full 15GB is available only if you add a phone number during setup. The prompt users are seeing reads: “Your account includes 5GB of storage. Now get even more storage space with your phone number.”

What exactly changed?

The policy change took effect around March 18, 2026 (via 9To5Google). That’s when the company changed the wording on its support page from definitive to conditional. Previously, the support page read “Your Google account comes with 15GB of cloud storage at no charge.”

Now, it says “up to 15GB of cloud storage at no charge.” And Google didn’t announce the change in a tweet or a blog post, as it typically does for updates to its consumer products.

During account setup, users now see two explicit choices: link a phone number to get 15GB of storage, or keep 5GB.

Why is Google doing this?

Google wants to ensure that each user receives the free 15GB only once, rather than once for every account they create. Tying the free storage to a phone number is, I’d say, a smart move, since getting a new number is much harder than creating a new Google account.

So, the company is positioning the change primarily as an anti-duplication measure. A Google spokesperson also confirmed to Engadget that this is a regional test, which is why some users can still get the full 15GB without verifying a phone number.

I’d also like to draw your attention to the timing of this change. Google only recently expanded storage for AI Pro subscribers from 1TB to 5TB, and now it’s tightening the allowance for free users. Ultimately, we should all prepare for slimmer free storage margins.