To earn the green checkmark, which is rolling out over the coming weeks, artists need consistent listener engagement, platform policy compliance, and a real-world, identifiable presence. Content farms and AI-generated artist profiles are explicitly excluded at launch.

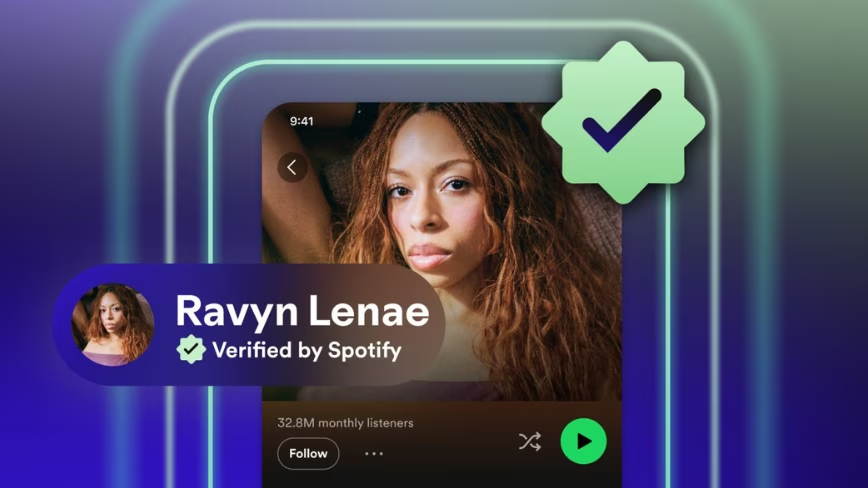

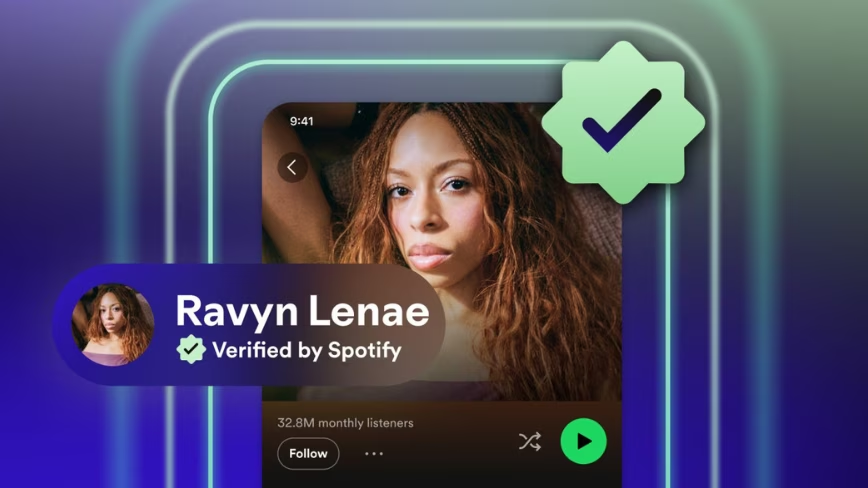

Spotify has introduced a Verified by Spotify badge, a green checkmark that will appear on artist profiles and next to artist names in search, signalling that the account has passed the company’s review for authenticity and trust. The feature was announced on the Spotify Newsroom and will roll out progressively over the coming weeks.

The timing is not accidental. Spotify has faced sustained criticism over the past year for allowing AI-generated music to accumulate on the platform under fake or misleading artist profiles.

As we wrote in June 2025, The Velvet Sundown, an AI-generated band whose tracks appeared on users’ Discover Weekly playlists, had no label distinguishing it from a human act. The following month, we covered further outrage after AI-generated songs appeared on the official Spotify pages of deceased artists, including artists murdered decades ago, uploaded without consent from their estates.

While rivals like Deezer introduced AI-generated content tagging, Spotify stayed quiet. The Verified badge is its most substantive response to date.

To receive the badge, artist profiles must meet three criteria: consistent listener activity and intentional engagement over a sustained period (not one-time spikes); compliance with Spotify’s platform policies; and signals of a real-world artist presence, including concert dates, merchandise, and linked social accounts.

Critically, profiles that “appear to primarily represent AI-generated or AI-persona artists are not eligible for verification” at launch. Spotify says it will pair algorithmic standards with human review to identify “real artists behaving in good faith, not just filtering out bad actors.”

At launch, Spotify says more than 99% of artists that listeners actively search for will be verified, representing hundreds of thousands of artists, the majority independent, spanning genres, career stages, and geographies. The company is explicitly deprioritising “functional music creators and content farms whose content is primarily designed for passive or background listening.”

Alongside the badge, Spotify is introducing artist detail sections (in beta) across all profiles, regardless of verification status. These sections surface career milestones, release activity, and touring history. Spotify describes them as “nutrition facts” for music, meant to give listeners context about an artist’s authentic activity on the platform.

Artist Profile Protection, also in beta, gives artists greater control over what appears on their own profiles.

An artist profile that lacks the badge at launch is not permanently excluded; Spotify says verification will happen on an ongoing basis across its catalogue of millions of profiles.