
ZDNET’s key takeaways

- Codex plugins aim to standardize repeatable AI workflows.

- Marketplace plans signal a broader ecosystem and sharing strategy.

- OpenAI moves to compete more directly with Claude Code.

OpenAI is introducing plugins for its Codex development tool. This move is important because it gives Codex some features that have already been available to Claude Code users for a while.


I have used both Claude Code and OpenAI’s Codex to augment my software development efforts. These tools have been astonishing productivity boosters.

While both have subtly different personalities and capabilities, I can’t generally say I prefer one over the other. Both are solid members of my development team. It’s not like I’d prefer hanging out after work with one and not the other.


I suppose I do slightly prefer Claude Code, but that’s because I can get my work done on the $100/month Max plan, whereas Codex begins at $200/month.

There is one other factor, though, and I’m sure OpenAI feels it. Every programmer I talk to uses Claude Code. So far, of all the programmers I’ve talked to in the general programming populace, not one has said they’re a Codex user.

In fact, a few times when I’ve mentioned I use Codex, I’ve gotten pushback that I should use Claude. The reality is that I use Claude for Apple development and Codex for WordPress development. I need to write about both. Dividing them by project type makes it easier to manage.

What’s been announced

Skills, in both Claude Code and Codex, are essentially prompts with names. Think of them almost as batch commands. You pre-write a series of instructions, and you call those instructions by a given name, the name of the skill. Codex introduced skills as a feature back in December.
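To make the "prompts with names" idea concrete, here is a minimal conceptual sketch in Python. It is not the Codex or Claude Code skill format; the `SKILLS` table, the `run_skill` helper, and the sample skill are all hypothetical and exist only to illustrate the batch-command analogy.

```python
# Conceptual sketch only: this is NOT the real Codex or Claude Code skill format.
# SKILLS, run_skill, and the sample skill are hypothetical illustrations.

# A skill is a block of pre-written instructions stored under a name.
SKILLS = {
    "release-notes": (
        "Read the commits since the last tag.\n"
        "Group the changes by feature, fix, and chore.\n"
        "Draft release notes in Markdown."
    ),
}


def run_skill(name: str, context: str = "") -> str:
    """Expand a skill name into the full prompt an agent would receive."""
    if name not in SKILLS:
        raise KeyError(f"unknown skill: {name}")
    instructions = SKILLS[name]
    if context:
        return f"{instructions}\n\nContext: {context}"
    return instructions


# Calling the skill by name replaces retyping the instructions each time.
prompt = run_skill("release-notes", context="repository: my-wordpress-plugin")
print(prompt)
```

The point of the sketch is only that the name stands in for the instructions, much as a batch file name stands in for the commands inside it.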


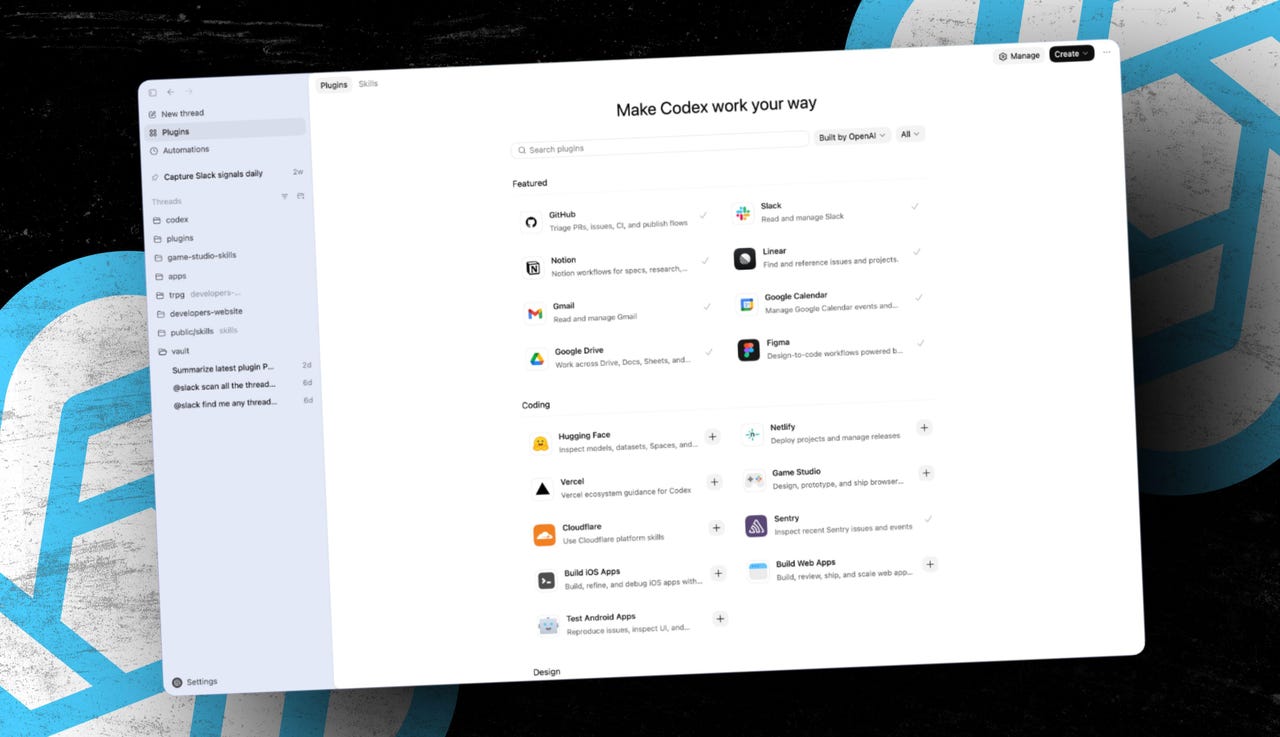

Plugins are more in-depth solutions, available to a wider audience. While you can share skills, many developers use them locally and design them just for their own use. Plugins are full solutions with skills, resources, and connectors in a shareable package.
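One way to picture the difference is as a data structure. The Python sketch below is an assumption-laden illustration, not OpenAI's or Anthropic's actual plugin schema: the `Plugin` class, its field names, and the sample file name are hypothetical. It simply shows a locally written skill being wrapped, along with resources and connectors, into one shareable unit.

```python
# Conceptual sketch only: NOT the actual Codex plugin schema.
# The Plugin class, its fields, and the sample values are hypothetical.
from dataclasses import dataclass, field


@dataclass
class Plugin:
    """A shareable package bundling skills, resources, and connectors."""
    name: str
    skills: dict[str, str] = field(default_factory=dict)   # skill name -> instructions
    resources: list[str] = field(default_factory=list)     # bundled reference files
    connectors: list[str] = field(default_factory=list)    # app integrations

    def summary(self) -> str:
        return (
            f"{self.name}: {len(self.skills)} skill(s), "
            f"{len(self.resources)} resource(s), "
            f"{len(self.connectors)} connector(s)"
        )


# A skill that started life as a local, personal-use prompt.
local_skill = {"triage-bugs": "Label new issues by severity and suggest an owner."}

# Packaging it with a resource and a connector yields something a team can install.
plugin = Plugin(
    name="bug-triage",
    skills=local_skill,
    resources=["severity-guide.md"],
    connectors=["Slack"],
)
print(plugin.summary())
```

In this framing, a skill on its own is a single entry in the `skills` dictionary, while a plugin is the whole package that travels together.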

I think the name “plugin” is confusing. Historically, plugins have been add-on pieces of code that extend the capabilities of the base product. Anthropic started packaging solution kits (like AI for HR folks or AI for wealth management) using the name “plugins.” OpenAI, clearly in a keeping-up-with-the-Joneses push, decided to call its packaged solutions “plugins” as well.

Expanding Codex

In any case, OpenAI’s plugins package skills and integrations together so that various solutions work as turnkey capabilities. This approach helps OpenAI position Codex as something that goes beyond mere coding. It expands Codex as the agentic force behind planning, research, coordination, and post-development workflows.


The key idea is that plugins help make Codex more useful across a broader range of real-world tasks. The plugin architecture used by Codex bundles repeatable workflows with app integrations, providing a more complete workflow solution inside the Codex environment.

OpenAI distinguishes between when to use skills and when to use plugins:

| Use skills when | Use plugins when |
| --- | --- |

OpenAI recommends, “Start local, then package the workflow as a plugin when you are ready to share it.”

Standardizing how work gets done

According to OpenAI, “Users can install the workflow they actually want, instead of stitching together separate integrations and capabilities themselves.” Developers can extend Codex for personal use, for their teams, or for public sharing. Those extended offerings can help provide a more unified experience, especially among development teams.

I can see the value in this approach for larger teams, and even for solo developers like myself. One of the gotchas with AI is the potential for ad hoc outputs, because AIs produce results based on inference rather than an algorithm. That process means that AI-powered solutions aren’t as repeatable or predictable as many of us need.


By packaging solutions with a combination of skills and integrations, OpenAI and Anthropic are now offering a better way to standardize high-value processes without having to rebuild individual setups one at a time — and without guessing whether what worked once will ever work again.

The marketplace paradigm

In OpenAI’s announcement blog post, the company mentions the word “marketplace” 41 times. That’s because OpenAI considers a marketplace to be any cataloged collection of plugins, whether they’re installed locally on your computer, on a server used by your team, or in a more official app store-style marketplace run by OpenAI.

OpenAI said, “Adding plugins to the official Plugin Directory is coming soon.” It’s not clear whether the official Plugin Directory will be an extension of the ChatGPT apps directory or something altogether different.

According to OpenAI, “Plugins are discoverable in the directory in the Codex app, where builders can browse and install a curated set of plugins. There are currently more than 20 plugins available in the Codex app, CLI, and VS Code extension, including Figma, Notion, Gmail, Google Drive, Slack, and more.”


When I opened my Codex app while writing this article, I did not find a separate plugins directory. There is a Skill section, but when I searched it for Slack, I didn’t find an entry. I’m guessing more integrated plugin discovery will be added to the Codex app in the coming days. I’ve reached out to the company for more details. I’ll update this article when they get back to me.

Platform ambitions take shape

OpenAI has clearly taken notice of how Claude Code has become more than just a coding tool — it’s becoming an overall agentic workhorse across multiple disciplines. With plugins, OpenAI is moving Codex away from being solely a developer tool to becoming a broader work platform that integrates tools and workflows.

Additionally, OpenAI is strengthening its team and enterprise offerings by incorporating Codex into a long-term ecosystem strategy built around agents, discovery, and reuse.

What about you? Have you tried using AI coding tools like Codex or Claude Code? If so, how do they fit into your workflow? Do you see value in packaging repeatable workflows as plugins, or do you prefer keeping things more flexible and ad hoc? How important is a marketplace of shared tools and integrations to you? Would you trust workflows created by others? Finally, do you think tools like Codex are evolving beyond coding into full work platforms, or is that a step too far? Let us know in the comments below.

You can follow my day-to-day project updates on social media. Be sure to subscribe to my weekly update newsletter, and follow me on Twitter/X at @DavidGewirtz, on Facebook at Facebook.com/DavidGewirtz, on Instagram at Instagram.com/DavidGewirtz, on Bluesky at @DavidGewirtz.com, and on YouTube at YouTube.com/DavidGewirtzTV.