OpenAI is exploring possible legal action against Apple after users of Apple’s ChatGPT integrations did what they wanted and signed up for fewer paid accounts than CEO Sam Altman expected.

A May 14 Bloomberg report says OpenAI has enlisted outside legal counsel and discussed options that could include sending Apple a breach-of-contract notice. OpenAI reportedly expected deeper ChatGPT integration across iOS, iPadOS, and macOS to drive large subscription growth through Apple’s ecosystem.

Executives at OpenAI now believe the partnership has been financially disappointing and far more limited than anticipated. Apple and OpenAI entered the agreement with distinct priorities.

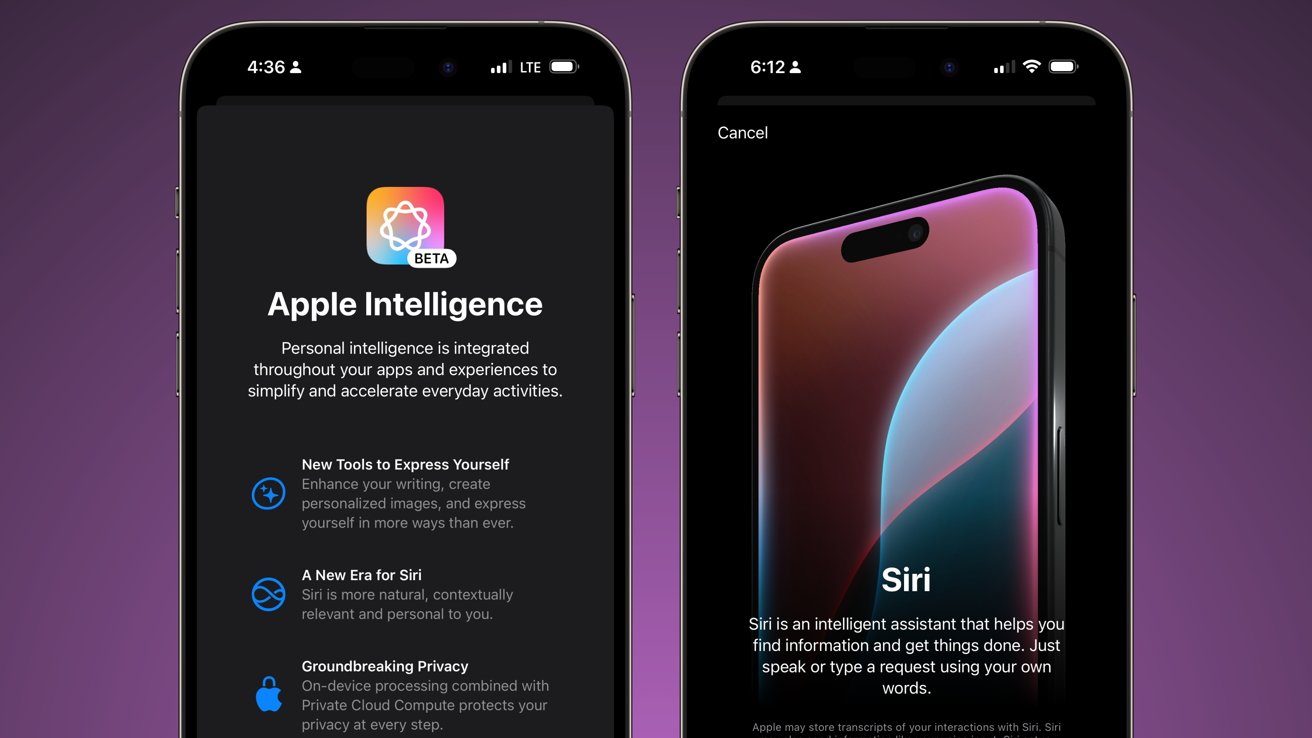

Apple needed a recognizable AI partner while major Siri upgrades and Apple Intelligence features were still under development. OpenAI sought access to hundreds of millions of Apple users, believing the iPhone could become a significant source of recurring ChatGPT subscriptions worth billions annually.

Internally, the companies reportedly viewed the OpenAI agreement as a potential counterpart to Apple’s enormously profitable Google Search deal in Safari. The ChatGPT partnership never came close to generating that level of revenue or strategic value.

Neither company structured the partnership around large direct payments. Instead, both sides expected strategic benefits. OpenAI expected customer growth and subscriptions, while Apple gained an interim AI solution as it continued building its own generative AI systems.

Apple kept ChatGPT available, but tightly controlled

Despite Apple’s high-profile WWDC 2024 announcement, ChatGPT inside Apple’s software exposes fewer features than OpenAI’s standalone app and operates within much tighter limits.

Users often need to explicitly invoke “ChatGPT” inside Siri prompts before requests route to OpenAI’s systems. Responses also appear inside smaller interface windows with less information than the standalone app typically provides.

OpenAI’s internal studies found users overwhelmingly preferred the standalone ChatGPT app over Apple’s built-in integrations.

Apple’s version resembles a tightly managed Siri extension rather than a deeply integrated AI layer across the operating system. The standalone app offers features unavailable in Apple’s implementation, such as persistent memory, wider model access, advanced voice tools, custom GPTs, and direct subscription management.

OpenAI executives reportedly expected broader integration across Apple apps and more prominent placement inside Siri. Apple instead kept the implementation relatively narrow while continuing development of its own Apple Intelligence systems.

Apple reportedly worried internally about OpenAI’s privacy standards while negotiating the partnership. The company built Apple Intelligence around on-device processing and Private Cloud Compute to keep more user data inside Apple-controlled infrastructure.

That tension over data control goes to the heart of why the two companies were never fully aligned. OpenAI’s cloud-focused systems operate very differently, leaving Apple with less direct control over how outside AI models process user information.

OpenAI increasingly looks like a future Apple competitor

The relationship between the companies has also become more complicated outside software. OpenAI acquired Jony Ive’s AI hardware startup and has aggressively recruited Apple engineers for its growing device ambitions.

OpenAI offered some Apple recruits compensation packages worth millions more than Apple provided. Those moves increasingly position OpenAI as a competitor rather than a partner.

Apple is also building new hardware efforts tied to Jony Ive’s AI startup while preparing for a future where outside AI providers become interchangeable services inside iOS.

Apple plans to introduce an “Extensions” system in iOS 27 that will let users choose among multiple outside AI models. ChatGPT, Anthropic’s Claude, and Google Gemini are all expected to be part of the system.

Outside AI models are becoming interchangeable services inside iOS rather than central platform features. ChatGPT appears set to operate alongside Siri and Apple Intelligence instead of becoming a dominant layer across Apple’s ecosystem.

Any legal case could still prove difficult. Large platform agreements often give companies like Apple broad control over implementation details, placement, and product design decisions. And users will do what they want, regardless of Silicon Valley expectations.

OpenAI still hopes to resolve the disagreement privately and may wait until after its legal fight with Elon Musk concludes before taking formal action against Apple. A lawsuit isn’t guaranteed at this stage.