Smart thermostats are a great way to save money. They give you control over your heating, allowing you to change the target temperature based on the time of day or turn off the heating when you’re away from home. While my smart thermostat was saving me money, I was still using more energy than I needed to.


The placement problem that’s costing you money

Your thermostat may be in the wrong place

Many of the most popular smart thermostats on the market are a single device with a temperature sensor built in. You stick the device on a wall somewhere, and the temperature is measured at this location.

The problem is that we tend to place the smart thermostat where it’s most convenient to use. For example, you might place it on the wall in a hallway so it’s easily accessible and doesn’t ruin the look of your living room.

The trouble with this placement is that your smart thermostat will heat your hallway to the perfect temperature, since that’s where the temperature sensor is located. A hallway that’s smaller than your other rooms may heat up more quickly, while one that’s open-plan or opens onto a stairway may heat up more slowly.

This means that the rooms that you spend the most time in, such as your living room, aren’t being heated optimally, and this can cause you to turn the heating up or run it for longer, wasting energy.

- Rating: 7/10
- Integrations: Google Assistant, Apple HomeKit, Amazon Alexa
- Connectivity: C, R, G/PEK, Y1, OB*, W1 (* accepts heat pump OB wire, W2, or Y2)

The Ecobee Smart Thermostat Essential provides built-in energy savings and easy temperature control on its sleek touchscreen and convenient app.

A temperature node is a cheap fix

Small enough to place wherever you want

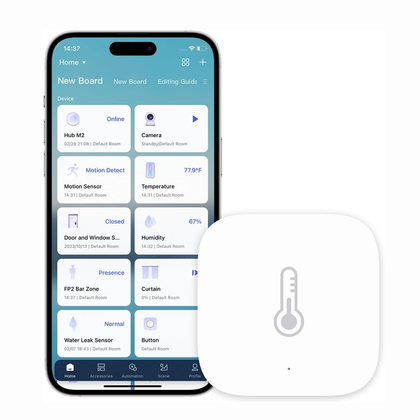

There’s a very simple and inexpensive solution to this problem. You can buy a cheap temperature sensor for as little as $10 that measures the ambient temperature. These devices are usually relatively small and don’t need to be fixed to the wall, so you can place them almost anywhere you want.

Instead of relying on a temperature reading from the sensor in your smart thermostat, you can measure the temperature in a much more useful location, such as your living room. The portable nature of a temperature sensor means that you can avoid some of the problems that can plague smart thermostats, such as being in direct sunlight, close to radiators, or on a cold external wall.

- Dimensions (exterior): 36x36x9 mm
- Compatibility: Apple Home, Aqara Home, Home Assistant (via ZHA or Zigbee2MQTT)

This small but mighty Zigbee sensor can measure both temperature and humidity. It’s battery-powered, so you can place it almost anywhere. You’ll need an Aqara hub to use it with the native app, or you can connect it directly to Home Assistant using integrations such as Zigbee2MQTT.

Offset your temperature in standard smart home systems

Make your thermostat match your temperature node

Your temperature node will tell you the current temperature at its specific location. How you use that information will depend on the smart home system that you’re using.

If you’re using a popular closed-source smart home system such as Alexa, Google Home, or Apple Home, your options are fairly limited. With the basic automation options available in some of these systems, you may struggle to build an automation that can control your heating based on the reading from the temperature node instead of the smart thermostat itself.

However, you can still use this information to your benefit. For example, if you want to heat your home to 68°F, your smart thermostat will turn the heating off when the temperature in your hallway reaches 68°F. Using your temperature node, you might see that when your hallway reaches 68°F, your living room is only reaching 64°F.

You can then set a temperature offset of 4°F in your smart thermostat app, so that the thermostat treats its own reading as 4°F lower than it really is. When the adjusted reading shows 68°F, it’s the living room that will be close to this value, not the hallway. It may not match exactly, since the gap between rooms can vary, but it should allow you to get your living room much closer to your target temperature.
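The arithmetic behind the offset can be sketched in a few lines of Python. This is only an illustration: the function names are made up for this example, it assumes the gap between the two rooms stays roughly constant, and the sign convention for offsets differs between thermostat apps, so check how yours applies it.

```python
def thermostat_offset(thermostat_reading_f: float, room_reading_f: float) -> float:
    """Return the correction to add to the thermostat reading so it matches the room."""
    return room_reading_f - thermostat_reading_f


def corrected_reading(thermostat_reading_f: float, offset_f: float) -> float:
    """Apply the offset to a raw thermostat reading."""
    return thermostat_reading_f + offset_f


# Hallway thermostat reads 68°F while the living room sensor reads 64°F.
offset = thermostat_offset(68.0, 64.0)
print(offset)  # -4.0

# Assumption: the 4°F gap holds, so a raw hallway reading of 72°F
# corresponds to roughly 68°F in the living room.
print(corrected_reading(72.0, offset))  # 68.0
```

In other words, with the offset applied, the heating keeps running until the hallway is warmer than the target, which is what brings the living room up to the temperature you actually asked for.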

Use a temperature node as the source of truth

Home Assistant makes things more accurate

Things are a lot better if you use a more capable smart home system, such as Home Assistant. Home Assistant lets you create much more complex automations, so that you can take control of your heating rather than relying on the smart thermostat to do everything.

This is exactly what I did. Instead of relying on the temperature measured at my smart thermostat, I added a cheap temperature node to Home Assistant and created my own automation to control my heating.

My smart thermostat still does the work of turning the heating on and off, but when this happens is now determined by the temperature from my Zigbee sensor, which is in a much better location. My living room now heats to the temperature that I want, regardless of what the smart thermostat is measuring in the hallway, and the heating runs far more efficiently.
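The decision such an automation makes can be sketched in Python. This is not the actual Home Assistant automation described above, just a minimal illustration of the logic: the function name is hypothetical, and the 1°F deadband (a small gap that stops the heating from switching on and off rapidly) is an assumption added for the example.

```python
def heating_should_run(
    sensor_temp_f: float,
    target_f: float,
    currently_on: bool,
    deadband_f: float = 1.0,  # assumed value, not from the article
) -> bool:
    """Decide whether the heating should run, based on the remote sensor."""
    if sensor_temp_f <= target_f - deadband_f:
        return True   # room is clearly too cold: heat
    if sensor_temp_f >= target_f:
        return False  # room has reached the target: stop
    return currently_on  # inside the deadband: keep the current state


# Living room sensor at 66°F with a 68°F target: heating turns on.
print(heating_should_run(66.0, 68.0, currently_on=False))  # True

# Sensor reaches the target: heating turns off, whatever the hallway reads.
print(heating_should_run(68.0, 68.0, currently_on=True))   # False
```

The thermostat's own hallway reading never appears in this logic, which is the point: only the sensor in the room you care about decides when the heating runs.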

The beauty of this method is that you can add further temperature nodes to your home and take an average across all of them to make things even more accurate. You can also place them in different rooms and have your heating dependent on your living room temperature during the day and your bedroom temperature during the night.
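Both ideas can be sketched in Python. The room names, the 7:00 and 22:00 switchover times, and the function names are all assumptions chosen for this example, and a real setup would read these values from your smart home system instead of a hard-coded dictionary.

```python
from datetime import time
from statistics import mean
from typing import Optional


def average_temperature(readings_f: dict[str, Optional[float]]) -> float:
    """Average the available sensor readings, skipping sensors with no data."""
    valid = [r for r in readings_f.values() if r is not None]
    if not valid:
        raise ValueError("no sensor readings available")
    return mean(valid)


def controlling_room(
    now: time,
    day_start: time = time(7, 0),     # assumed switchover times
    night_start: time = time(22, 0),
) -> str:
    """Pick which room's sensor should drive the heating at this time of day."""
    return "living_room" if day_start <= now < night_start else "bedroom"


# A sensor that has dropped offline (None) is ignored in the average.
readings = {"living_room": 66.0, "bedroom": 64.0, "office": None}
print(average_temperature(readings))   # 65.0

print(controlling_room(time(12, 0)))   # living_room
print(controlling_room(time(23, 30)))  # bedroom
```

Skipping missing readings matters with battery-powered sensors, since a single flat battery would otherwise break the average for the whole house.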

Save even more money with your smart thermostat

Smart thermostats can save you money, but the all-in-one design of popular models isn’t the most efficient. Some models offer additional remote temperature sensors, but these are often fairly expensive. With the addition of a cheap temperature node, you can make your heating more accurate and save yourself even more money.