Microsoft is rolling out a useful feature for Office users this week. The company has introduced Agent Mode in Word, Excel, and PowerPoint. Agent Mode is a more capable version of the Copilot experience, and Microsoft describes the approach as "vibe working."

Agent Mode is now the default experience for Microsoft 365 Copilot and Microsoft 365 Premium subscribers. It is also available on Microsoft 365 Personal and Family plans.

What’s Agent Mode in Microsoft Copilot, and how is it different?

Until now, Copilot in Office apps has largely been a passive assistant. It could answer questions but struggled to take direct action inside your documents.

Sumit Chauhan, President of the Office Product Group at Microsoft, acknowledged this gap. She noted that when Copilot first launched, the underlying AI models simply weren’t capable enough to command the applications directly.

Models have shown significant improvement in instruction following and multi-step reasoning over the past year. Agent Mode is built on those improvements and can now execute complex edits without losing your original intent.

What can Copilot Agent Mode actually do in Word, Excel, and PowerPoint?

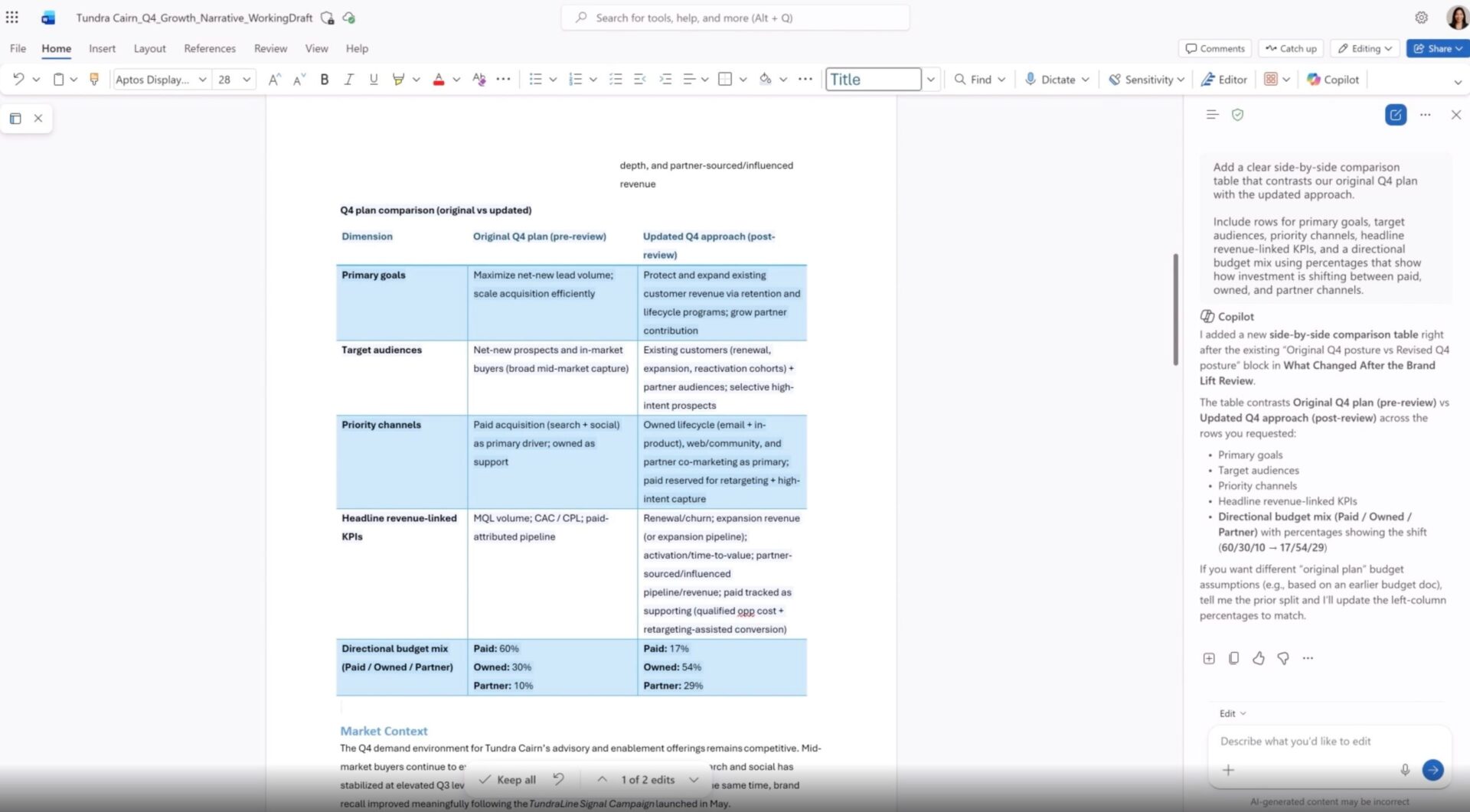

Quite a lot, actually. A sidebar shows you every step Copilot is taking in real time, so you’re never left guessing what it changed. In Word, it can draft, rewrite, restructure, and adjust tone. In Excel, it makes changes directly inside your workbook, adding formulas, tables, and visuals to turn raw data into actionable insights.

In PowerPoint, it can update existing decks with fresh information while respecting your company's template styling. Early data from Microsoft points to strong uptake in Excel: engagement jumped 67%, satisfaction rose 65%, and new-user retention increased 50%.

Microsoft says deeper editing for complex workflows and more transparency around changes are next on the roadmap. The company has made several Copilot-related moves lately, from launching smarter research tools in Copilot Cowork to cleaning up Copilot's presence in Windows 11 apps, as it doubles down on positioning Copilot as a serious productivity tool.