In short: IBM and Arm announced a strategic collaboration on 2 April 2026 to enable Arm-based software to run on IBM Z and LinuxONE mainframes, the platforms that process the bulk of the world’s regulated enterprise transactions. The partnership targets three areas: virtualisation to host Arm software environments on IBM hardware, security and compliance for regulated industries, and long-term ecosystem interoperability. The goal is to bring the Arm-native AI software stack (frameworks built for cloud platforms from AWS to Google to Microsoft) closer to enterprise data that IBM Z customers cannot move to the public cloud. IBM gave no shipping date, and both companies describe the collaboration as future direction and intent, not products that exist today.

IBM and Arm are joining forces to close the gap between the world’s most widely used AI software stack and the world’s most reliability-critical enterprise hardware. On 2 April 2026, the two companies announced a strategic collaboration designed to let Arm-based software run on IBM Z and LinuxONE mainframes, systems that anchor the transaction processing infrastructure of banks, governments, and regulated enterprises that cannot simply lift their data to the public cloud. The announcement is an acknowledgement from both sides that the enterprise computing market has reached a point where the two architectures must coexist on a single machine.

The problem the partnership is trying to solve

IBM Z and LinuxONE mainframes run on IBM’s s390x architecture. The AI and cloud-native software ecosystem (PyTorch, TensorFlow, llama.cpp, ONNX Runtime, and the container workloads built for Kubernetes) has been developed primarily for x86 and, increasingly, for Arm. By Arm’s own estimates, close to 50% of the compute shipped to major hyperscalers in 2025 was Arm-based, with AWS Graviton, Google Axion, and Microsoft’s Cobalt processors all built on Arm designs. Arm has integrated its KleidiAI libraries directly into PyTorch, ExecuTorch, ONNX Runtime, and a range of other leading frameworks. The result is an ecosystem of AI tooling that runs natively and efficiently on Arm but must be ported to run on s390x, a process that is time-consuming and expensive and that tends to lag behind upstream development.
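To make the architecture mismatch concrete, here is a minimal sketch that is not part of either company’s announcement: every Linux executable records its target instruction set in the `e_machine` field of its ELF header, and a kernel will only run it natively when that field matches the host. The constants are the standard ELF values (`EM_X86_64` = 62, `EM_S390` = 22, `EM_AARCH64` = 183); the helper names `elf_machine`, `runs_natively` and `fake_header` are hypothetical, chosen for illustration. Note that s390x binaries are big-endian while Arm64 Linux binaries are little-endian, which is one reason porting is more than a recompile.

```python
import platform
import struct

# Standard ELF e_machine values (subset), as defined in the ELF spec / <elf.h>.
EM_NAMES = {
    62: "x86_64",    # EM_X86_64
    22: "s390x",     # EM_S390: IBM Z
    183: "aarch64",  # EM_AARCH64: 64-bit Arm
}

def elf_machine(header: bytes) -> str:
    """Return the instruction set an ELF binary was compiled for."""
    if len(header) < 20 or header[:4] != b"\x7fELF":
        raise ValueError("not an ELF header")
    # Byte 5 (EI_DATA) gives the byte order: 1 = little-endian, 2 = big-endian.
    order = "<" if header[5] == 1 else ">"
    # e_machine is a 16-bit field at offset 18 in both 32- and 64-bit ELF.
    (machine,) = struct.unpack_from(order + "H", header, 18)
    return EM_NAMES.get(machine, f"unknown({machine})")

def runs_natively(header: bytes, host_arch: str) -> bool:
    """A binary runs natively only when its ISA matches the host's ISA."""
    return elf_machine(header) == host_arch

def fake_header(machine: int, little_endian: bool) -> bytes:
    """Build a minimal 20-byte ELF64 header prefix (hypothetical test helper)."""
    order = "<" if little_endian else ">"
    # e_ident: magic, EI_CLASS=2 (64-bit), EI_DATA, EI_VERSION=1, 9 padding bytes.
    ident = b"\x7fELF" + bytes([2, 1 if little_endian else 2, 1]) + bytes(9)
    return ident + struct.pack(order + "HH", 2, machine)  # e_type=ET_EXEC, e_machine

if __name__ == "__main__":
    arm64_binary = fake_header(183, little_endian=True)  # stands in for an Arm64 Linux binary
    print(elf_machine(arm64_binary))             # aarch64
    print(runs_natively(arm64_binary, "s390x"))  # False: the ISA tags do not match
    # On a real Linux host, platform.machine() reports e.g. "s390x" or "aarch64".
    print(platform.machine())
```

The check only compares instruction-set tags; it says nothing about how the planned IBM-Arm virtualisation layer will bridge the gap, which neither company has specified.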

For enterprises running IBM Z as their system of record (processing transactions, holding customer data, running compliance-sensitive workloads), the practical consequence is a widening gap. AI inference needs to happen close to the data, and the data lives on the mainframe, but the AI frameworks are built for a different architecture. The partnership’s stated purpose is to close that gap without forcing enterprises to choose between their existing infrastructure and access to the modern AI software stack.

Three focus areas, one caveat

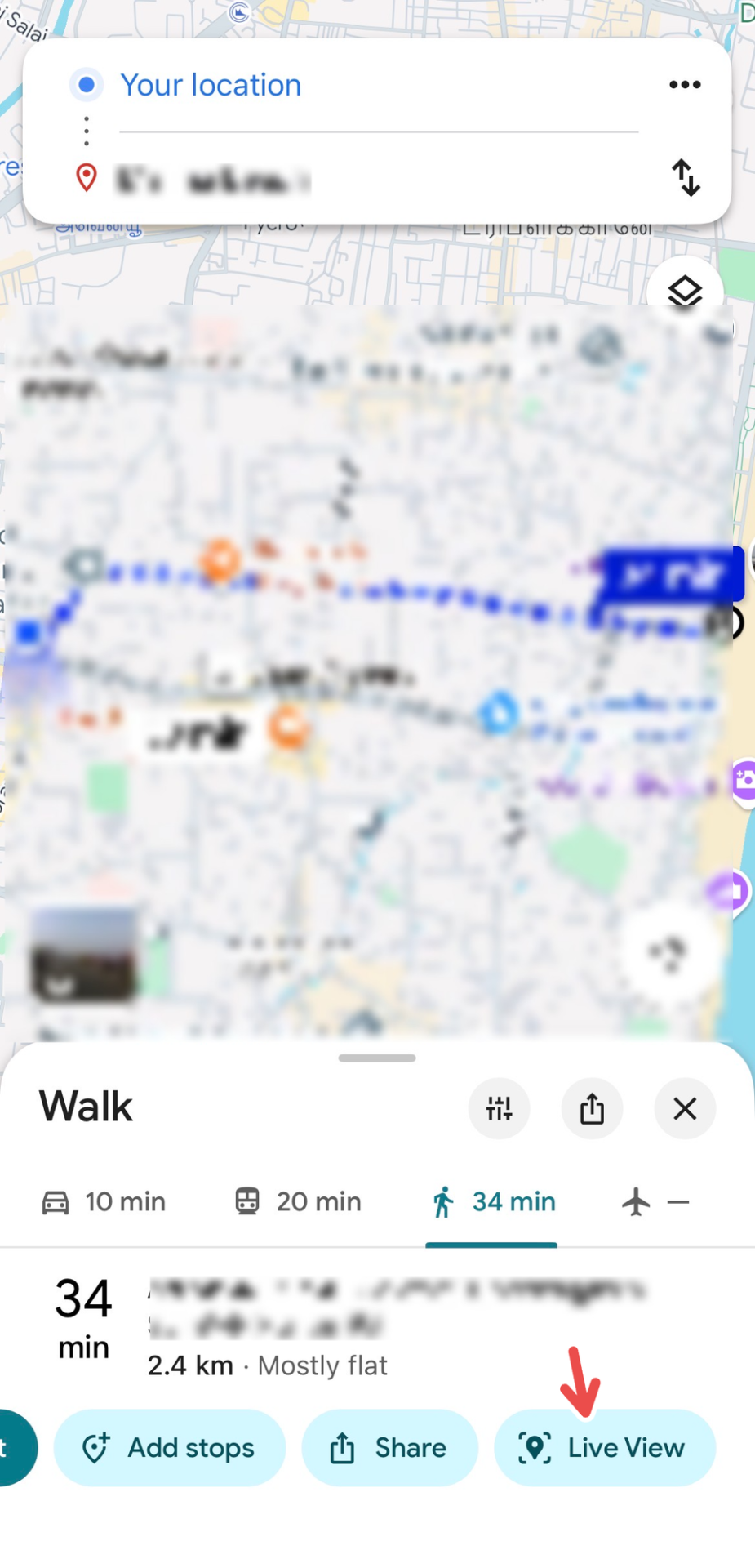

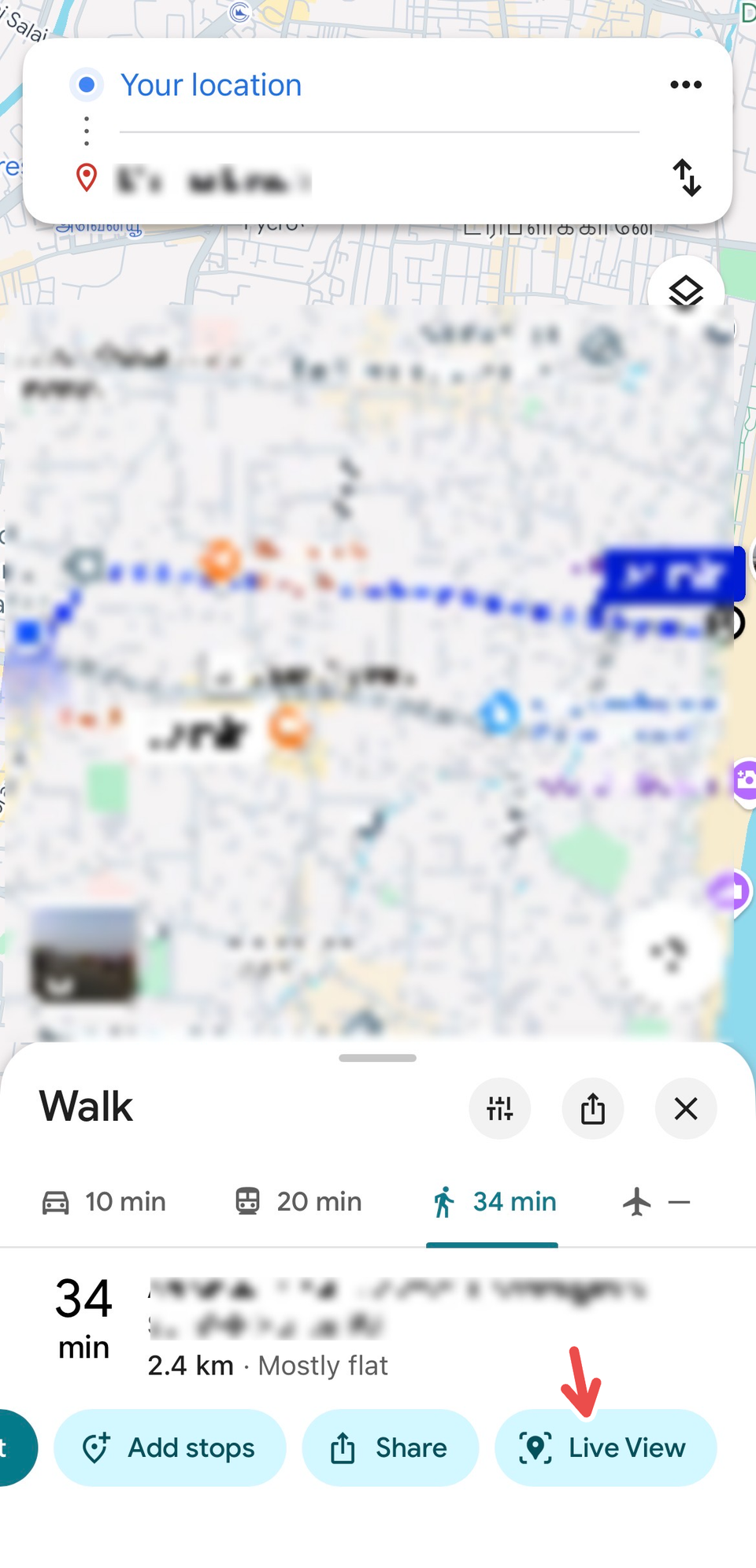

IBM and Arm have organised the collaboration around three workstreams. The first is virtualisation: building tools that allow Arm-based software environments to run within IBM Z and LinuxONE platforms without requiring applications to be ported to s390x. The second is security and compliance: ensuring that Arm workloads running on IBM hardware meet the data residency, encryption, and availability standards that regulated industries (banking, government, healthcare) are required to maintain. The third is long-term ecosystem interoperability: creating shared technology layers so that enterprises have more software options across both platforms as the collaboration matures.

The important caveat, stated plainly in IBM’s press release, is that none of this is shipping yet. IBM said: “While it’s early days to share specifics, our intent is that the same features and qualities such as security, performance, resilience and cost-effectiveness that distinguish IBM Z and LinuxONE will be available to Arm64 workloads.” No shipping date was given. No technical specifications were published for the planned dual-architecture systems. The statements from both companies represent goals and intended direction, not products available for procurement.

The hardware IBM is working with

The announcement lands against a hardware backdrop that IBM has been building for several years. The IBM z17 mainframe, which reached general availability in June 2025, is built around the Telum II processor: eight cores running at 5.5GHz, 360MB of L2 cache, and a 50% improvement in AI inference throughput over its predecessor, the z16. IBM says the z17 can handle more than 450 billion AI inference operations per day. The IBM Spyre Accelerator, which became commercially available for z17 and LinuxONE 5 systems on 28 October 2025, adds 32 AI-optimised cores per card, support for int8 and fp16 data types, up to 1TB of memory across the system, and a maximum power draw of 75W per card. It is designed to run large language models directly on-premises, avoiding the latency and data-transfer costs of cloud-based inference.
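To put IBM’s daily figure in more familiar units, here is a back-of-the-envelope conversion. It assumes, purely for illustration, that the load is spread evenly across a 24-hour day; IBM did not state a per-second rate.

$$
\frac{450 \times 10^{9}\ \text{operations/day}}{86\,400\ \text{s/day}} \approx 5.2 \times 10^{6}\ \text{operations/s}
$$

On that assumption, "more than 450 billion per day" corresponds to an average of more than roughly 5.2 million inference operations every second.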

The Arm collaboration is, in effect, the software layer being built on top of that hardware investment. IBM has spent years engineering a mainframe that can run AI at scale. The question the partnership addresses is whether the AI software that enterprises actually want to run will be available for it. The scale of investment flowing into AI infrastructure in 2026 has made the Arm ecosystem a default target for cloud AI software, and IBM’s partnership with Arm is its answer to that reality.

What each side gets

For IBM, the collaboration addresses a strategic vulnerability. As AI inference workloads grow and enterprises look to run models closer to their transaction data, IBM Z’s inability to run the native Arm software stack directly creates a friction point that cloud providers, with their natively Arm-optimised environments, do not share. Bringing Arm software to the mainframe is meant to keep IBM Z relevant in the AI era, rather than relegating it to a backend system that processes transactions but cannot run the AI software enterprises are standardising on.

For Arm, the partnership extends its ecosystem into the one major enterprise computing environment it does not yet natively serve. Arm designs power server processors at AWS, Google Cloud, and Microsoft Azure, as well as Apple’s Mac line and most of the world’s smartphones. IBM Z, deployed in the vast majority of the world’s largest banks and governments, has been the notable exception. The enterprise expectation in 2026 is that AI deployments meet the same security and compliance standards as the systems they run alongside, and IBM Z’s regulated-industry credentials give Arm a route into that environment that cloud deployments alone cannot provide.

What the executives said

Tina Tarquinio, chief product officer for IBM Z and LinuxONE, framed the collaboration as a continuation of a long pattern: “This collaboration is a natural extension of IBM’s leadership in hardware and systems innovation. It continues IBM’s pattern of anticipating enterprise needs well ahead of market inflection points. Our aim is to expand software choice and improve system performance while maintaining the reliability and security our clients expect.” Christian Jacobi, CTO and IBM Fellow in IBM Systems Development, added that the partnership “marks the latest step in our innovation journey for future generations of our IBM Z and LinuxONE systems, reinforcing our end-to-end system design as a powerful advantage.” Mohamed Awad, executive vice president at Arm, said the collaboration “extends the Arm ecosystem into mission-critical enterprise environments,” providing organisations with greater flexibility in deploying AI workloads.

The longer read

The surge in cloud AI infrastructure investment over the past two years has leaned heavily towards Arm, and the software tooling has followed: every major AI framework now ships optimised kernels for Arm, and cloud-native development workflows increasingly treat Arm compatibility as a baseline. IBM Z customers have, until now, operated in a parallel world where the same applications require separate porting efforts and where new AI tooling often arrives on s390x months after it is available on Arm and x86. The IBM-Arm collaboration is a structural attempt to collapse that gap: to make IBM Z a first-class citizen of the Arm software ecosystem rather than a platform that catches up later.

Whether it succeeds depends on execution that has not yet been specified. The announcement of intent is the easy part; the virtualisation layer that makes Arm binaries run reliably on s390x-based hardware at enterprise scale, with the security and availability guarantees that IBM Z customers expect, is considerably more difficult. IBM’s track record in delivering backward compatibility and architecture migration tools is strong (the z series has maintained software compatibility across decades of hardware generations), but running a foreign instruction set architecture at production performance is a challenge of a different order. A year in which enterprise AI moved from experimentation to deployment across every major platform has raised the stakes: IBM Z customers are no longer asking whether they will run AI workloads; they are asking when, and on what software. The IBM-Arm collaboration is IBM’s answer to that question. The timeline remains open.