When you delete a file, is it really gone? On a traditional hard drive, often not. But on a solid-state drive, the answer is far more complicated — and for most people, far more final.

Why can’t you recover data from an SSD?

SSDs actively erase data soon after it’s deleted

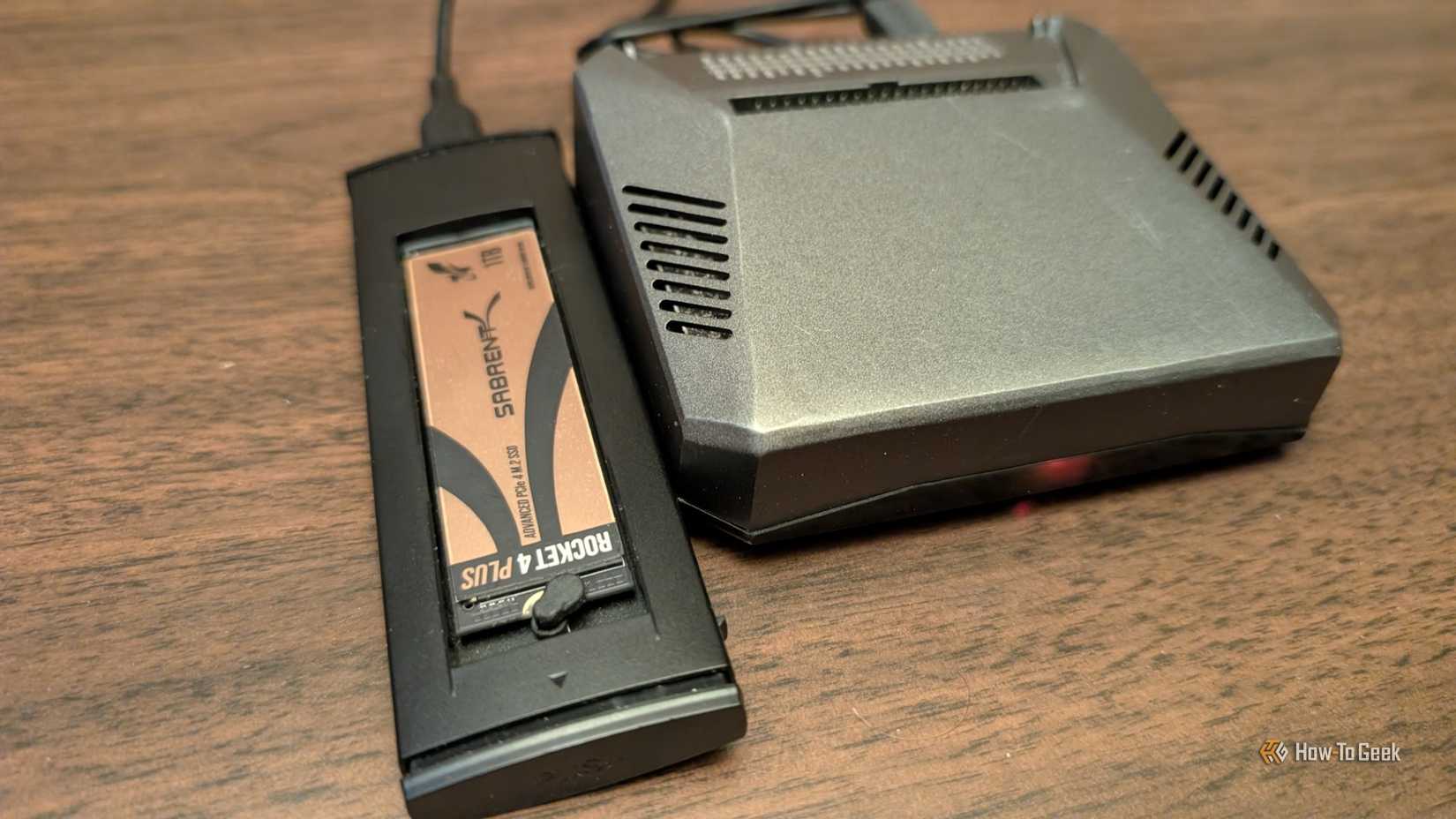

To understand why SSD recovery is so difficult, you first need to understand how these drives handle storage at a fundamental level. Unlike older storage technologies, SSDs store data in NAND flash memory cells, which are organized into pages and blocks. The critical detail here is that while data can be written to an empty page directly, it cannot be overwritten in place. To write new data to a cell that already contains something, the entire block containing that cell must first be erased. This is a physical constraint of the technology itself.
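The erase-before-write rule is easier to see in a toy model. The sketch below is purely illustrative: the `NandBlock` class, its four-page size, and its method names are invented for this example and do not correspond to any real drive firmware.

```python
class NandBlock:
    """Toy model of one NAND block: pages are written once, erased together."""

    def __init__(self, pages_per_block=4):
        # None marks an erased (empty) page that can still be written.
        self.pages = [None] * pages_per_block

    def program(self, page_index, data):
        # A page can only be written while it is in the erased state.
        if self.pages[page_index] is not None:
            raise ValueError("page already written; erase the whole block first")
        self.pages[page_index] = data

    def erase(self):
        # Erasure works on the whole block, never on a single page.
        self.pages = [None] * len(self.pages)


block = NandBlock()
block.program(0, b"old data")
block.program(1, b"neighbour")

try:
    block.program(0, b"new data")   # in-place overwrite is rejected
    overwrite_rejected = False
except ValueError:
    overwrite_rejected = True

block.erase()                       # clears page 1 as well as page 0
block.program(0, b"new data")       # now the write succeeds
```

Note that erasing the block to rewrite page 0 also wipes the neighbouring page, which is why a real drive has to move still-valid data elsewhere before it erases anything.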

To deal with this limitation efficiently, modern SSDs rely on a command called TRIM, which mainstream operating systems have supported for more than a decade. When you delete a file, the operating system doesn’t just mark that space as available in its own records. It also tells the SSD, via the TRIM command, that the blocks holding that file are no longer needed. Depending on the system’s configuration, that notice is sent either right away or in periodic batches. The drive’s background garbage collection then erases those blocks proactively, often within seconds or minutes, so they are clean and ready for new data when needed. Many drives also stop returning the old contents as soon as the TRIM arrives, even before the physical erase has happened. By the time you realize you deleted something important and reach for a recovery tool, the data is frequently already gone at the hardware level. There is no ghost of the file lingering on the drive. The cells have been cleared and are, from a data-recovery standpoint, blank.
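As a rough illustration of that sequence, here is a minimal simulation of a drive that keeps a map from logical addresses to physical pages. Everything in it is an assumption made for the example: the `ToySsd` class, its eight-page size, and the choice to return a zero byte for trimmed addresses. Real controllers are far more complex and differ from one model to the next.

```python
class ToySsd:
    """Toy SSD: a logical-to-physical map on top of a pool of physical pages."""

    def __init__(self, physical_pages=8):
        self.physical = [None] * physical_pages   # raw NAND contents
        self.mapping = {}                         # logical address -> physical page
        self.stale = set()                        # pages waiting for garbage collection

    def write(self, lba, data):
        # New data always lands on an erased page; any older copy becomes stale.
        if lba in self.mapping:
            self.stale.add(self.mapping[lba])
        free = next(i for i, page in enumerate(self.physical) if page is None)
        self.physical[free] = data
        self.mapping[lba] = free

    def trim(self, lba):
        # The OS reports that this address is no longer in use: unmap it now.
        if lba in self.mapping:
            self.stale.add(self.mapping.pop(lba))

    def read(self, lba):
        # In this model, unmapped addresses read back as a zero byte.
        if lba not in self.mapping:
            return b"\x00"
        return self.physical[self.mapping[lba]]

    def garbage_collect(self):
        # Background step: physically erase every stale page.
        for page in self.stale:
            self.physical[page] = None
        self.stale.clear()


ssd = ToySsd()
ssd.write(5, b"secret")
ssd.trim(5)                                  # the file was "deleted"
after_trim = ssd.read(5)                     # the OS-level view is already blank
raw_before_gc = b"secret" in ssd.physical    # bytes still sit in NAND for now
ssd.garbage_collect()
raw_after_gc = b"secret" in ssd.physical     # and now they are physically gone
```

The gap between `trim` and `garbage_collect` is the only period in which the old bytes still exist, and ordinary recovery software cannot reach them there because it can only read through the logical map.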

What’s the difference with a hard drive?

Hard drives delete data very differently from SSDs

On a traditional spinning hard drive, deleting a file is largely a bookkeeping exercise. The operating system removes the file’s entry from the file system index—essentially the table of contents that tells the drive where everything lives—but it leaves the actual data sitting exactly where it was on the magnetic platter. The space is marked as available for future use, but nothing physically changes until new data happens to be written over that same location. This is why hard drive data recovery has historically been so effective. As long as the deleted sectors haven’t been overwritten, the original data remains perfectly intact on the platter, and recovery software can read it directly by scanning the raw disk surface.
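Scanning the raw disk for leftover files is often called file carving. The sketch below shows the basic idea for JPEG images, using the format’s standard start and end markers. The `carve_jpegs` function and the simulated `disk` bytes are invented for this example, and real recovery tools handle fragmentation, false matches, and many more file types.

```python
JPEG_START = b"\xff\xd8\xff"   # JPEG start-of-image marker plus next marker prefix
JPEG_END = b"\xff\xd9"         # JPEG end-of-image marker


def carve_jpegs(raw: bytes) -> list:
    """Return byte runs that look like JPEG files, ignoring any file system index."""
    found = []
    pos = 0
    while True:
        start = raw.find(JPEG_START, pos)
        if start == -1:
            break
        end = raw.find(JPEG_END, start + len(JPEG_START))
        if end == -1:
            break
        found.append(raw[start:end + len(JPEG_END)])
        pos = end + len(JPEG_END)
    return found


# Simulated disk: the index entry is gone, but the bytes are still on the "platter".
fake_photo = JPEG_START + b"\xe0 pixel data " + JPEG_END
disk = bytes(64) + fake_photo + bytes(64)
index = {}   # "deleted": nothing points at the photo any more

recovered = carve_jpegs(disk)
```

Here `recovered` holds the photo’s bytes even though `index` is empty, which is the situation recovery software exploits on a hard drive.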

The contrast with SSDs is stark. Because hard drives use magnetism to store data on physical platters, the information has genuine physical persistence. It stays there passively until something actively replaces it, which could be days, weeks, or even months later, depending on how heavily the drive is used. This gave rise to an entire industry of data recovery services that could reliably retrieve deleted files, formatted partitions, and even data from mechanically damaged drives. SSDs changed this equation dramatically. The combination of TRIM, active garbage collection, and the wear-leveling algorithms SSDs use to distribute writes evenly across cells means that deleted data is not just logically removed. It is physically erased, usually before any recovery tool has a chance to intervene. The underlying architecture that makes SSDs so fast and durable is, by its very nature, hostile to the kind of passive data persistence that makes hard drive recovery possible.

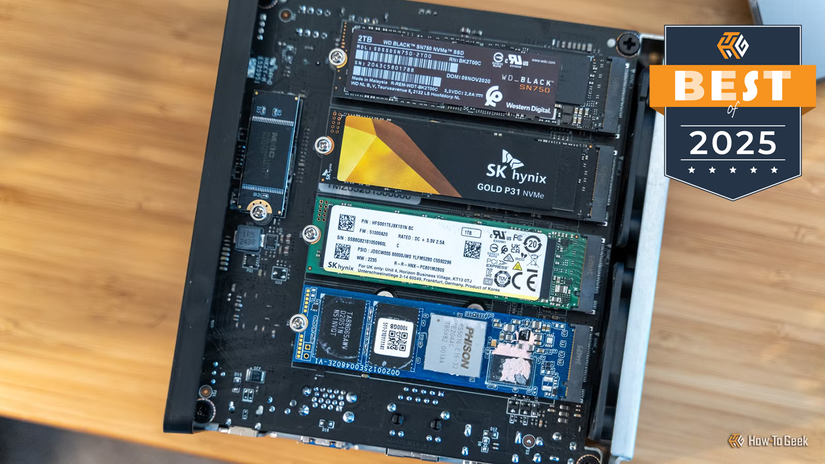

You said “almost,” so is there a way?

Recovery is rare, but not always impossible

In a small number of very specific circumstances, partial recovery from an SSD is technically possible, but the window is narrow, the conditions must align almost perfectly, and success is never guaranteed. The most favorable scenario is one where TRIM was never executed. This can happen on older systems running outdated operating systems that lack TRIM support, or on drives connected via certain interfaces, such as older external USB enclosures, where TRIM commands are not always passed through correctly from the operating system to the drive. In these cases, the SSD behaves a little more like a hard drive: it has no way of knowing the data was deleted, so it leaves it in place until the operating system writes something new to the same addresses.
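On Linux, one rough way to tell whether TRIM requests can reach a drive is to look at the discard limits the kernel publishes under `/sys/block`. The helper below is a sketch under that assumption. The function name `discard_supported` and its `sysfs_root` parameter are invented for this example, and the check only covers Linux, not Windows or macOS.

```python
from pathlib import Path


def discard_supported(device: str, sysfs_root: str = "/sys/block") -> bool:
    """Report whether the Linux block layer advertises discard (TRIM) for a device."""
    queue = Path(sysfs_root) / device / "queue"
    granularity = int((queue / "discard_granularity").read_text().strip())
    max_bytes = int((queue / "discard_max_bytes").read_text().strip())
    # This sketch treats a zero in either value as "discard will not reach the drive".
    return granularity > 0 and max_bytes > 0
```

Calling `discard_supported("sda")` on a Linux machine, where `sda` stands in for whatever name the drive actually has, would return `False` for an SSD in an enclosure that does not pass TRIM through.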

Some enterprise-grade SSDs and certain older consumer models also implement garbage collection less aggressively, or allow it to be disabled, which can create a short recovery window immediately after deletion. Forensic specialists working in law enforcement contexts occasionally exploit these gaps using specialized hardware that reads the drive’s NAND flash chips directly, bypassing both the controller and the file system. This kind of chip-off forensic recovery is expensive, technically demanding, and rarely available to ordinary consumers.

Even when some data is recovered under these conditions, it is almost never complete. Wear leveling constantly shuffles data around the drive’s cells to extend its lifespan, meaning that even intact files may be fragmented across physical locations in ways that make reassembly difficult or impossible. The realistic takeaway for most people is simple: if data is deleted from a modern SSD on a modern operating system, treat it as gone. The best recovery strategy is one you implement before disaster strikes: regular, reliable backups stored somewhere entirely separate from the drive in question.
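A backup only helps if the copy is actually intact. As a minimal sketch of that idea, the snippet below copies a single file to another location and compares SHA-256 checksums afterwards. The helper names `sha256_of` and `backup_file` are invented for this example, and a real backup routine would also need scheduling, versioning, and a destination on separate hardware.

```python
import hashlib
import shutil
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(1024 * 1024), b""):
            digest.update(chunk)
    return digest.hexdigest()


def backup_file(source: Path, backup_dir: Path) -> Path:
    """Copy one file into backup_dir and confirm the copy matches the original."""
    backup_dir.mkdir(parents=True, exist_ok=True)
    target = backup_dir / source.name
    shutil.copy2(source, target)   # copy2 also tries to preserve timestamps
    if sha256_of(source) != sha256_of(target):
        raise IOError(f"backup of {source} does not match the original")
    return target
```

The checksum comparison is the design choice that matters here: it catches a truncated or corrupted copy at backup time rather than on the day the original is lost.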

Deleted on an SSD means deleted for good

On an SSD, deletion is fast, thorough, and almost always permanent. The same architecture that makes these drives quick and efficient makes recovery extraordinarily difficult. Your best protection against data loss is not a recovery tool—it’s a backup made before anything goes wrong.