Chromebooks have been the go-to cheap, basic computers for over 15 years, but it’s no secret that Google has been preparing for something new. We finally have the details on what the next generation looks like: Meet the Googlebook.

Android laptops, for real this time

On the surface, the Googlebook software doesn’t look much different from ChromeOS. The company didn’t give a specific name for the OS in the press release, but it appears to be Aluminium OS, a.k.a. Android for desktop. The interface matches what we saw earlier this year, and Google said these devices run Android apps “on their own.” Chromebooks supported Android apps extensively, but the experience always felt tacked on: Android apps on ChromeOS ran in a virtual machine, for example.

Googlebooks are designed to work seamlessly with other Android devices. In addition to installing Android apps on the laptop itself, you’ll be able to use apps from your phone directly on the Googlebook screen. So, when a notification from your phone pops up on the laptop screen, you can act on it right then and there, using the actual app from your phone.

Gemini takes over the cursor

As with everything Google announced today, Gemini is a big part of Googlebooks, too—maybe even the biggest. These laptops will be “designed from the ground up for Gemini Intelligence,” and it all starts with the “Magic Pointer.”

The Magic Pointer is the cursor, but it’s not like any cursor you’ve used before. Imagine having “Circle to Search” on a laptop, but it can do much more than search. Whenever you wiggle the cursor, Gemini jumps into action with contextual suggestions based on what you’re pointing at on the screen. If you’re pointing at a date in an email, it could bring up a meeting shortcut.

Android’s Gemini-generated widgets are coming to Googlebooks, too. This feature allows you to use natural language to have Gemini build custom widgets for your home screen.

Googlebooks coming later this year

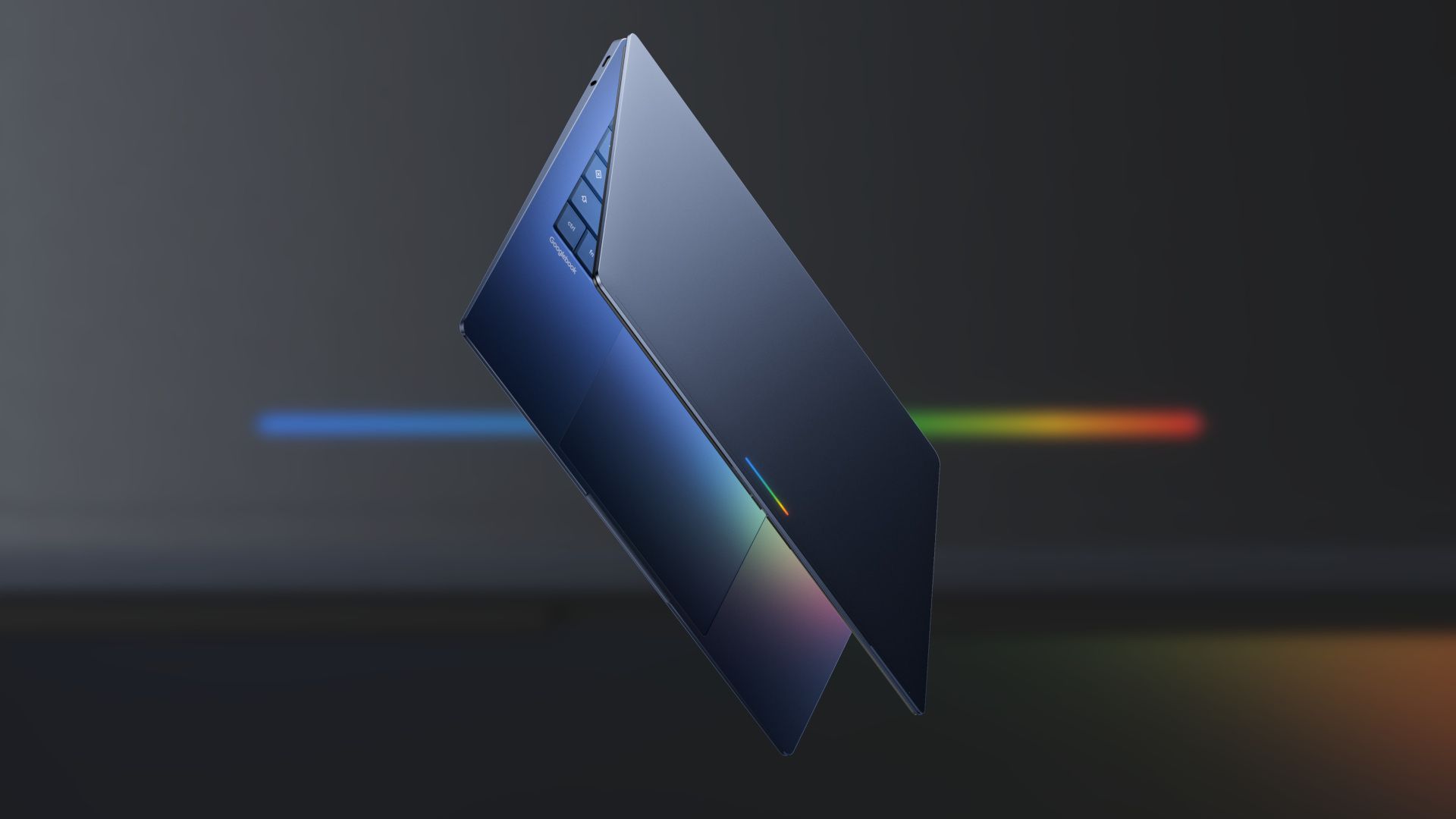

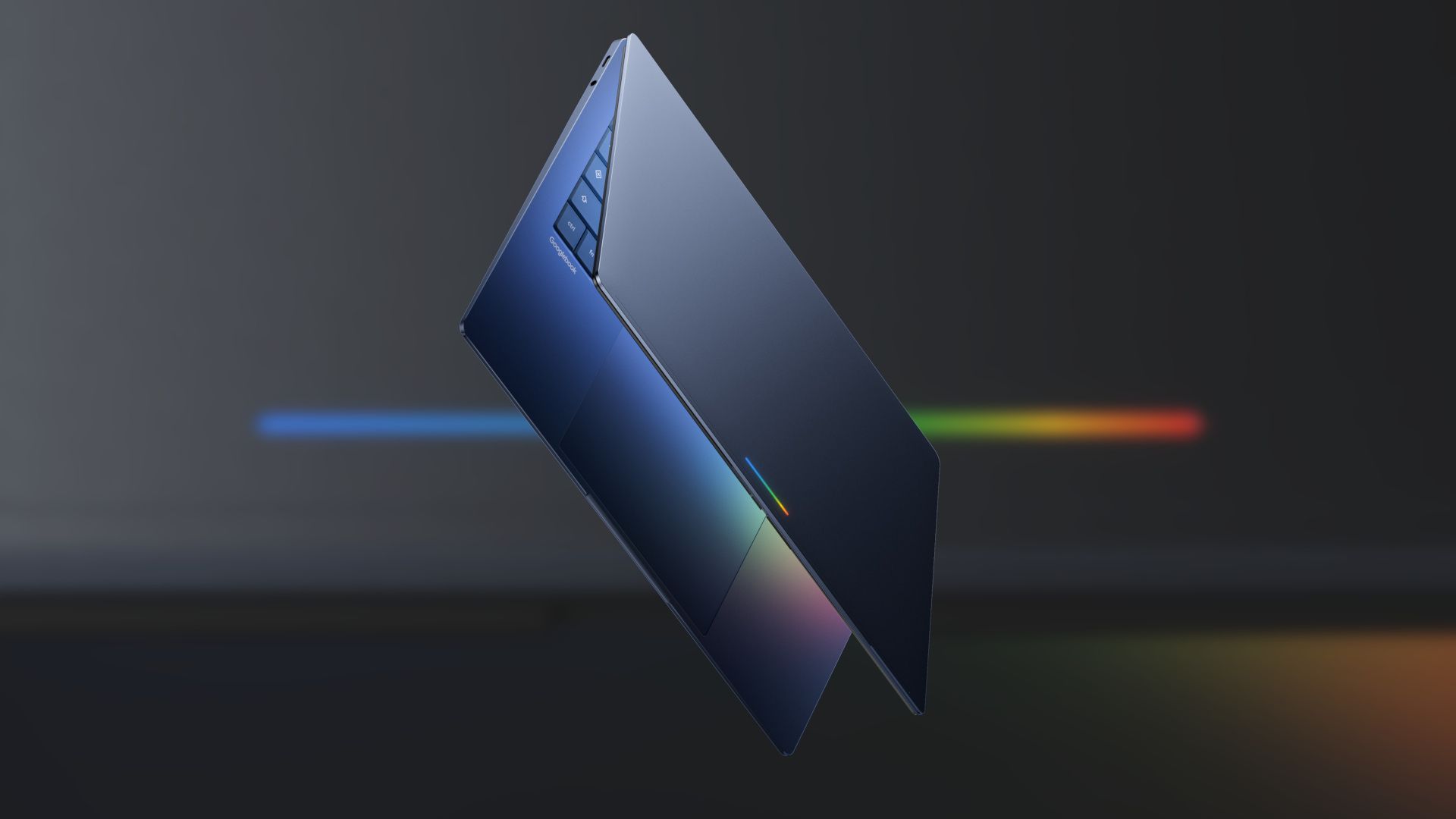

Right now, Google is only giving us a sneak peek at Googlebooks. More information and hardware will arrive later this year. Google says it’s working with Acer, ASUS, Dell, HP, and Lenovo to make the first Googlebooks, and they’ll be “built with premium craftsmanship and materials.” That wording suggests higher-end devices than typical Chromebooks. Googlebooks will be identifiable by an illuminated “glowbar” on the lid.

Source: Google