Google Gemini can do a lot of cool stuff for free, like “Personal Intelligence” and ordering food from Grubhub. However, if you don’t pay for an AI subscription, you’re missing out on one of Gemini’s most useful features.

First and foremost, this is not an ad to get you to pay for Gemini. I’m writing this as someone who uses it for free and doesn’t plan on upgrading any time soon. However, when researching Gemini’s abilities, I stumbled upon Scheduled Actions—a feature that I genuinely think would be useful to have. It’s a shame Google put it behind a paywall.

What are Scheduled Actions in Gemini?

AI prompts meet automation

When we talk about automations and routines, it’s generally the tried and true “if this, then that” method. You choose a trigger, then pair it with some actions that follow. This is great for smart homes and on-device actions, but it can be too rigid for more creative ideas.

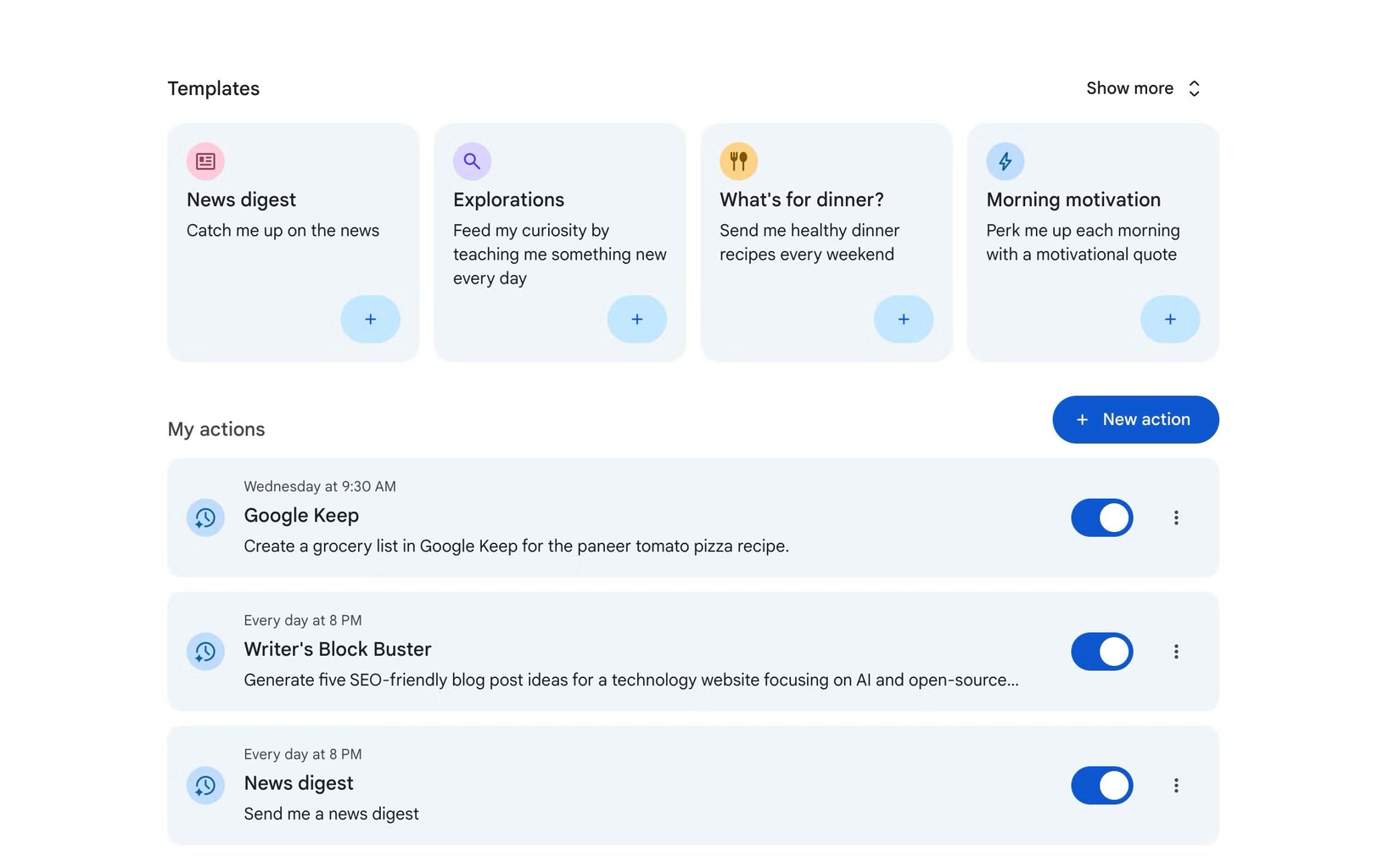

Scheduled Actions follow a similar format, except the automation’s “action” is a Gemini prompt. Rather than manually giving the same commands to Gemini over and over again, you can schedule them to automatically run at specific times on a daily or weekly routine.

When the designated time comes, it’s as if you personally gave Gemini the command in that moment, except you didn’t have to do anything, which is very handy. And since Scheduled Actions live in Gemini, they sync to any device signed in to your Google account.
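If it helps to picture the "time trigger plus prompt" idea, here is a minimal sketch in Python. To be clear, this is not how Google implements Scheduled Actions, and none of these names come from a Gemini API: `ScheduledAction`, `is_due`, `run_due_actions`, and the `send_prompt` callback are all hypothetical stand-ins I made up for illustration. The prompts are borrowed from the examples in this article.

```python
from dataclasses import dataclass, field
from datetime import datetime, time


@dataclass
class ScheduledAction:
    """Hypothetical model: a time trigger paired with a prompt."""
    prompt: str
    run_at: time
    # Weekdays the action runs on (Monday=0 ... Sunday=6); default is every day.
    weekdays: set[int] = field(default_factory=lambda: set(range(7)))

    def is_due(self, now: datetime) -> bool:
        """True when `now` is on a scheduled weekday at the scheduled hour and minute."""
        return (
            now.weekday() in self.weekdays
            and (now.hour, now.minute) == (self.run_at.hour, self.run_at.minute)
        )


def run_due_actions(actions, now, send_prompt):
    """Call `send_prompt` with the prompt of every action due at `now`."""
    return [send_prompt(action.prompt) for action in actions if action.is_due(now)]


# A daily action and a Saturday-only action (times are assumptions).
email_summary = ScheduledAction("Give me a summary of my new unread emails", time(8, 0))
meal_prep = ScheduledAction("Send me healthy meal prep recipes", time(9, 0), weekdays={5})
```

The point of the sketch is the shape: the trigger is just a clock, and the "action" is whatever you would have typed into Gemini yourself.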

Examples of Scheduled Actions

The cool stuff you have to pay for

Google obviously thinks this feature is good enough that people will pay for it, so let’s talk about what Scheduled Actions can do. Don’t think about it in the same way you might approach Google Home routines. Yes, you could technically schedule Gemini to turn some lights on or off, but that’s not using it to its full potential.

Think about all of the apps and services with Gemini integration—that’s the power of Scheduled Actions. Creating these automations is as simple as giving Gemini a command.

Let’s say you want to start your day with a summary of the emails you missed while sleeping. All you have to do is tell Gemini, “Give me a summary of my new unread emails every day at 8 AM.” This will create a Scheduled Action that sends a notification to your devices with a summary every morning.

That same general format can work for a variety of Google services. Gemini could send your Calendar schedule every morning or summarize news stories at the end of the day.

It gets even more interesting if you combine services. Gemini has access to Google Maps as well, so you could ask it to look at your Calendar events and send you a notification each morning that says how much time you’ll spend driving that day.

The last idea involves Google Keep. You probably know how tedious it is to constantly find recipes and add items to a grocery list week after week. For some fresh ideas, ask Gemini to “Send me healthy meal prep recipes every weekend.” When the notification arrives, you can easily read it and ask Gemini to add ingredients to a Google Keep list.

Scheduled Actions can be as simple or complex as a regular Gemini command. Maybe you want the morning email summary to only include emails from people in your contacts. You could also skip the manual review and tell Gemini to add ingredients for a healthy recipe to your Google Keep list. It’s up to you.

Google Gemini is a family of multimodal AI models and an integrated assistant developed by Google. It understands and combines text, images, audio, video, and code. As an AI assistant, it helps with writing, planning, learning, and productivity, and it is integrated into Google Workspace apps (Docs, Gmail) and mobile devices.

Powerful and expensive

Scheduled Actions is only available as part of the Google AI Pro and Google AI Ultra subscriptions. Google AI Pro is $20 per month ($200 yearly), while Google AI Ultra is an astounding $250 per month. Even if you do decide to pay for AI Pro, you’ll be limited to 10 active Scheduled Actions at a time.
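To put those numbers side by side, here is a quick back-of-the-envelope calculation in Python. It uses only the prices and the 10-action cap quoted above; the constant and function names are my own, and actual pricing may vary by region or change over time.

```python
# Prices in US dollars, as quoted in this article.
PRO_MONTHLY = 20
PRO_YEARLY = 200
ULTRA_MONTHLY = 250
PRO_ACTION_LIMIT = 10  # active Scheduled Actions allowed on AI Pro


def twelve_months(monthly_price):
    """Total cost of paying month to month for a full year."""
    return monthly_price * 12


def can_add_action(active_count, limit=PRO_ACTION_LIMIT):
    """True while there is still room under the active-action cap."""
    return active_count < limit


pro_month_to_month = twelve_months(PRO_MONTHLY)       # 240
yearly_savings = pro_month_to_month - PRO_YEARLY      # 40
ultra_month_to_month = twelve_months(ULTRA_MONTHLY)   # 3000
```

So the yearly AI Pro plan saves $40 over paying monthly, and a year of AI Ultra paid monthly comes to $3,000.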

Personally, I can’t justify $20 per month for Scheduled Actions—as cool as it may be. That’s more than every individual media subscription I already pay for. There are plenty of other features included, of course, but Scheduled Actions is a clear headliner for me.

Google will happily let you generate images, draft emails, and manually do all the prompts mentioned above for free, but when it comes to adding value that could improve your day-to-day life, the generosity ends. It’s a harsh reminder that these products exist for one reason: to make money.
