In short: Apple is testing at least four frame styles for its upcoming AI-powered smart glasses, according to a Bloomberg report by Mark Gurman published 12 April 2026. The designs include a large rectangular style similar to Wayfarer frames, a slimmer rectangular style comparable to those worn by CEO Tim Cook, a larger oval or circular frame, and a smaller oval variant. The frames are being made from acetate rather than standard plastic. The hardware uses the N401 chip, a custom processor based on Apple Watch S-series architecture, alongside two cameras: one for photo and video capture and one for computer vision. There is no display in the first version. Apple is targeting the start of production in December 2026, with a public launch expected in spring or summer 2027.

Four styles, one material

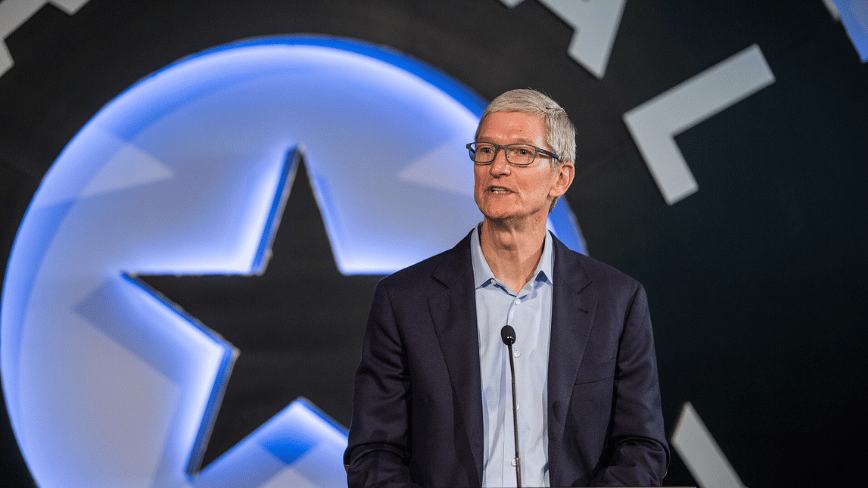

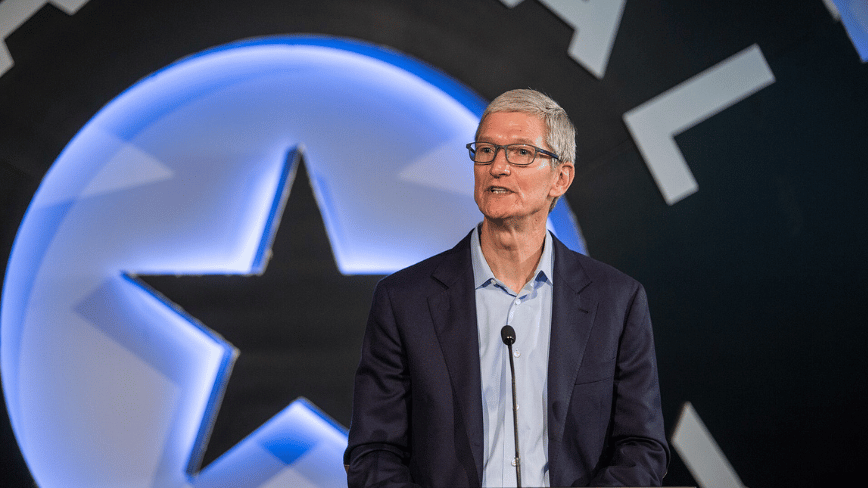

The four frame designs span different aesthetic registers. The large rectangular option resembles classic Wayfarer-style frames, a shape with broad consumer recognition and mainstream appeal. The slimmer rectangular variant resembles the frames Tim Cook wears, which suggests that at least one of the options is aimed at professional settings. The two oval formats range from a larger, more expressive shape to a smaller, minimal design. The breadth of options indicates that Apple has not yet committed to a single visual language for the product and is gauging which shapes appeal to the widest range of potential buyers, consistent with how Apple approached its first watch designs before settling on a single enclosure direction.

The material is acetate, which Gurman's report describes as more durable and luxurious than standard plastic. That choice positions the glasses against Meta's existing Ray-Ban line rather than against budget-tier wearables. Colours confirmed in the testing phase include black, ocean blue, and light brown, with many more options expected by the time a final product is announced. The camera module on the front of the frame uses a vertically oriented oval arrangement surrounded by indicator lights, a configuration that differs from the horizontal lens placement on Meta's Ray-Ban models and is intended to make the device recognisable as an Apple product from a distance. Apple is targeting a weight below 50g and all-day battery life. Secondary coverage has reported a price of approximately $499, though Gurman did not confirm a figure in the 12 April report.

Hardware and intelligence

The N401 chip is a custom low-power processor derived from the Apple Watch S-series architecture, optimised for on-device inference within the thermal and battery constraints a pair of frames can accommodate. The glasses include two cameras: the primary handles photo and video capture; the second is dedicated to computer vision, providing Siri and Apple Intelligence with real-time environmental context without requiring the phone to be raised or unlocked. Microphones and spatial sensors are also integrated. There is no display in the first version, which means all information reaches the user through speakers or through the iPhone screen, and the glasses rely on the iPhone for any computationally intensive processing that cannot be handled on-device.

The primary interface is Siri, which will handle notifications, music playback, phone calls, live translation, and visual intelligence queries about the wearer's surroundings. The glasses will run the overhauled Siri that Apple announced in January 2026, powered in part by a custom Gemini model developed through Apple's partnership with Google. The underlying software has been in preparation for some time: Apple Intelligence accidentally launched in China on 30 March 2026, before the Cyberspace Administration of China had granted regulatory approval. The episode suggested the software was ready while highlighting the compliance hurdles Apple must clear in markets outside the United States.

The market Apple is entering

Meta has commercially validated the smart glasses category Apple is preparing to enter over the past two years. It sold more than seven million Ray-Ban and Oakley AI frames in 2025, more than tripling its 2024 volume in a product category that barely existed three years earlier. Meta has since extended the product into corrective eyewear with prescription Ray-Ban smart glasses, a direct bid for the roughly 69% of the global eyewear market that requires corrective lenses, a segment standard smart glasses have not addressed. Apple's most recent major hardware release was the MacBook Air with M5 in March 2026, part of a cadence of incremental but commercially significant updates that Apple has maintained across its product lines even as the smart glasses project has moved from research into active engineering prototypes.

Google is also preparing to enter the category in 2026 through its Android XR platform, in partnership with Warby Parker and Gentle Monster, with both audio-only and display-equipped variants expected to launch ahead of Apple. Apple's 2027 entry means it will arrive after Meta has established commercial viability and after Google's first models have reached the market. Apple has been in this position before: it arrived after BlackBerry in smartphones and after Fitbit in wearables, and in both cases produced a product that shifted what users expected from the category. Its existing iPhone base, estimated at more than one billion active devices, gives its glasses a built-in distribution channel that Meta, which has no smartphone platform of its own, cannot replicate from a standing start.

A wider strategy

The four frame styles and technical specifications are part of a three-pronged AI wearable plan Gurman reported in February 2026. In addition to the smart glasses, Apple is developing a camera-equipped AI pendant, roughly the size of an AirTag, designed to be clipped or pinned to clothing and to feed visual context to Siri continuously. The company is also developing camera-equipped AirPods. All three devices are intended to work together as ambient input channels for Apple Intelligence, creating a distributed sensory layer that extends Siri's awareness across different positions on the body rather than concentrating it in a single device. The pendant and the camera-equipped AirPods are at earlier stages of development than the glasses; the pendant is a plausible candidate for a 2027 release, while the AirPods are likely to follow in a later year.

Apple's bet on ambient AI hardware reflects a broader pattern in how the technology industry is allocating resources. In March 2026, Meta cut hundreds of jobs across Reality Labs, recruiting, and sales while expanding its investment in Ray-Ban hardware and raising its production targets, an indication that the AI hardware transition requires companies to reallocate resources from traditional areas rather than simply add headcount. In August 2025, TNW reported that the next generation of AI unicorns might not hire anyone at all, describing a structural shift toward companies built with far smaller teams that accomplish more through AI. Apple's wearable strategy is the consumer-facing expression of the same dynamic: hardware that extends individual capability through ambient intelligence, built on an iPhone ecosystem that already provides the processing foundation, and designed to be worn all day rather than carried in a pocket or placed on a desk.