Old Android phones and tablets make better security cameras than cheap IP cameras. Plenty of apps are designed to repurpose a phone as a live security cam by generating a live stream that you can view in a browser. You can also feed that stream into a network video recorder to record or re-stream the feed from the Android device. That way, the phone integrates seamlessly into your existing security system.

Set up the phone

Getting a live camera feed up and running

Start by installing the Android app for generating the live stream. Most apps I found in this category are either paid, closed-source, or riddled with ads. This is the only decent project I could find. It’s called Android IP Camera. It’s a free and open-source app without ads, and it’s actively maintained.

A phone or tablet that runs 24/7 also needs to stay plugged in 24/7. On modern phones, that’s not much of a fire hazard because they have built-in protection features. However, constant charging will degrade the battery faster. To limit that, you can either plug the phone into a smart plug that turns on and off at scheduled intervals, or remove the battery entirely and power the device directly (if you know how to solder).

You’ll need to download the installer file from GitHub and install it manually. Open the GitHub repo and go to the Releases tab. Here, pick the latest universal APK file and download it. Install Android IP Camera on your phone or tablet and launch it.

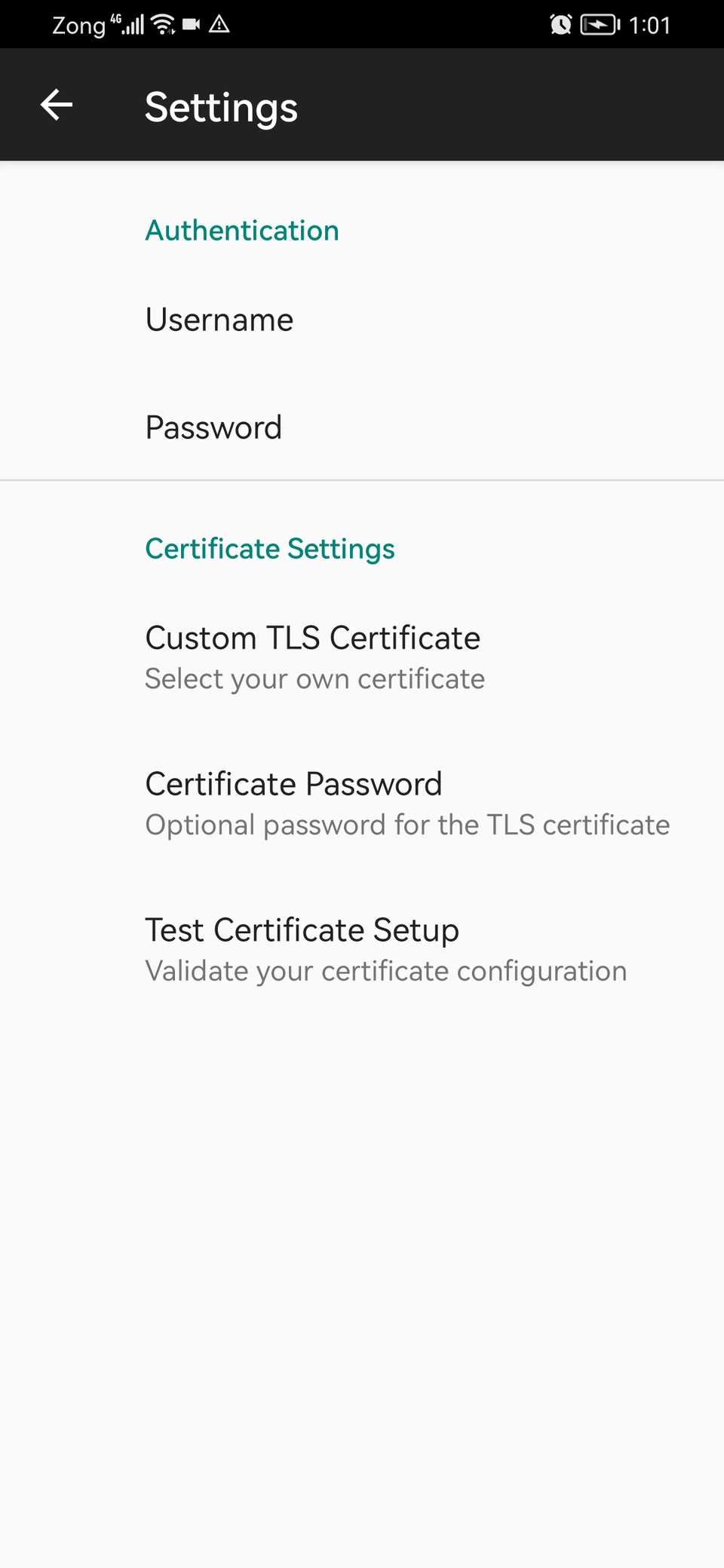

Tap the settings icon and set up a username and password here. The app auto-generates a self-signed HTTPS/TLS certificate to secure the stream. Then go back to the Android IP Camera screen.

You’ll notice it has generated an HTTPS address for you. Enter that address (along with the port) in a browser to view the stream. It’ll ask you to enter the username and password, and the feed cannot be accessed without them. For example, I can open the Android IP Camera interface using the URL below. Substitute it with the one shown in your app.

https://192.168.1.10:4444

You’ll see a warning from the browser. This is normal because the app uses a self-signed cert. Just click the Advanced button and select Continue Anyway.

Once you’ve entered the password, you’ll see the Android IP Camera stream. Here, you can switch between the front and back cameras, turn the flash on and off, and turn the audio stream on and off. You can also change the image resolution, zoom levels, exposure, contrast, and frame delay in this portal.

Make sure the phone and the computer you’re viewing the stream on are connected to the same Wi-Fi network. You’ll also want to assign a static IP address to your Android device, so you don’t have to reconfigure your setup to match changing IP addresses.

To view the raw live stream, you can add /stream to the URL you’re on.

https://192.168.1.10:4444/stream
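If you’d rather confirm the stream is reachable without opening a browser, here is a minimal Python sketch. It assumes the app accepts HTTP Basic authentication (the browser login prompt suggests this, but I haven’t confirmed it), and it skips certificate verification because the certificate is self-signed. The function names are my own, and the address and credentials are placeholders.

```python
import base64
import ssl
import urllib.request


def basic_auth_header(username: str, password: str) -> str:
    """Build an HTTP Basic Authorization header value (assumed auth scheme)."""
    token = base64.b64encode(f"{username}:{password}".encode("utf-8")).decode("ascii")
    return f"Basic {token}"


def insecure_tls_context() -> ssl.SSLContext:
    """TLS context that accepts the app's self-signed certificate.

    Verification is disabled, so only use this on a network you trust.
    """
    context = ssl.create_default_context()
    context.check_hostname = False  # must be turned off before verify_mode
    context.verify_mode = ssl.CERT_NONE
    return context


def probe_stream(url: str, username: str, password: str, timeout: float = 5.0):
    """Open the stream URL and return (HTTP status, Content-Type header)."""
    request = urllib.request.Request(
        url, headers={"Authorization": basic_auth_header(username, password)}
    )
    with urllib.request.urlopen(
        request, timeout=timeout, context=insecure_tls_context()
    ) as response:
        return response.status, response.headers.get("Content-Type", "")


# Example call (placeholder address and credentials):
# probe_stream("https://192.168.1.10:4444/stream", "username", "password")
```

A 200 status means the phone is serving the stream. A 401 error usually points to wrong credentials, or to the app using a different auth scheme than this sketch assumes.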

The app will keep running in the background and streaming a live feed of the camera, even if you turn the screen off.

Setting up a smart recording server

This converts your Android phone into an actual IP camera system

The Android IP Camera web portal is only for live streams. If you actually want to record the streams or connect Android with your existing security system, you’ll need a network video recorder. I’m using Frigate for that purpose.

Frigate is a free and open-source network video recorder (NVR) designed to be self-hosted. You can run it as a container in Docker.

Frigate can record 24/7 in a retention loop of your choice. Or, you can set it up to only record when it detects motion. Frigate has built-in AI detection to detect people, vehicles, and animals in real time. It can also connect with platforms like Home Assistant. If you already have a security system in place, Frigate can restream the Android device as RTSP, so your phone or tablet camera stream looks like a regular IP camera to any software.

To set up Frigate and connect it with the stream coming from the Android device, open the terminal on your server.

Create a new directory for Frigate and, inside it, a new docker-compose.yml file with the following contents.

services:
  frigate:
    container_name: frigate
    restart: unless-stopped
    image: ghcr.io/blakeblackshear/frigate:stable
    shm_size: "128mb"
    volumes:
      - ./config:/config # This is where your configuration file will go
      - ./storage:/media/frigate # This is where recordings will be saved
    ports:
      - "5000:5000" # Frigate's web interface
      - "8554:8554" # RTSP restreaming (optional)
      - "8555:8555/tcp" # WebRTC for low-latency live view

Save the compose file. In the same Frigate directory, create a new config folder and a config.yml file inside it.

mkdir config && cd config

nano config.yml

Paste this into the config file.

mqtt:
  enabled: false

cameras:
  android_phone:
    ffmpeg:
      input_args: -tls_verify 0
      inputs:
        - path: https://username:password@192.168.1.10:4444/stream # replace these details with yours
          roles:
            - detect
            - record
    detect:
      enabled: true
      width: 640
      height: 480

record:
  enabled: true
  retain:
    days: 7
    mode: motion
  events:
    retain:
      default: 14
      mode: active_objects

snapshots:
  enabled: true
  retain:
    default: 14

You’ll need to replace the username and password on the path: line with the username and password you already set on the Android IP Camera app. Also, substitute the stream URL with the one your phone generates.
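One thing to watch for: because the credentials sit inside the URL, characters like @, : or / in your password can break the path: line. Percent-encoding them is the usual fix for URLs. Here is a small Python sketch that builds the URL safely. The function name is my own, and I’m assuming the /stream path and the 192.168.1.10:4444 address from the example above.

```python
from urllib.parse import quote


def build_stream_url(host: str, port: int, username: str, password: str,
                     path: str = "/stream") -> str:
    """Return the stream URL with percent-encoded credentials embedded."""
    user = quote(username, safe="")    # encode every reserved character
    secret = quote(password, safe="")
    return f"https://{user}:{secret}@{host}:{port}{path}"


print(build_stream_url("192.168.1.10", 4444, "admin", "p@ss:word/1"))
# prints https://admin:p%40ss%3Aword%2F1@192.168.1.10:4444/stream
```

Paste the printed URL into the path: line. If your password only uses letters and numbers, the URL comes out unchanged.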

With this config, Frigate records when it detects motion and keeps clips with any movement for 7 days before overwriting them. Clips where a person, animal, or other moving object is detected are retained for 14 days. It also saves a snapshot image as soon as it detects an object and keeps snapshots for 14 days. If you want it to record constantly, change the first mode line (the one under record, then retain) from mode: motion to mode: all.
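Before switching to constant recording, it’s worth estimating how much disk space the retention window needs. This is a rough back-of-the-envelope sketch in Python. The 2 Mbps bitrate is purely a placeholder I picked for illustration, not a measured figure for this app, so check what your own stream actually uses.

```python
def estimated_storage_gb(bitrate_mbps: float, days: float,
                         recorded_fraction: float = 1.0) -> float:
    """Estimate recording size in gigabytes (1 GB = 1,000,000,000 bytes).

    bitrate_mbps      -- average stream bitrate in megabits per second (assumed)
    days              -- retention window in days
    recorded_fraction -- share of the time that is actually recorded
                         (1.0 for constant recording, lower for motion-only)
    """
    seconds = days * 24 * 60 * 60
    total_bytes = bitrate_mbps * 1_000_000 / 8 * seconds * recorded_fraction
    return total_bytes / 1_000_000_000


# Constant recording for 7 days at a placeholder 2 Mbps
print(round(estimated_storage_gb(2, 7), 1))        # prints 151.2

# Same stream, but only recorded 10% of the time (motion-only guess)
print(round(estimated_storage_gb(2, 7, 0.1), 2))   # prints 15.12
```

The gap between the two numbers is why motion-only recording is the gentler default for a small home server.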

Now cd back to the Frigate directory. Run the following command to spin up the Frigate container.

docker compose up -d

Wait until the container is ready and then access the Frigate interface at the following address.

http://your-server-ip-address:5000
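If you want to script the waiting step instead of refreshing the browser, here is a minimal Python sketch that polls the server until it answers. It assumes Frigate exposes an /api/version endpoint on the same port as the web interface, so treat that path as an assumption and check it against your Frigate version. The function names are my own.

```python
import time
import urllib.error
import urllib.request


def frigate_is_up(base_url: str, timeout: float = 3.0) -> bool:
    """Return True if the (assumed) /api/version endpoint answers with HTTP 200."""
    try:
        with urllib.request.urlopen(f"{base_url}/api/version", timeout=timeout) as response:
            return response.status == 200
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, or HTTP error: not ready yet
        return False


def wait_until_ready(check, attempts: int = 30, delay: float = 2.0,
                     sleep=time.sleep) -> bool:
    """Call check() up to `attempts` times, sleeping `delay` seconds between tries."""
    for attempt in range(attempts):
        if check():
            return True
        if attempt < attempts - 1:
            sleep(delay)
    return False


# Example call (replace the address with your server's):
# wait_until_ready(lambda: frigate_is_up("http://your-server-ip-address:5000"))
```

The check is passed in as a function so you can swap in a different readiness test if the endpoint differs on your install.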

You should see the live stream from your Android device show up on the Frigate dashboard. You can also configure alerts and detections here.

Your Android phone can be more than just a passive nanny cam

With a simple camera streaming app, an Android device remains more or less a glorified nanny cam. However, if you run an NVR on your home server and feed the stream into it, your old Android phone will become part of your security system.