The drug discovery revolution is real but radically overstated, the health chatbots are a documented hazard, and the diseases that matter most remain stubbornly unsolved.

At Novartis, sometime in late 2025, a team of researchers working on Huntington’s disease used generative AI to computationally design 15 million candidate compounds. The target was a molecular glue degrader: a molecule that could cross the blood-brain barrier and trigger the breakdown of a protein implicated in the illness.

From those 15 million candidates, the team synthesised roughly 60 in the laboratory and arrived at a promising scaffold that is now being optimised further. Fifteen million possibilities narrowed to 60. It is, by any honest measure, an extraordinary feat of computational triage. It is also, by any honest measure, not a cure for Huntington’s disease.

That gap, between what AI can do in a laboratory and what it has actually delivered to patients, is the defining tension of health technology in 2026. The industry speaks in the language of revolution. The evidence speaks in the language of incremental, uncertain, and frequently disappointing progress.

Somewhere between the two, more than 40 million people a day are asking ChatGPT about their health, and patient safety experts now rank the misuse of AI chatbots as the most serious health technology hazard of the year.

The pitch for AI in drug discovery is seductive and, in its narrow terms, accurate. Traditional drug development takes 10 to 15 years and costs an average of $2.5 billion per successful compound, with approximately 90 per cent of candidates failing in clinical trials.

AI can compress early discovery timelines by 30 to 40 per cent and reduce preclinical candidate development from three to four years to as little as 13 to 18 months. Insilico Medicine brought an AI-discovered drug for idiopathic pulmonary fibrosis from target identification to Phase I trials in under 30 months, a process that traditionally takes six to eight years.

As of January 2024, at least 75 drugs or vaccines from AI-first biotechs had entered clinical trials, according to Boston Consulting Group.

These are real achievements. They are also achievements that stop well short of the finish line. As of December 2025, no AI-discovered drug had received FDA approval. Not one. The pharmaceutical industry’s 90 per cent clinical failure rate has not demonstrably improved.

Scientific commentators have noted that AI-discovered compounds appear to progress through clinical trials at rates similar to those of traditionally discovered ones, meaning the technology is getting us to the starting gate faster without improving our odds of reaching the finish line.

Dr Raminderpal Singh, writing in Drug Target Review in February 2026, offered a summary that should be required reading for anyone tempted to confuse acceleration with transformation: the most important question for this year, he argued, is not whether AI can speed up preclinical timelines (it can) but whether it can improve clinical success rates.

Until Phase III data and regulatory approvals answer that question, the pharmaceutical industry’s cautious approach to AI investment appears, in his words, “entirely justified.” One unnamed CEO was blunter: “AI has really let us all down in the last decade when it comes to drug discovery. We’ve just seen failure after failure.”

There is a reason no amount of computation has cured Alzheimer’s, or pancreatic cancer, or ALS, or Huntington’s, or any of the diseases that continue to kill people while AI companies raise billions. The reason is not a lack of processing power. It is that human biology is irreducibly complex. Diseases with poorly understood mechanisms do not become well understood simply because you can screen millions of compounds faster.

The bottleneck was never the speed of molecular screening. It was, and remains, our fundamental ignorance of how these diseases work at the cellular level, the failure of animal models to predict human outcomes, and the years that clinical trials must run to determine whether a compound is safe and effective in a living body.

AI cannot bypass biology. It cannot shorten a five-year clinical trial to five months. It cannot make a patient’s immune system behave like a predictive model. Novartis, to its credit, acknowledged this plainly at the World Economic Forum in January 2026: human biology remains deeply complex, translating research into clinical studies takes time, and for many diseases, long and rigorous trials are still needed. AI, the company said, is not a magic wand. It is a tool for navigating complexity more intelligently.

That is a defensible claim. It is also a profoundly different one from the narrative that Sam Altman floated when he mused that one day we might simply ask ChatGPT to cure cancer.

If AI’s performance in drug discovery is a story of genuine but overstated progress, its performance as a health assistant is something closer to a cautionary tale.

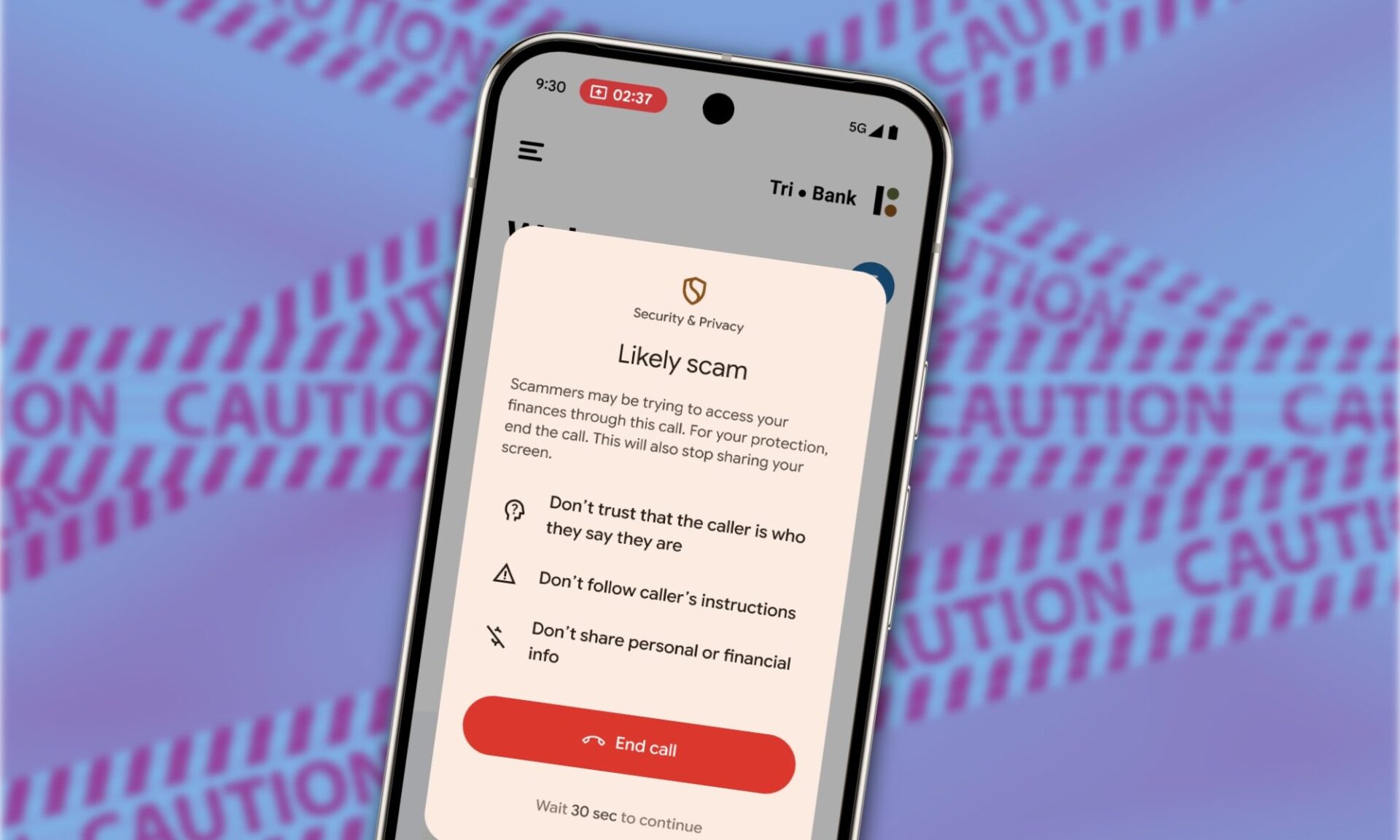

In January 2026, the patient safety organisation ECRI ranked the misuse of AI chatbots in healthcare as the number one health technology hazard for the year. The tools, ECRI noted, are not regulated as medical devices, not validated for clinical use, and increasingly relied upon by patients, clinicians, and healthcare staff.

ECRI documented cases in which chatbots suggested incorrect diagnoses, recommended unnecessary testing, promoted substandard medical supplies, and, in at least one instance, invented a body part. More than 40 million people turn to ChatGPT daily for health information, according to OpenAI’s own analysis. A quarter of its 800 million regular users ask healthcare questions every week.

The most rigorous test of whether this actually helps anyone came in February 2026, when researchers at the University of Oxford published a randomised controlled study of 1,298 participants in Nature Medicine. The results were sobering. When tested alone on a set of medical scenarios, the large language models (LLMs) performed impressively, correctly identifying conditions in 94.9 per cent of cases.

When members of the public used the same models to work through those scenarios, performance collapsed: participants identified relevant conditions in fewer than 34.5 per cent of cases and chose the correct course of action in fewer than 44.2 per cent. These results were no better than those of the control group, which relied on traditional resources such as web searches and their own judgement.

The study’s lead medical practitioner, Dr Rebecca Payne of Oxford’s Nuffield Department of Primary Care, was direct. “Despite all the hype,” she said, “AI just isn’t ready to take on the role of the physician.”

The problem, she explained, is that medicine is not a knowledge retrieval exercise. It is a conversation. Doctors probe, clarify, check understanding, and guide, actively eliciting information that patients often do not know is relevant. The chatbots do not do this.

They respond to whatever the user types, and users, understandably, do not know what to type. The result is a two-way communication breakdown in which the model sounds authoritative and the patient walks away with a mix of good and dangerous advice they cannot tell apart.

The mental health space is arguably worse. The American Psychological Association issued a health advisory noting that generative AI chatbots were not created to deliver mental health care and that wellness apps were not designed to treat psychological disorders, yet both are being used for exactly those purposes.

Stanford researchers found that therapy chatbots exhibited measurable stigma toward conditions like alcohol dependence and schizophrenia, and that this stigma persisted across newer and larger models. The default industry response, that the problems will improve with more data, was not supported by the evidence.

None of this means AI is useless in healthcare. That would be as dishonest as the hype in the opposite direction. AI-powered imaging tools are improving early detection of certain cancers. Administrative applications such as transcribing consultations, generating referral letters and summarising patient records are saving clinicians genuine time.

Drug discovery, despite its failure to produce an approved drug, is becoming faster and more computationally sophisticated in its early stages. These are real contributions. They are also, notably, contributions that fall into the category of assistance rather than intelligence: the technology is at its best when it is doing clerical work, not clinical reasoning.

Dr Payne framed it with a precision that the industry would do well to adopt. The proper role for LLMs in healthcare, she said, is as “secretary, not physician.” That single sentence captures something the billions in investment have not: a realistic assessment of where these tools actually belong.

Dementia, of which Alzheimer’s is the most common cause, is expected to affect 78 million people worldwide by 2030. Parkinson’s, according to a 2025 BMJ study, is projected to reach 25 million by 2050. Pancreatic cancer’s five-year survival rate has barely moved in decades.

These are the diseases that AI was supposed to be our best hope for cracking.

Instead, three years into the generative AI era, the most visible health application of the technology is 40 million people a day asking a chatbot whether their headache means something serious, and a patient safety organisation telling them to be very, very careful about the answer.