Adobe has launched the Firefly AI Assistant, a conversational agent that uses natural language to orchestrate tasks across Photoshop, Premiere Pro, Lightroom, Illustrator, Express, and Frame.io. Previously codenamed Project Moonlight, it enters public beta in the coming weeks, integrates with third-party models including Anthropic’s Claude, and maintains context across sessions. Adobe also announced Firefly Image Model 5, expanded Firefly Custom Models, and the node-based Project Graph workflow system. The launch comes as CEO Shantanu Narayen prepares to step down and as Adobe faces competition from Canva (more than 260 million monthly active users) and Figma.

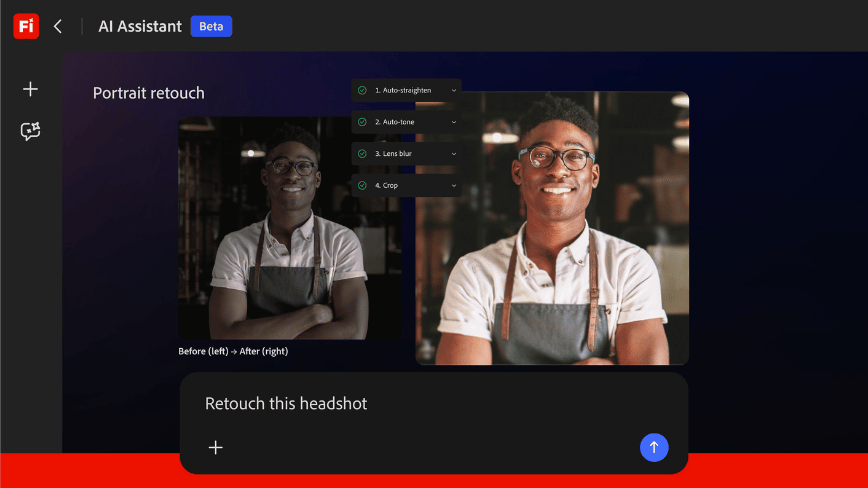

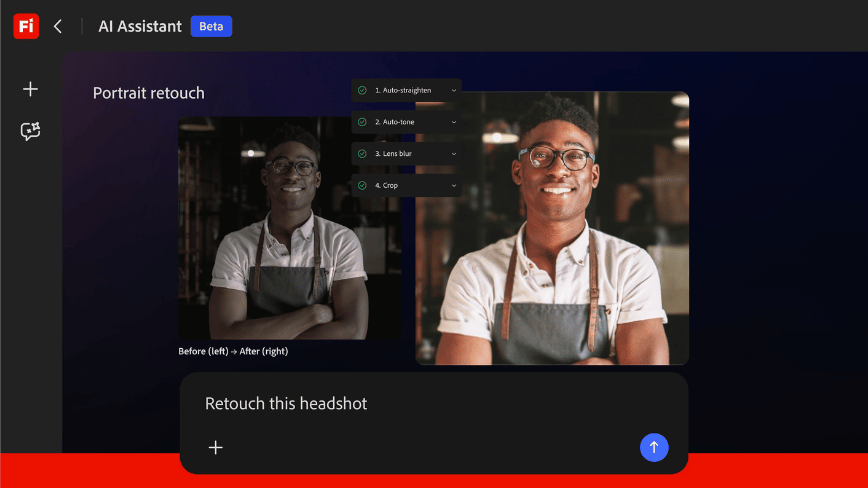

Adobe has launched the Firefly AI Assistant, a conversational agent that can operate across Photoshop, Premiere Pro, Lightroom, Illustrator, Express, and the Firefly web app to complete creative tasks described in plain language. Rather than switching between applications and navigating menus, a designer can describe what they need, such as resizing a set of images for social media, colour-grading footage to match a brand palette, or generating vector variations of a logo, and the system orchestrates the work across whichever Adobe tools the task requires.

The assistant, which enters public beta in the coming weeks, was first previewed under the codename “Project Moonlight” at Adobe MAX in October 2025. It maintains context across sessions, meaning it can remember a project’s parameters, brand guidelines, and previous decisions rather than starting from zero each time. It also integrates with Frame.io, Adobe’s collaborative video review platform, so that feedback and approval workflows feed directly into the assistant’s task pipeline.

Adobe confirmed that the assistant will work with third-party AI models, including Anthropic’s Claude, alongside Adobe’s own Firefly models and partner models from Google, OpenAI, Runway, Luma AI, ElevenLabs, and others. The new video and image editing capabilities announced alongside the assistant are available immediately for customers on Firefly plans.

The agentic turn

The Firefly AI Assistant represents Adobe’s entry into the agentic era of software: the shift from tools that respond to individual commands toward systems that understand intent and execute multi-step workflows autonomously. It is a significant architectural change for a company whose business has been built on selling individual applications, each with its own interface, learning curve, and subscription tier.

The competitive pressure behind the move is visible in Adobe’s financials. The company reported $23.77 billion in revenue for fiscal year 2025, with digital media annual recurring revenue of $19.20 billion, representing 11.5 per cent year-on-year growth, and it is targeting $26 billion in revenue for FY2026. Those are large numbers, but growth has slowed. The stock has declined roughly 43 per cent as investors question whether Adobe’s traditional per-application model can survive in a market where every software company is embedding AI and competitors offer increasingly capable creative tools at a fraction of the price.

Canva now has more than 260 million monthly active users, many of them the small businesses and marketing teams that Adobe’s Express product targets. Figma commands an estimated 80 to 90 per cent market share in UI and UX design; Adobe tried to acquire Figma for $20 billion before regulatory opposition forced it to abandon the deal. Both companies are building their own AI-driven creative assistants, and neither carries the legacy of a product suite designed before large language models existed.

What the assistant actually does

In practical terms, the Firefly AI Assistant works as an orchestration layer sitting above Adobe’s individual applications. A user might ask it to take a raw photograph from Lightroom, apply a specific editing style, generate three variations with different aspect ratios in Photoshop, create a matching set of social media graphics in Express, and prepare the assets for review in Frame.io, all in a single conversational thread. The assistant determines which application handles each step and executes accordingly.

This matters because multi-application workflows are where creative professionals lose the most time. A video editor working in Premiere Pro who needs a title card designed in Illustrator, colour-corrected footage from Lightroom, and audio processing in another Adobe tool currently manages those hand-offs manually. The assistant aims to collapse that friction into a single interface where the apps become invisible and only the outcome matters.

Adobe is also launching Firefly Image Model 5 in public beta, its latest image generation model, and expanding Firefly Custom Models, which let creators train a model on their own image library to capture a specific visual style, character design, or photographic look. Custom models are private by default and reusable across projects, a feature aimed at enterprise teams and brand-conscious studios that need consistent visual output without exposing their assets to third-party training sets.

Project Graph and the workflow layer

Alongside the assistant, Adobe is developing Project Graph, a node-based visual system that lets creators design, connect, and automate AI-powered workflows across Creative Cloud. Where the Firefly AI Assistant uses natural language, Project Graph uses a visual editor in which users wire together AI models, Adobe tools, and effects into reusable “capsules”: portable workflow templates that can be shared across teams and dropped into individual applications.

Project Graph can access tools from across the Creative Cloud suite, including Photoshop, Illustrator, and Premiere Pro, as well as the 30-plus third-party AI models Adobe has integrated through partnerships. It is still in development but represents Adobe’s longer-term bet: that the value of its platform lies not in any single application but in the connective infrastructure between them.

A leadership transition

The AI push arrives as Adobe navigates a CEO transition. Shantanu Narayen, who has led the company for 18 years and drove its transformation from packaged software to cloud subscriptions, announced in March 2026 that he will step down once a successor is appointed. He will remain as board chair. The successor search, led by a special committee under lead independent director Frank Calderoni, is considering both internal and external candidates.

Narayen’s departure creates an unusual situation: the executive who built Adobe’s subscription empire is leaving just as that model faces its most serious structural challenge. His successor will inherit a company with nearly $24 billion in annual revenue, dominant market share in professional creative tools, and a strategic partnership with NVIDIA on next-generation Firefly models. The successor will also inherit a stock price suggesting that the market is not yet convinced the transition to AI-native creative tools will protect the margins that made Adobe one of the most profitable software companies in the world.

The Firefly AI Assistant is the clearest signal yet of the direction. Adobe is betting that the future of creative software is not a suite of applications you learn individually but a conversational partner that knows what you want and which tools to use. Whether creative professionals embrace that vision, or see it as the automation of their craft, will define the next chapter for a company that has shaped how the world makes things for four decades.