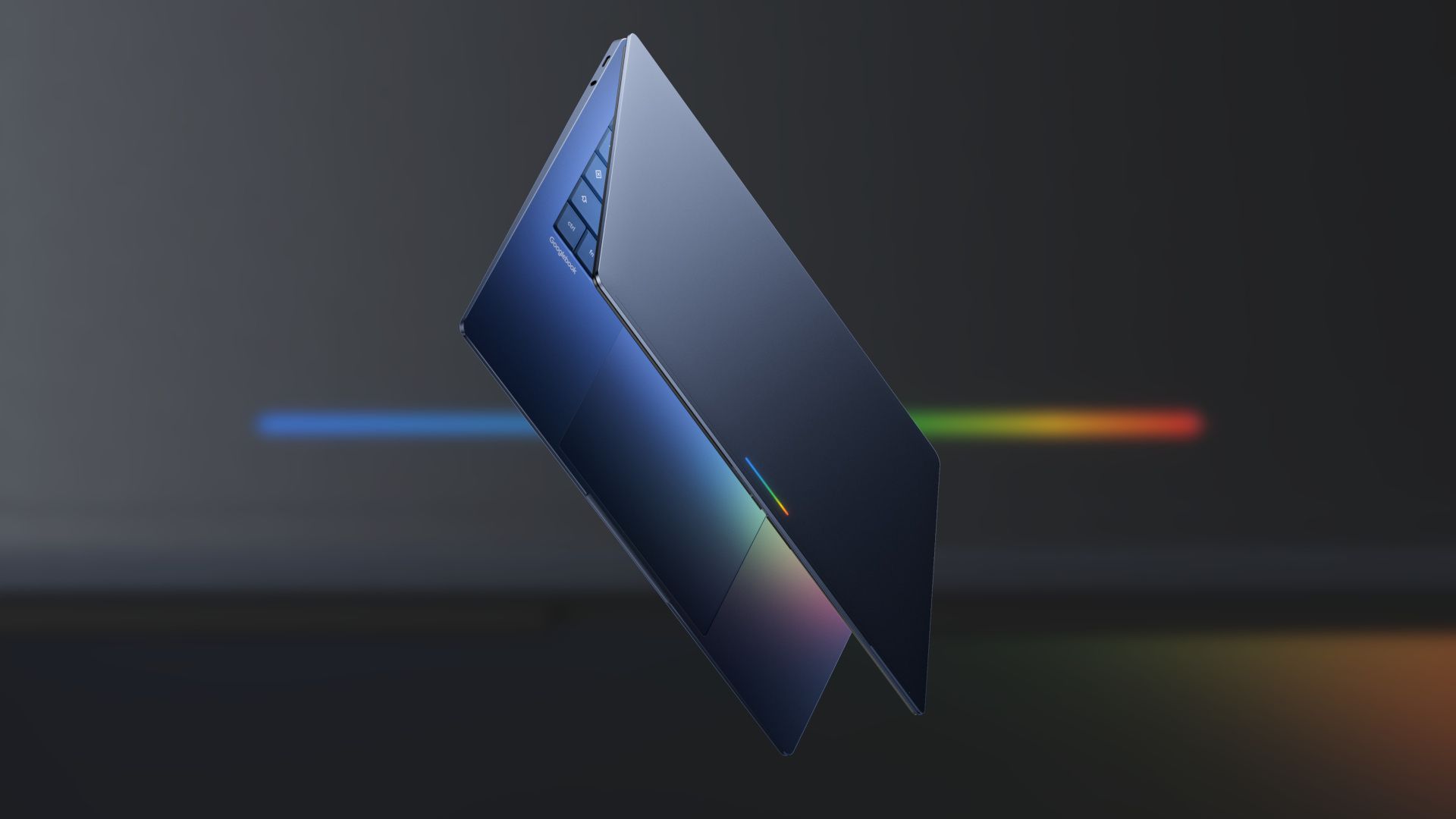

Google wants Gemini to be the brain of your next laptop, and the company has announced a whole new category to make that happen. Dubbed Googlebook, the new laptop platform puts Gemini at the center of the experience, with devices from Acer, Asus, Dell, HP, and Lenovo expected this fall.

What makes it different

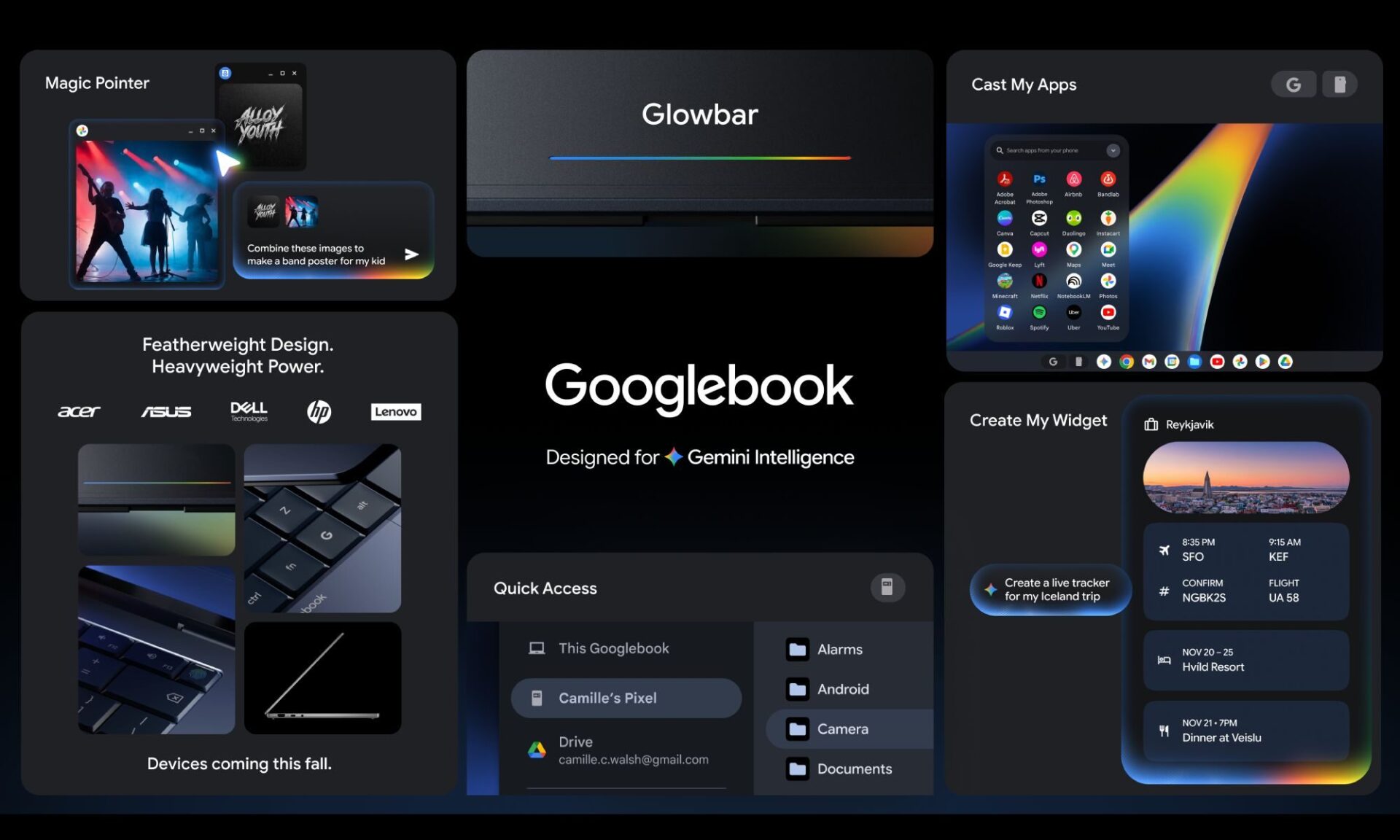

At the core of the Googlebook experience will be Magic Pointer, a feature built with Google DeepMind that brings Gemini directly to your cursor. Wiggle it over anything on screen, and Gemini will surface contextual suggestions.

Point at a date in an email, and it will offer to schedule a meeting. Select two images, and it will be able to composite them into a single image. Best of all, you won't need to install a separate app or type a prompt. The idea behind this feature is to make AI assistance available at every point of interaction on the laptop, not just when you go looking for it.

Googlebooks will also include Create your Widget, which will let you build custom desktop widgets by describing what you want in natural language. The tool will pull information from your Gmail, Google Calendar, and the web to assemble a personalized dashboard. Google says you will be able to organize flight details, hotel reservations, and a trip countdown into a single widget on your desktop.

Seamless Android integration

Google says Googlebooks will work seamlessly with your Android phone. A feature called Quick Access will let you browse, search, and insert files from your phone directly into the laptop’s file browser, with no transfers required. You will also be able to run Android phone apps directly on the laptop, without downloading them or dealing with emulated touch controls.

Every Googlebook will include a glowbar, a light strip on the device body that will serve as both a visual identifier and a functional element. Google says the devices will come in a variety of shapes and sizes and run "a modern OS that's designed for Intelligence," most likely a reference to Aluminium OS, the company's Android-based platform that merges ChromeOS and Android into a single desktop OS. Exact specs and pricing have not yet been announced.

Google has shared few details beyond the software features announced today, so the platform’s real-world appeal will come down to what its manufacturing partners deliver this fall.