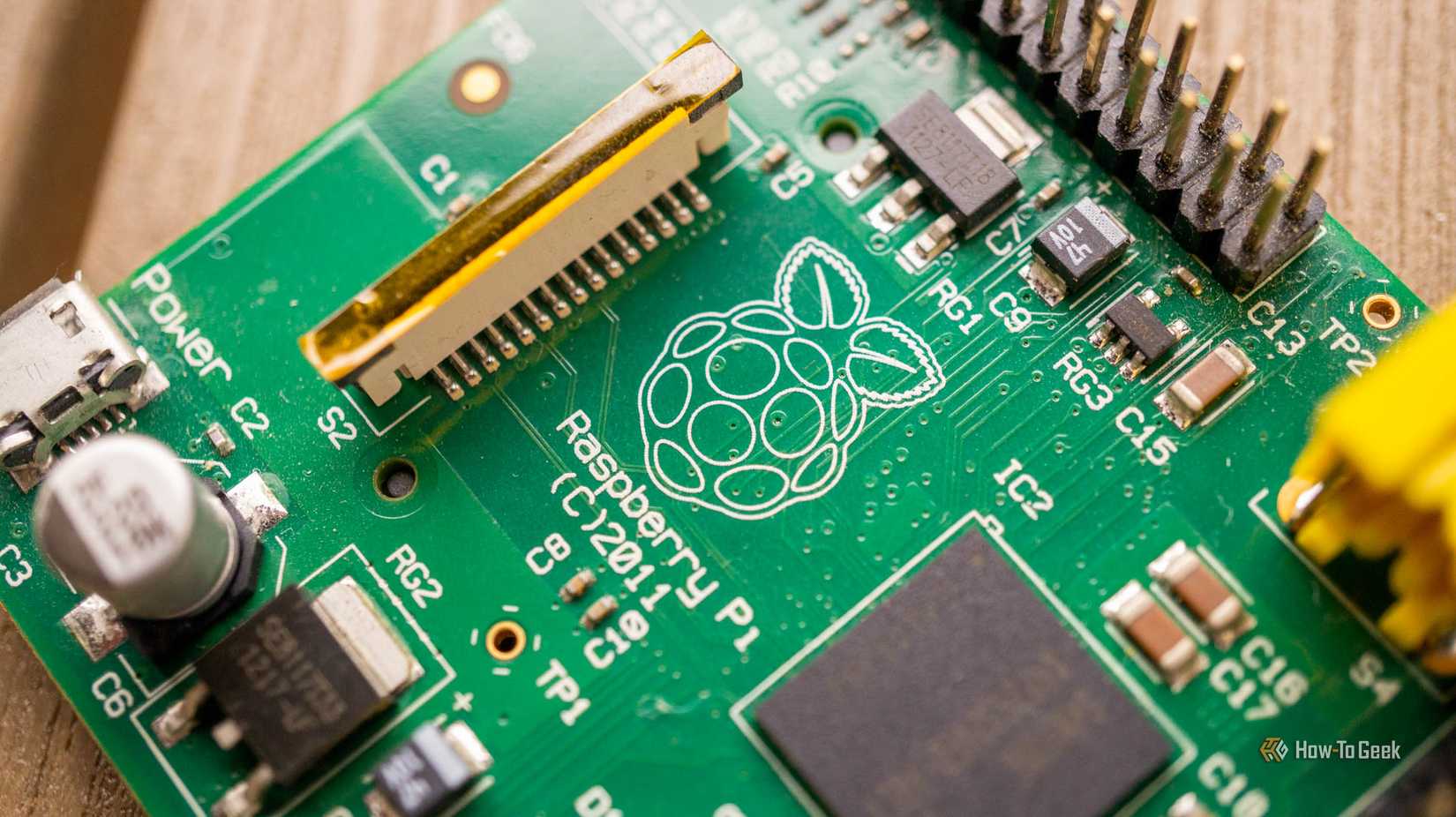

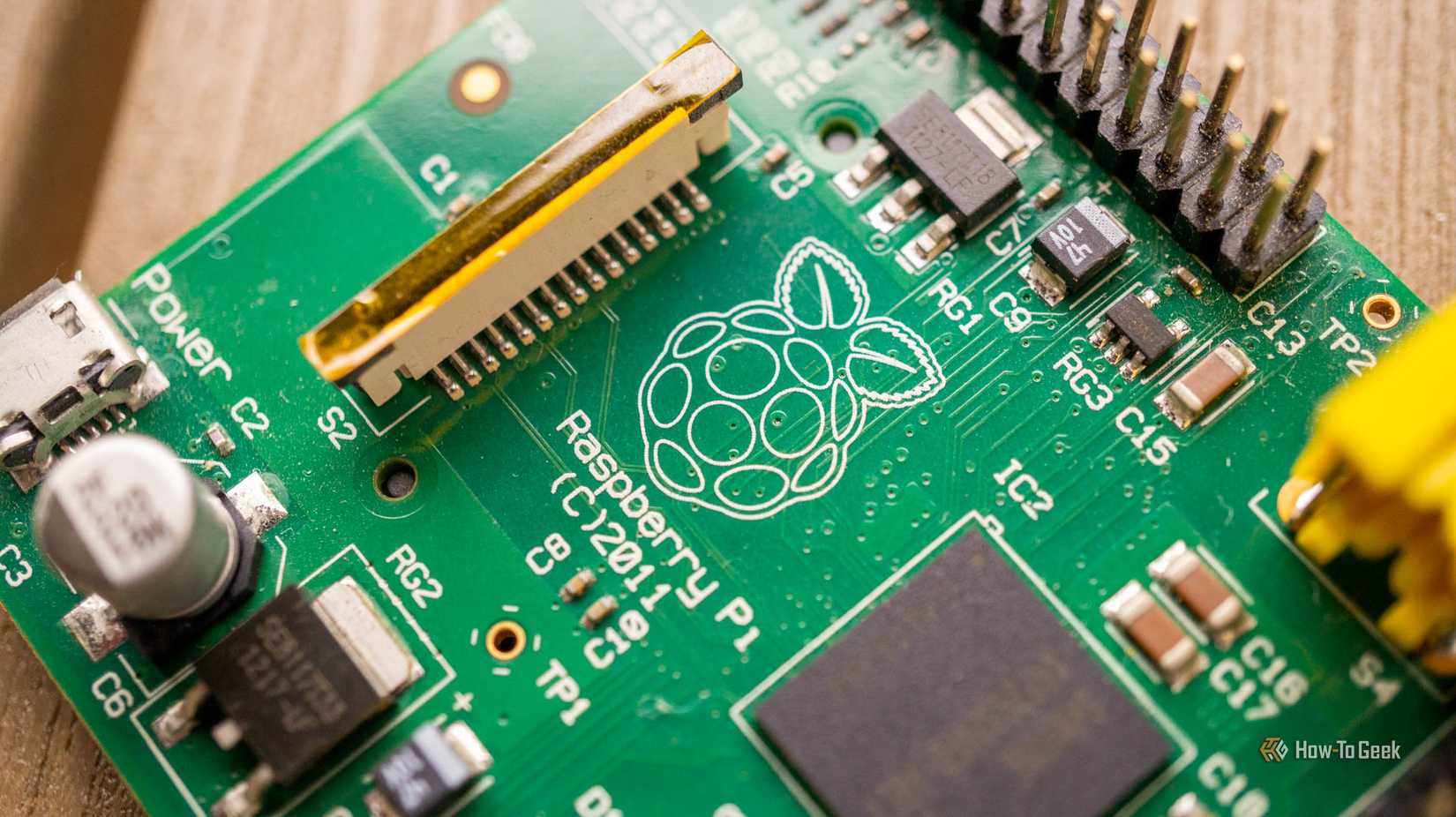

If you’re like me and have a drawer full of Raspberry Pis that are sitting unused, pull them out and turn them into something useful. I chose to create a Docker Swarm cluster with mine, and I think it’d be a great benefit to your homelab, too.

Want an affordable Linux server? Get a Raspberry Pi

They’re compact, can be picked up for cheap, and you might already own a few

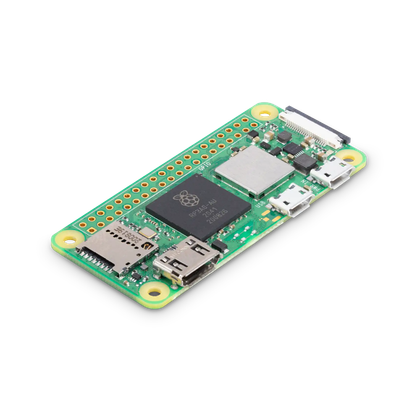

The best part of a Raspberry Pi is that it’s a full-fledged computer in an ultra-small form factor. You’re able to run full operating systems on the fanless and silent single-board computer. That sets it apart from similarly sized microcontroller boards, which run a single firmware program rather than a full operating system.

The Raspberry Pi also uses hardly any power. For example, the latest Raspberry Pi 5 only draws around 2.5W to 5W at idle, and under load (with an SSD attached) it maxes out around 12W. Compare this to the roughly 20W to 40W average draw of a Core Ultra 5 mini PC, and the Pi is far more efficient. The gap is even wider with lower-power Pis like the Pi 4 B, which maxes out around 7W, or the Pi Zero 2 W, which idles at around 0.6W to 1.4W and maxes out at around 3W under heavy load.

So, when building a low-power Docker Swarm cluster, the Raspberry Pi is one of the best systems you can choose.
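To put the wattage figures above in perspective, here is a rough sketch that converts a constant power draw into energy used per year. It assumes the device draws the same wattage around the clock, which real hardware won't do, and the two sample values (5W for a Pi 5 near the top of its idle range, 30W as the midpoint of the mini PC range) are picked purely for illustration. The function name `annual_kwh` is made up for this example.

```bash
# annual_kwh: convert a constant power draw in watts to kWh per year
# (watts * 24 hours * 365 days / 1000). Assumes the draw never changes.
annual_kwh() {
    awk -v w="$1" 'BEGIN { printf "%.1f\n", w * 24 * 365 / 1000 }'
}

annual_kwh 5     # prints 43.8
annual_kwh 30    # prints 262.8
```

Multiply either result by your local electricity rate to get a yearly cost per device.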

- Brand: Raspberry Pi
- CPU: Cortex-A72 (ARM v8)

With the Raspberry Pi 4 Model B, you can create all kinds of fun projects, and upgrade gadgets around your home. Alternatively, install a full desktop OS and use it like a regular computer.

- Brand: Raspberry Pi
- CPU: Quad-core 64-bit ARM Cortex-A53

The Raspberry Pi Zero 2 W is super tiny and super affordable, but it packs enough computing power for a variety of DIY projects. You can use it to create a handheld retro gaming console, for Klipper/Mainsail, a super compact home or media server, and more.

Docker is fully supported on the Raspberry Pi

Most images come with ARM versions that are perfect for the single-board computer

One of the other major reasons to turn Raspberry Pis into a Docker Swarm cluster is that Docker is fully supported on the Pi. While not every container offers ARM support, a vast majority of them do, which makes using Pis as Docker hosts all the better.

The other thing to keep in mind is that, while many containers do support ARM, not every container is meant to run on ARM. Take Plex as an example. It definitely can run on a Raspberry Pi, but the experience won’t be the best as you won’t have access to hardware transcoding, even on newer Pis.

Before you go all-in on using Raspberry Pis for a Docker Swarm cluster, just do a quick sanity check to make sure that the containers you plan to run work well on Pi. If they don’t, or if they aren’t even available, then a Swarm cluster will do you no good. Thankfully, the containers I planned to run work perfectly on ARM, making my Pis a fantastic option for my Docker Swarm cluster.
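One way to do that sanity check from any machine with Docker installed is to look at an image's manifest and see whether it lists an ARM build. The sketch below is a small filter, with the hypothetical name `has_arch`, that reads manifest JSON on stdin and succeeds if the requested architecture appears in it. It assumes the image is published as a multi-arch manifest list (a single-architecture image may not include the architecture field in this output), and `nginx:latest` in the usage comment is only an example image.

```bash
# has_arch: read an image manifest (JSON) on stdin and succeed if the
# given architecture is listed. Defaults to arm64, which is what
# 64-bit Raspberry Pi OS needs.
has_arch() {
    arch="${1:-arm64}"
    grep -Eq "\"architecture\": *\"${arch}\""
}

# Usage (requires Docker; the image name is just an example):
#   docker manifest inspect nginx:latest | has_arch arm64 \
#     && echo "ARM build available"
```

This only tells you an ARM build exists, not that the service performs well on a Pi, so it complements testing the container rather than replacing it.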


It’s easy to put multiple Docker systems into a cluster

Docker Swarm doesn’t have to be as complicated as you think

Now, how do you actually turn a drawer of unused Raspberry Pis into a full-on Docker Swarm cluster? It’s not as hard as you may think, though there are definitely several steps involved. Step one is getting Raspberry Pi OS 64-bit Lite installed. Go with Lite, because there’s no reason for a desktop environment here; terminal-only is just fine.

From there, make sure each Pi is connected via Ethernet, has a proper hostname, and has Docker installed. You can use Wi-Fi, but Ethernet is recommended for a setup like this because it’s more reliable.

sudo hostnamectl set-hostname pi-swarm-1

curl -fsSL https://get.docker.com | sh

sudo usermod -aG docker $USER

sudo reboot

The hostname matters, and each Pi in the Swarm cluster should get its own. While not strictly necessary, unique and identifiable hostnames make it much easier to tell the nodes apart and manage them.

Now, before actually creating the cluster, you should mount shared storage to each Pi. The shared storage ideally would be a NAS, but could also be the manager Pi (that we’ll talk about in a minute). The reason you need shared storage is so that when a container stops running on one Pi for whatever reason, another Pi can pick up where it left off with the same configuration files.
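One thing to watch with an fstab-based mount like the one in this step: appending the entry with `tee -a` adds a duplicate line every time the setup is rerun. A minimal sketch of a guard against that is below. The helper name `add_line_once` is hypothetical, and the fstab entry in the usage comment is the same placeholder entry this step uses.

```bash
# add_line_once: append a line to a file only if that exact line is not
# already present. Creates the file if it does not exist yet.
add_line_once() {
    file="$1"
    line="$2"
    # -x matches the whole line, -F treats it as a fixed string, not a regex
    grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Usage (run as root so /etc/fstab is writable):
#   add_line_once /etc/fstab \
#     "192.168.x.x:/path/to/nfs/share /mnt/nfs/shared nfs defaults,_netdev 0 0"
```

Running it twice with the same arguments leaves a single entry in the file.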

sudo apt install nfs-common -y

sudo mkdir -p /mnt/nfs/shared

echo "192.168.x.x:/path/to/nfs/share /mnt/nfs/shared nfs defaults,_netdev 0 0" | sudo tee -a /etc/fstab

sudo systemctl daemon-reload

sudo mount -a

Now that there’s shared storage, it’s time to actually set up the Docker Swarm cluster. Run the following command on your primary manager node, using that Pi’s own IP address as the advertise address:

docker swarm init --advertise-addr 192.168.x.x

I say manager node because in a Docker Swarm setup, at least one Docker instance has to be the manager, and then you have worker nodes that join the manager.

When you run that command, its output includes a join command with a token in it. Take that join command and run it on the other Pis to join them to the manager node. It’ll look something like this:

docker swarm join --token SWMTKN-... 192.168.x.x:2377

Lastly, once everything is joined up, run docker node ls on the manager to make sure that the other Pis are properly joined.
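With more than a couple of Pis, scanning the node table by eye gets tedious. The sketch below is a small filter, with the hypothetical name `not_ready_nodes`, that takes "hostname status" pairs and prints only the nodes that aren't reporting Ready. It assumes unique hostnames without spaces, as set up earlier, and relies on the `--format` option of `docker node ls` with its `.Hostname` and `.Status` fields.

```bash
# not_ready_nodes: read "hostname status" pairs on stdin and print the
# hostnames whose status is anything other than Ready.
not_ready_nodes() {
    awk '$2 != "Ready" { print $1 }'
}

# Usage on the manager node:
#   docker node ls --format '{{.Hostname}} {{.Status}}' | not_ready_nodes
```

No output means every node the manager knows about is reporting Ready.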

Now that you have the nodes and manager set up, you could just start deploying services. But, I’d take a few more minutes to properly set up the NFS share with Docker using a Docker Volume. You don’t have to do this step, and could just bind mount the NFS share in your Docker deploy commands, but packaging it up in a Docker Volume just makes things a bit tidier.

docker volume create \
  --driver local \
  --opt type=nfs \
  --opt o=addr=192.168.x.x,rw \
  --opt device=:/srv/nfs/shared \
  nfs-volume

Once you have that volume created, you just reference the data as nfs-volume in your deploy commands and Docker handles the rest. Just keep in mind, some services, like MySQL or PostgreSQL, really don’t like NFS storage and prefer local storage or more advanced setups like clustered storage.
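One caveat worth checking on your own cluster: volumes made with the local driver live on the node where they were created, so the volume needs to exist on every Pi that might run the service. One option is to repeat the docker volume create command on each node. Another, sketched below, is to pass the same NFS options inline in the service's `--mount` flag so whichever node runs the task can create the volume on demand. The helper name `nfs_mount_spec`, the service name `web`, the `/data` target path, and the `nginx:latest` image are all placeholders for illustration.

```bash
# nfs_mount_spec: print a --mount value that defines the NFS volume inline.
# Arguments: volume name, path inside the container, NFS server address,
# exported path on the NFS server.
nfs_mount_spec() {
    name="$1"; target="$2"; server="$3"; export_path="$4"
    printf 'type=volume,source=%s,target=%s,volume-driver=local,' "$name" "$target"
    printf 'volume-opt=type=nfs,volume-opt=device=:%s,' "$export_path"
    # The inner quotes keep the comma in "addr=...,rw" from splitting the field
    printf '"volume-opt=o=addr=%s,rw"\n' "$server"
}

# Usage on the manager node (names and image are placeholders):
#   docker service create --name web \
#     --mount "$(nfs_mount_spec nfs-volume /data 192.168.x.x /srv/nfs/shared)" \
#     nginx:latest
```

The generated string mirrors the options from the docker volume create command above, so both approaches point at the same NFS export.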

Now you can deploy containers however you normally do, through Portainer, Docker CLI, Docker Compose, or any other method. The Pis will now work in a cluster and move services around as needed, without you having to do anything else. It turns your cheap collection of Raspberry Pis into a full-on home server setup.

Raspberry Pis offer a ton of homelab value

Being able to take a handful of Raspberry Pis that were sitting unused in a drawer and deploy a full Docker Swarm setup within just a few minutes is a valuable skill, and the cluster itself is a valuable addition to any homelab. Once deployed, it’s extremely simple to spin up an instance of anything you need and have it fail over between systems as you maintain or update a Pi down the road.

Plus, having the experience of setting up a distributed, high-availability system like this with cheap hardware helps prepare you for doing the same thing on more expensive hardware down the road. While I used to run three Raspberry Pis in my homelab, now I have three mini PCs which offer way more power and capability, but the setup procedure was exactly the same.