The ESP32 is an inexpensive and versatile microcontroller that is normally associated with cheap smart home devices and small DIY gadgets. But this sliver of silicon can do much more than you probably thought, as evidenced by projects that push the board to its limit.

Host a web server

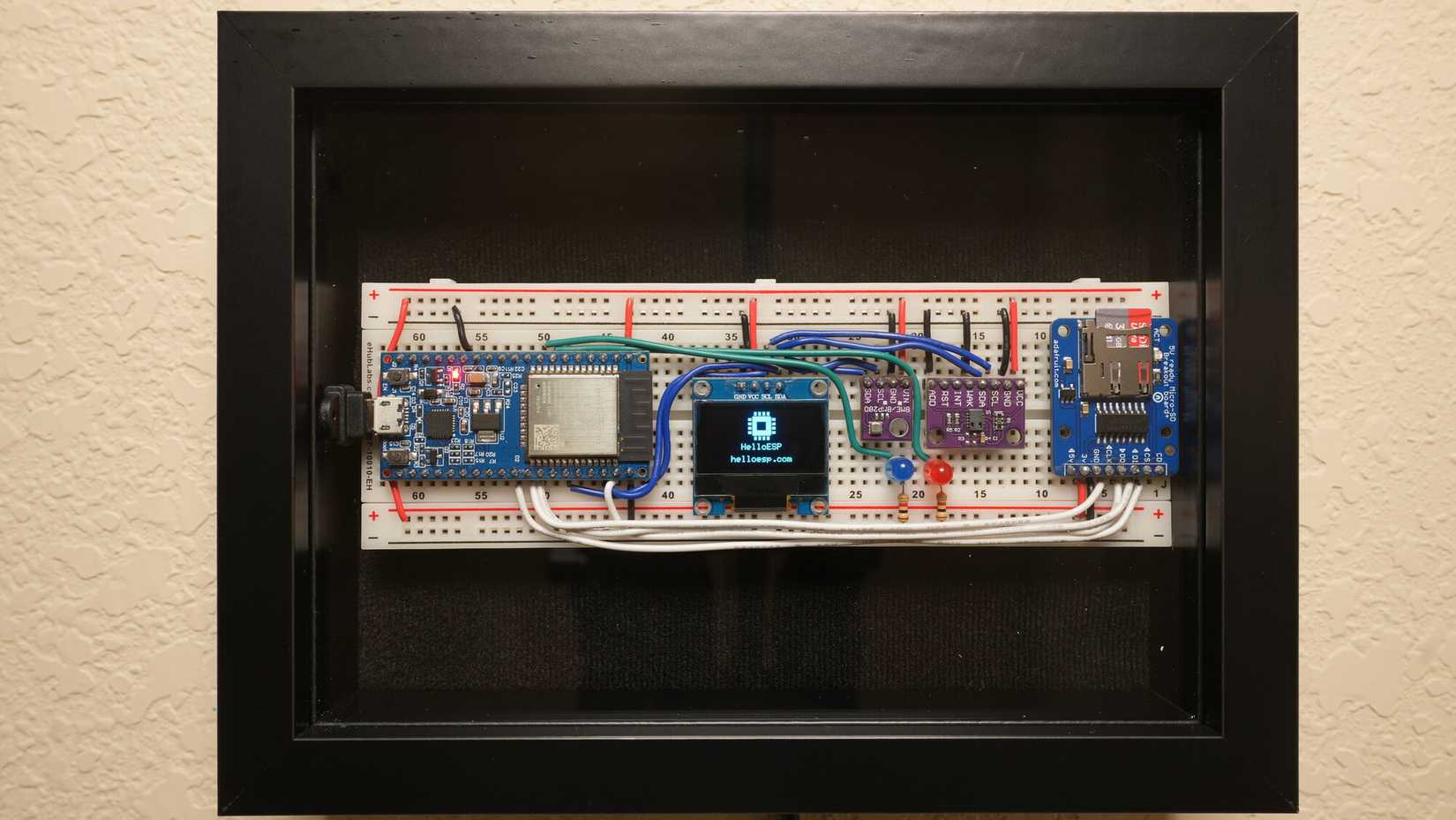

HelloESP is a website hosted on a $10 ESP32 development board with a paltry 520KB of RAM. Initially deployed in 2022, the project exists to see how far a cheap microcontroller can be pushed. The original design lasted 500 days before burning out, but in mid-2026, the author rebuilt it, and it’s now back online for all to see.

You can check out the brief on the project’s GitHub page. The full bill of materials includes the ESP32 DOIT DevKit V1, BME280 and CCS811 sensors that gather environmental data, a 128×64 OLED panel that displays the server’s status, a microSD card, and two LEDs.

The project is completely open source, and you can build something similar yourself. This feels like a project you’d undertake for the sake of it rather than a serious attempt to host a website, but it’s still an impressive feat. The author has implemented some form of redundancy via a Cloudflare Worker that shows an offline page if the server goes down.
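The fallback idea is simple enough to sketch. The real project does this in a Cloudflare Worker; what follows is only a plain Python illustration of the logic, with a hypothetical `serve` function, a placeholder offline page, and an assumed rule that any 5xx response counts as "down".

```python
OFFLINE_PAGE = "<h1>The server is offline</h1>"  # hypothetical placeholder page


def serve(fetch_origin, offline_page=OFFLINE_PAGE):
    """Return (status, body) from the origin, or an offline page if it is unreachable.

    fetch_origin is any callable that returns (status, body) or raises OSError.
    """
    try:
        status, body = fetch_origin()
    except OSError:  # connection refused, timeout, DNS failure and similar
        return 503, offline_page
    if status >= 500:  # assumption: treat server errors as "down"
        return 503, offline_page
    return status, body
```

Passing the fetch step in as a callable keeps the sketch independent of any particular HTTP library.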

A polyphonic audio synthesizer with 80 voices

You don’t need to know a lot about synthesizers to understand that using an ESP32 to power an 80-voice polyphonic synthesizer with crystal-clear audio is an achievement. The ESP32Synth project is capable of rendering more than 364 voices, but the documentation refers to this mode as “The Abyss” because it results in poor latency, audio jitter, and other problems. Hence, 80 is the safe limit before things start falling apart.
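To get a feel for why the voice count matters, here is a minimal sketch of voice mixing in plain Python. It is not ESP32Synth's code: the sample rate, function names, and tuning are assumptions for illustration. Every extra voice adds work to each output sample, which is the cost that eventually shows up as latency and jitter.

```python
import math

SAMPLE_RATE = 48_000  # assumed sample rate in Hz, not taken from ESP32Synth


def mix_voices(frequencies, num_samples, sample_rate=SAMPLE_RATE):
    """Sum one sine oscillator per voice and scale the result to [-1.0, 1.0]."""
    if not frequencies:
        return [0.0] * num_samples
    scale = 1.0 / len(frequencies)  # equal gain per voice so the sum cannot clip
    buffer = []
    for n in range(num_samples):
        t = n / sample_rate
        sample = sum(math.sin(2.0 * math.pi * f * t) for f in frequencies)
        buffer.append(sample * scale)
    return buffer


def to_int16(buffer):
    """Convert float samples to signed 16-bit PCM values."""
    return [max(-32768, min(32767, round(s * 32767))) for s in buffer]


# 80 voices spaced a semitone apart (hypothetical tuning, for illustration only)
voices = [110.0 * 2 ** (i / 12) for i in range(80)]
pcm = to_int16(mix_voices(voices, num_samples=256))
```

Firmware on a microcontroller would typically use wavetables and integer math instead of `math.sin`, but the per-sample loop over every active voice is the same shape.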

For this project, you’ll need a dual-core ESP32 like the classic or S3 model (single-core C3s and S2s aren’t recommended), an external digital-to-analog converter (DAC) that connects to the board over I2S, and some understanding of how a synthesizer works in order to make music.

There are plenty more ESP32-powered synthesizers out there, like MothSynth and esp32_basic_synth, but none that go quite as hard as this one (that I could find).

Responsive radar-powered predictive lighting

The ESP32 is often used to power mmWave presence sensors in the smart home, which are essentially small radar scanners that track the position of people with a high degree of accuracy. This is good for feeding back into a smart home platform like Home Assistant, to prevent the lights from going out while you’re still in the room (among other things).

So what if you could take data from a sensor, process it, and then use it to turn on nearby LED lights like some sort of magic trick? It turns out you can, and you can use a single ESP32 board for both sides of the equation, with a response time in the milliseconds.

Perhaps the most striking of these projects is LightTrack-VISION, thanks to the author’s impressive video demo posted on Reddit. This particular project uses an ESP32-C3 SuperMini and an LD2410B radar sensor, while the similar AmbiSense project (which has better documentation) can also be set up to use multiple ESP32s for added accuracy.
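The core idea behind these projects, turning a measured distance into a position on an LED strip, fits in a few lines. This is a sketch in plain Python rather than code from either project. The function names, the 400 cm maximum range, the 60-LED strip, and the five-LED window are all assumed values.

```python
def distance_to_led(distance_cm, max_range_cm=400, num_leds=60):
    """Map a radar distance reading to the index of the nearest LED."""
    clamped = max(0, min(distance_cm, max_range_cm))  # ignore out-of-range readings
    return min(num_leds - 1, int(clamped / max_range_cm * num_leds))


def lit_window(distance_cm, width=5, max_range_cm=400, num_leds=60):
    """Return the LED indices to light: a small window centred on the target."""
    centre = distance_to_led(distance_cm, max_range_cm, num_leds)
    half = width // 2
    start = max(0, centre - half)
    end = min(num_leds - 1, centre + half)
    return list(range(start, end + 1))
```

For example, `lit_window(200)` lights LEDs 28 through 32 on the assumed 60-LED strip, and the window is trimmed at either end of the strip instead of wrapping around.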

Run local AI models with image recognition

One of the most interesting ESP32-powered smart home projects that I’ve seen recently is a magnetometer-based sensor that measures gas and water meters. It’s highly accurate and senses movement in the mechanism that your utility company uses, but it doesn’t exactly “read” the meter.

But thanks to lightweight AI frameworks like TensorFlow Lite, you can run an AI model on an ESP32 board with a camera that reads a meter and pushes this information back to a platform like Home Assistant via MQTT.
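The MQTT side of such a setup might look like the sketch below, written in plain Python with no network code. The topic names, sensor ID, and the "never goes backwards" sanity check are assumptions for illustration, not taken from the meter reader project. The `homeassistant/sensor/.../config` topic follows Home Assistant's MQTT discovery convention.

```python
import json

STATE_TOPIC = "home/water_meter/state"  # hypothetical topic name
DISCOVERY_TOPIC = "homeassistant/sensor/water_meter/config"  # MQTT discovery pattern


def discovery_payload():
    """Describe the sensor so Home Assistant can create it automatically."""
    return json.dumps({
        "name": "Water meter",
        "unique_id": "esp32cam_water_meter",  # hypothetical ID
        "state_topic": STATE_TOPIC,
        "unit_of_measurement": "m³",
        "device_class": "water",
        "state_class": "total_increasing",
    })


def accept_reading(new_value, last_value):
    """Reject readings that go backwards, a likely sign of a misread digit."""
    return last_value is None or new_value >= last_value


def state_payload(value_m3):
    """Format the reading as the plain-text payload published to STATE_TOPIC."""
    return f"{value_m3:.3f}"
```

The device would publish `discovery_payload()` once to `DISCOVERY_TOPIC`, then publish `state_payload(...)` to `STATE_TOPIC` whenever `accept_reading` passes.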

The meter reader project uses an ESP32-CAM module, but there are other ways you can use this technology. One Instructables tutorial takes a broader approach, using an ESP32-S3 with an external camera module for general image recognition tasks.

Install a “real” operating system with apps

Unlike the Raspberry Pi, ESP32 microcontrollers don’t run a “proper” OS that you can interact with in the traditional sense. Instead, you flash a board with code, and it performs its job until you unplug it or flash it again. But thanks to the work of some very dedicated individuals, you can now install “proper” operating systems on an ESP32, complete with apps.

Tactility and MicroPythonOS are two examples of this in action. Each has a graphical user interface, built-in and external applications, app stores, over-the-air updates, and support for touchscreen input. You can install them on displays with embedded ESP32 microcontrollers, like the Cheap Yellow Display family of devices and LilyGO smartwatches, or build something yourself.

Each can be installed via a web installer using a supported browser like Chrome.

Looking for some more practical projects? Here are seven ESP32 projects you can do in an hour.