When a customer service representative says, “I totally get your frustration,” it feels natural. When a chatbot says the same thing, something feels deeply off. Now, researchers have confirmed that gut feeling with actual data.

As reported by Techxplore, a new study published in MIS Quarterly finds that when AI chatbots express empathy during a service failure, it can actually make things worse for customers, not better.

Why does chatbot empathy backfire?

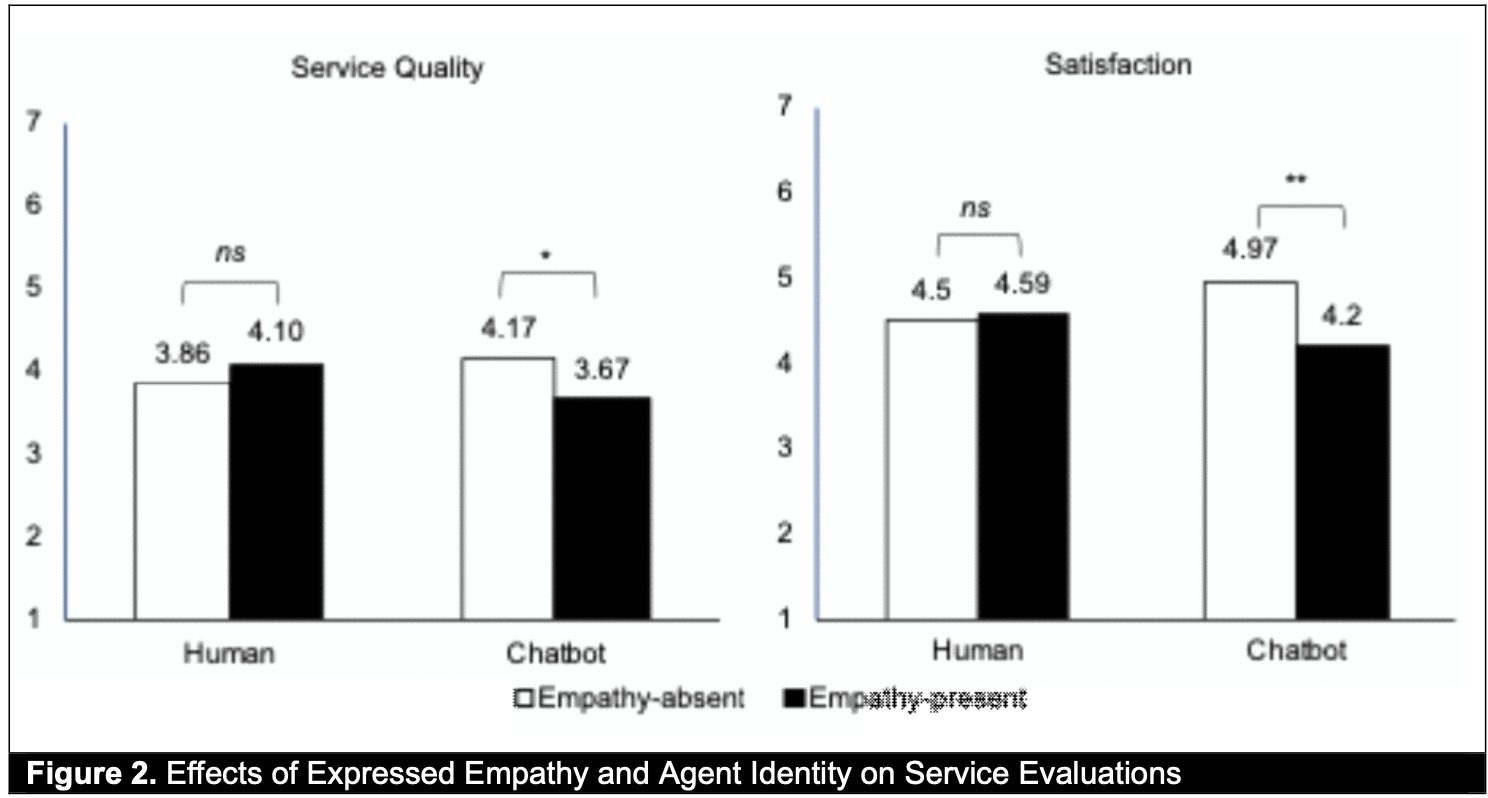

The research team from McGill University, the University of South Florida, and Hong Kong Baptist University ran three separate experiments in which participants interacted with a service chatbot that made mistakes. In some cases, the chatbot responded to its errors with empathetic phrases such as “I really feel your frustration.” In others, it simply moved on without acknowledging the customer’s emotions.

The empathetic responses did not go over well. Instead of calming customers down, they triggered what researchers call “psychological reactance”, an instinctive negative response when people feel their sense of control or freedom is being threatened.

The idea that a machine had analyzed and responded to their emotional state felt invasive rather than comforting. This led customers to feel less satisfied with the overall service.

This aligns with my personal experience. When chatbots like ChatGPT try too hard to be encouraging or understanding, it feels off. It’s akin to the uncanny valley effect I experience when watching AI-generated content. When you know you are chatting with an AI, false emotional support irks you more than a straightforward response would.

So what should chatbots do instead?

The researchers suggest that companies should not automatically equip chatbots with empathy features, especially when handling service failures. The benefits of human empathy do not simply transfer to AI.

Chatbots could instead explore other approaches, such as humor, compliments, or a straightforward apology, that don’t carry the same invasive undertone.

The takeaway is clear. Making a chatbot sound more human isn’t always the right move. Sometimes, it is best to let a bot be a bot.