Over the past decade, AMD has fallen into NVIDIA’s shadow, especially as DLSS and NVIDIA’s push into AI and machine learning reshaped what gamers expect from a GPU.

Despite the market shift, I’ve mostly stayed loyal to AMD’s GPUs because of the value they offer, which is why I picked up the RX 9070 XT. While I’ve been happy with the raw performance, the real reason I bought it was that I believed in AMD’s promising new FSR Redstone suite—and so far, it’s been pretty disappointing.

Redstone’s RDNA 4 exclusivity pushed me into a premature RX 9070 XT upgrade

A future bet that arrived too early

When I built my new PC a year ago, I opted for all-new components and a used AMD RX 6800 XT, simply because it was incredible value for the ~$350 that I paid for it. The card has a ton of raw performance and 16GB of VRAM, which is why it managed to stay relevant years after the RX 7000 series launched.


Two things late last year got me thinking about an upgrade. First, AMD announced it would end full driver support for the card, a move it quickly backtracked on. Second, it launched FSR Redstone exclusively for the newer RDNA 4 (RX 9000 series) cards.

The DRAM shortage, which caused a massive spike in graphics card prices, was the final straw that convinced me to splurge on a new RX 9070 XT.


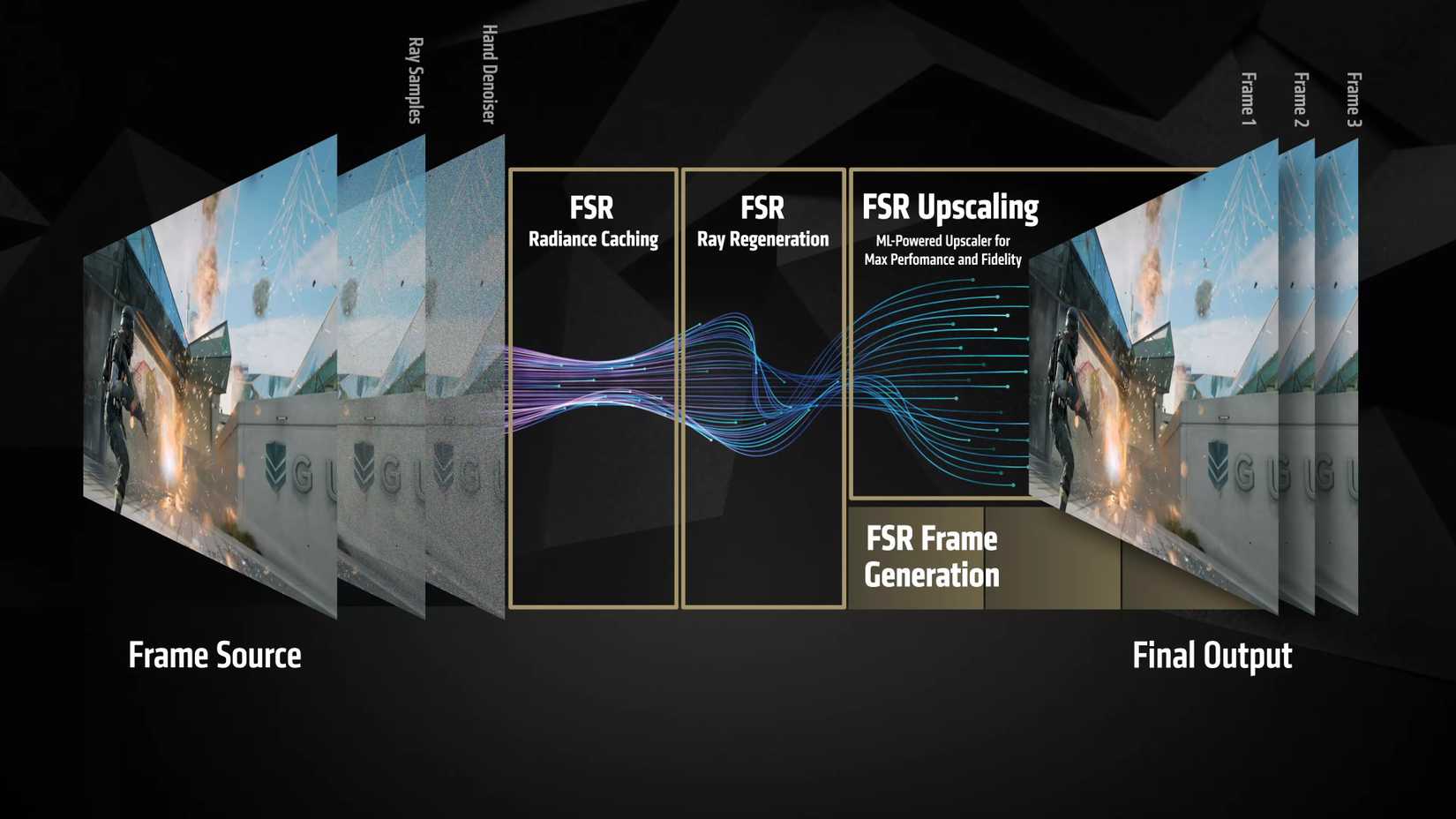

The raw performance boost was instantly noticeable, but the real reason I upgraded was to unlock all the new RDNA 4 and Redstone features: the FSR 4 upscaling algorithm, ML-based FSR Frame Generation, Ray Regeneration (AMD's answer to NVIDIA's Ray Reconstruction), and Radiance Caching, which promises to significantly improve real-time global illumination.

On paper, when the whole suite works together, it can deliver near-native image quality while more than tripling your FPS, and it also makes ray tracing far more feasible.


Months later, Redstone still feels like a paper launch dressed up as wide support

Compared to NVIDIA’s DLSS, AMD’s Redstone support feels almost nonexistent

When you open AMD's website and check which games support Redstone, you're met with a surprisingly long list. Look more closely, though, and you'll notice the fine print: the list includes any game that supports one or more Redstone technologies.

As long as a game supports FSR 3.1 or newer and runs on DirectX 12 (rather than Vulkan), AMD's Adrenalin software can automatically upgrade it to the more advanced ML-based FSR 4 upscaler, which is enough for the game to technically count as supporting Redstone.
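To make that two-condition rule concrete, here's a toy Python model of it. The `Game` class, the `can_upgrade_to_fsr4` function, and the game names are hypothetical illustrations only. They are not part of Adrenalin or any AMD API, and the model encodes nothing beyond the two conditions described above.

```python
from dataclasses import dataclass


@dataclass
class Game:
    """Hypothetical record for a game; not an AMD data structure."""
    name: str
    fsr_version: tuple[int, int]  # e.g. (3, 1) for FSR 3.1; (0, 0) if no FSR
    graphics_api: str             # "DX12" or "Vulkan"


def can_upgrade_to_fsr4(game: Game) -> bool:
    """Hypothetical check mirroring the article's rule:
    the game must ship FSR 3.1 or newer AND run on DirectX 12."""
    # Tuple comparison orders versions correctly: (3, 1) < (4, 0), (2, 2) < (3, 1)
    return game.fsr_version >= (3, 1) and game.graphics_api == "DX12"


if __name__ == "__main__":
    games = [
        Game("Example A", (3, 1), "DX12"),
        Game("Example B", (3, 1), "Vulkan"),
        Game("Example C", (2, 2), "DX12"),
    ]
    eligible = [g.name for g in games if can_upgrade_to_fsr4(g)]
    print(eligible)  # ['Example A']
```

Only the first placeholder game passes: the second fails the API check and the third ships an FSR version older than 3.1. Both conditions have to hold, which is why so many titles on the list qualify through the upscaler alone.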

The number of games that support Redstone’s Frame Generation (which is where the real magic happens) is much lower. I counted only 46, and several of these titles are competitive multiplayer games like Marvel Rivals, where most players will never enable frame gen because the extra input lag and artifacts outweigh any potential benefits of more frames.

Ray Regeneration, the ML-accelerated denoiser that's supposed to make ray tracing look better while improving performance, is available in only two games: Call of Duty: Black Ops 7 and Crimson Desert. It's a very modest offering, and chances are you won't see it in action until more games adopt the technology.

Now, compare that to DLSS Ray Reconstruction, which is available in a bunch of popular games that millions play, such as Cyberpunk 2077, Marvel’s Spider-Man 2, Hogwarts Legacy, and Avatar: Frontiers of Pandora.

Radiance Caching, which promises to finally make high frame rates practical with path tracing enabled, isn't available yet. To be fair, NVIDIA's equivalent, Neural Radiance Caching, is practically only available as a fun tech demo in Portal with RTX.

What disappoints me most is that many games released in the past few years, which could really use the performance bump, still don't support Redstone's upscaling and frame generation. Even Starfield, which was technically an AMD-supported game at launch, didn't receive any Redstone updates. Meanwhile, NVIDIA has already showcased DLSS 5 in Starfield (whether you'll actually want to use it is an entirely different topic).

A big part of the problem is that Redstone requires developers to rework their lighting engines to enable its technologies, while NVIDIA's Neural Rendering needs only some basic game data to output fully redesigned, AI-generated lighting and materials. Neural Rendering aside, we've already seen an impressively long list of games that support DLSS 4's Multi-Frame Generation and now DLSS 4.5's Dynamic Multi-Frame Generation, even titles released years ago, such as Diablo IV and Senua's Saga: Hellblade II Enhanced.

Raw raster isn’t enough anymore—AMD needs to compete in the features people actually use

AMD remains the value king, but what you gain in performance you lose in advanced features

When I bought AMD's current most powerful graphics card, I was honestly expecting more than just good raw rasterization performance for the money. I can't complain about the RX 9070 XT's performance, which roughly matches the much more expensive NVIDIA RTX 5070 Ti, but AMD needs to make it easier to bring its new technologies to older games.

I don't want to rely on OptiScaler to add FSR 4 upscaling to unsupported titles; I want AMD to provide the option directly in the drivers and polish the experience the way NVIDIA has polished DLSS. Even that is just the bare minimum, though. Maybe one day we'll also see an answer to Dynamic Multi-Frame Generation!
