You can run a local LLM on your computer that can do many of the same things as AI assistants such as ChatGPT, Gemini, and Claude. A local LLM can do a lot, but most still lag behind proprietary cloud-based LLMs in many ways. That’s why I use both.

Local LLMs can do a lot

You don’t need the most powerful hardware to use them

If you’ve never tried running a local LLM because you’re worried about lacking the necessary hardware, you may be pleasantly surprised. You don’t necessarily need an expensive GPU with a huge amount of VRAM. You can run smaller models on fairly modest hardware and still get reasonable results.

My M2 MacBook Air has only 8GB of RAM, for example, but I’m able to run models that can do useful tasks such as summarizing or rewriting text, analyzing uploaded images, and even helping with coding. The more powerful your computer, the larger the models you can run, but smaller models can still get the job done.

If you’re not sure what LLMs your computer can handle, there are some useful tools that can help. Utilities such as llm-checker and llmfit can scan your hardware and list models that are compatible. You can then choose the best model that your hardware can run.
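If you want a rough idea before installing anything, a back-of-the-envelope estimate is the parameter count multiplied by the bytes per weight, plus some headroom. The sketch below is not how llm-checker or llmfit work. The function names and the 20% overhead figure are assumptions for illustration, and real memory use also depends on the context length and the software running the model.

```python
def estimate_model_memory_gb(params_billions: float,
                             bits_per_weight: float,
                             overhead: float = 1.2) -> float:
    """Rough memory needed to load a model, in gigabytes.

    overhead is an assumed fudge factor for the runtime and context
    cache; it is not a measured value.
    """
    weight_bytes = params_billions * 1e9 * bits_per_weight / 8
    return weight_bytes * overhead / 1e9


def probably_fits(params_billions: float,
                  bits_per_weight: float,
                  available_gb: float,
                  overhead: float = 1.2) -> bool:
    """True if the estimate is within the memory you can spare.

    available_gb should be what is left after the operating system
    and your other apps, not the total installed RAM or VRAM.
    """
    needed = estimate_model_memory_gb(params_billions, bits_per_weight, overhead)
    return needed <= available_gb


if __name__ == "__main__":
    for size in (3, 7, 70):
        needed = estimate_model_memory_gb(size, bits_per_weight=4)
        print(f"{size}B model at 4-bit: about {needed:.1f} GB")
```

By this estimate, a 7-billion-parameter model quantized to 4 bits needs roughly 4GB, while a 70-billion-parameter model at the same precision needs more than 40GB.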


Local LLMs still can’t match the big players

It’s no surprise that the major models usually win

A local LLM can sometimes be faster for simple tasks, because requests never have to travel to a remote server and back. For large, complex tasks, however, local LLMs currently can’t match the speed of a proprietary cloud-based LLM such as ChatGPT, Claude, or Gemini, no matter how powerful your hardware is. Even if you have the most powerful GPU you can get, these companies can spread their models over clusters of thousands of GPUs working together, effectively giving them terabytes of pooled VRAM.

This means that a proprietary LLM will often be able to outperform your local LLM. While your local LLM may be a sports car, proprietary LLMs are Formula 1 cars. These companies have spent billions on the infrastructure behind their platforms, and in a race, there’s only going to be one winner.

You can have the best of both worlds

Use a local LLM alongside ChatGPT, Claude, or Gemini

That’s not to say that local LLMs don’t have their place. Even if a local LLM can’t quite match the performance of a proprietary model, it can still perform many of the same tasks well.

If you want to summarize documents, for example, this is something that a good local LLM can often do well, as long as the text fits in the local LLM’s context window. Local LLMs are also very good for creative writing tasks, extracting data from images, and standard logic or math problems.
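To check whether a document is likely to fit, you can estimate its token count and compare it with the model’s context window. This is a minimal sketch that assumes roughly four characters per token, a common rule of thumb for English text. The real count depends on the model’s tokenizer, and the function names and the 512-token output reserve are hypothetical.

```python
import math


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Very rough token estimate; real tokenizers will differ."""
    return math.ceil(len(text) / chars_per_token)


def fits_context(text: str, context_window: int,
                 reserved_for_output: int = 512) -> bool:
    """True if the text plus room for the model's reply fits the window."""
    return estimate_tokens(text) + reserved_for_output <= context_window


def split_into_chunks(text: str, context_window: int,
                      reserved_for_output: int = 512,
                      chars_per_token: float = 4.0) -> list[str]:
    """Split text into pieces that should each fit in the window.

    Splits on raw character counts, so a chunk may end mid-sentence.
    """
    budget_chars = int((context_window - reserved_for_output) * chars_per_token)
    if budget_chars <= 0:
        raise ValueError("context window is too small for the reserved output")
    return [text[i:i + budget_chars] for i in range(0, len(text), budget_chars)]
```

If the text doesn’t fit, one option is to summarize each chunk separately and then summarize the summaries, although some detail is lost along the way.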

Where proprietary LLMs win is in more complex tasks. If you want a cross-document analysis of 100 different documents, a local LLM may not be able to handle the context, but some proprietary LLMs can. While local LLMs can do logic and math, proprietary LLMs can usually handle much more complex tasks.

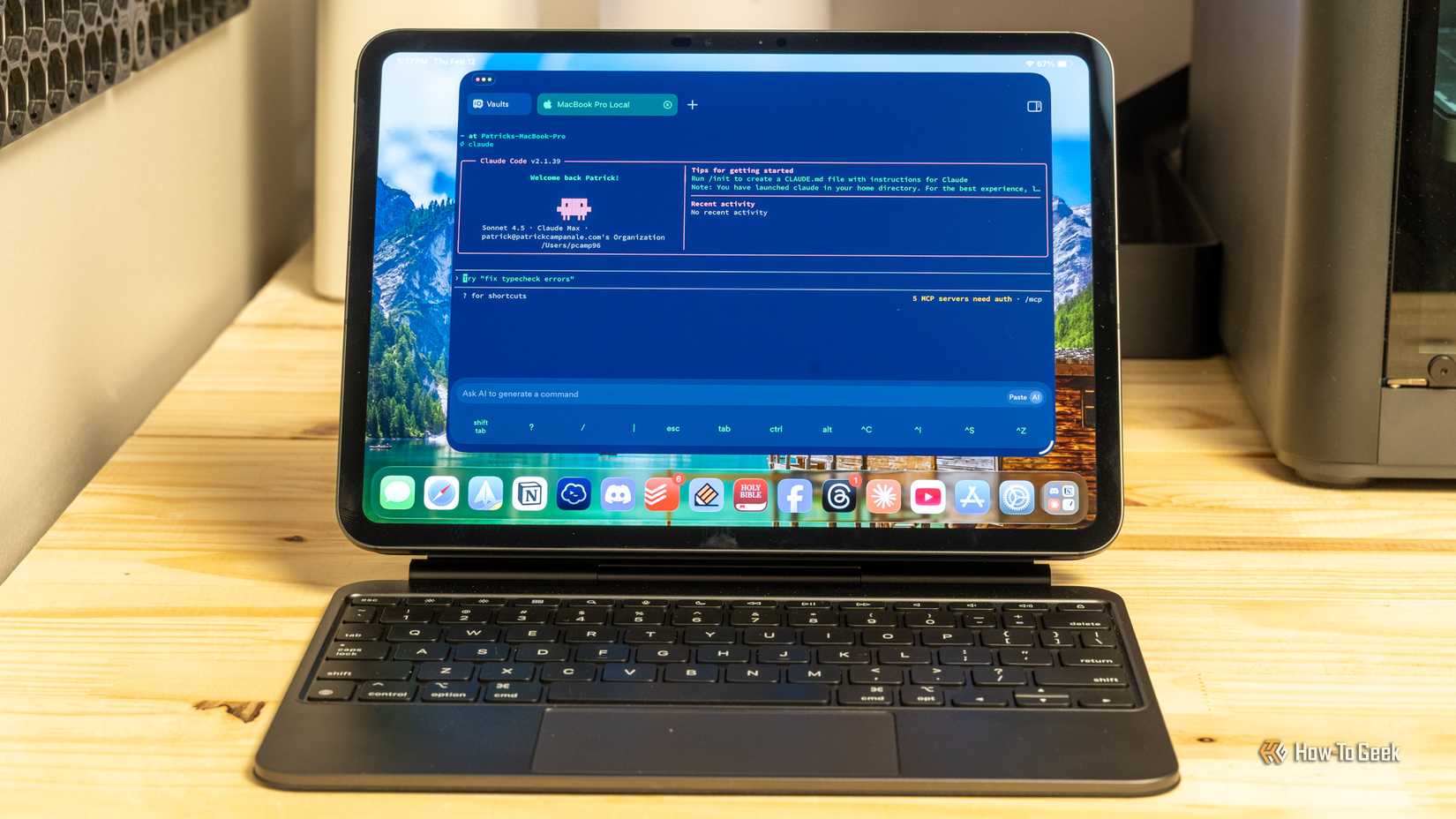

Some local LLMs can handle coding tasks almost as well as proprietary models. Proprietary LLMs tend to pull ahead when you need to do something more involved, such as redesigning an app’s entire architecture.

While a local LLM can’t yet match a proprietary LLM at complex tasks, there’s a lot you can do locally. That’s why I use both a local LLM and cloud-based proprietary models and choose which one to use based on the task I want to complete.

The benefits of local LLMs

Keep your data local and private

If proprietary LLMs are superior, why bother using a local LLM at all? There are a number of reasons why using a local LLM can be a better option.

One of the biggest benefits of using a local LLM is privacy. When you use a proprietary LLM, everything you type and every file you upload is sent to the provider’s servers. If you don’t want to share private information, a local LLM lets you avoid sending that data to a third party.

For example, I used a local LLM to find sensitive information, such as account numbers, people’s names, and addresses, in my bank statements. I was then able to redact this information before I uploaded the files to a proprietary LLM that could analyze my spending habits based on the statements.
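The redaction step itself can be simple once the local model has listed the sensitive strings. The sketch below is one possible approach, not necessarily the exact workflow described above. The `redact` function and the `[REDACTED]` placeholder are hypothetical, and the list of sensitive items is assumed to come from the local LLM’s output.

```python
import re


def redact(text: str, sensitive_items: list[str],
           placeholder: str = "[REDACTED]") -> str:
    """Replace every occurrence of each sensitive string with a placeholder."""
    # Drop empty entries and duplicates, then match longer strings first
    # so a short item can't split a longer one that contains it.
    items = sorted({s for s in sensitive_items if s.strip()},
                   key=len, reverse=True)
    if not items:
        return text
    pattern = re.compile("|".join(re.escape(s) for s in items))
    return pattern.sub(placeholder, text)


if __name__ == "__main__":
    statement = "Account 12345678 belongs to Jane Doe, 1 Example Street."
    flagged = ["12345678", "Jane Doe", "1 Example Street"]  # made-up data
    print(redact(statement, flagged))
```

It’s worth reading through the result before uploading it, since the model may miss items or the same detail may appear in a different format.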

Local LLMs can also be useful for cases where proprietary LLMs are overly strict and refuse to give answers. You can find local models that don’t include such strict filters. For example, if you’re using an LLM for a role-playing game, a proprietary model might refuse to describe a sword fight, while some local models would have no such qualms.

I also use a local LLM for tasks that don’t require the power of a proprietary LLM. I have a Claude subscription, which comes with usage limits. Rather than wasting this usage on simple tasks, I can use the local LLM instead and save my Claude usage for more complex stuff, such as coding.
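The decision about which model to use can be written down as a few simple rules. This is only an illustration of the reasoning in this article. The `Task` fields and the `choose_model` function are hypothetical, and deciding what counts as “complex” is a judgment call.

```python
from dataclasses import dataclass


@dataclass
class Task:
    contains_private_data: bool  # anything you don't want leaving your machine
    is_complex: bool             # e.g. large refactors, multi-document analysis
    fits_local_context: bool     # input fits the local model's context window


def choose_model(task: Task) -> str:
    """Return "local" or "cloud" based on the rules of thumb above."""
    if task.contains_private_data:
        # Keep private data on your own machine (or redact it locally first).
        return "local"
    if task.is_complex or not task.fits_local_context:
        # Save the subscription's usage limits for work that needs the power.
        return "cloud"
    return "local"
```

For example, a quick summary of a short, non-sensitive document goes to the local model, while redesigning an app’s architecture goes to the cloud model.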

A local LLM can be a helpful tool

Local LLMs may not be able to match the power of proprietary models just yet, but there are multiple ways you can use them. Combining a local LLM with a cloud-based model such as Claude or ChatGPT gives you the best of both worlds: privacy when you want it and power when you need it.