A cyberattack on Canvas could not have come at a worse time. The learning platform, used by schools and universities for assignments, exams, grades, lecture materials, and class communication, went down during finals week, leaving students and instructors scrambling for alternatives.

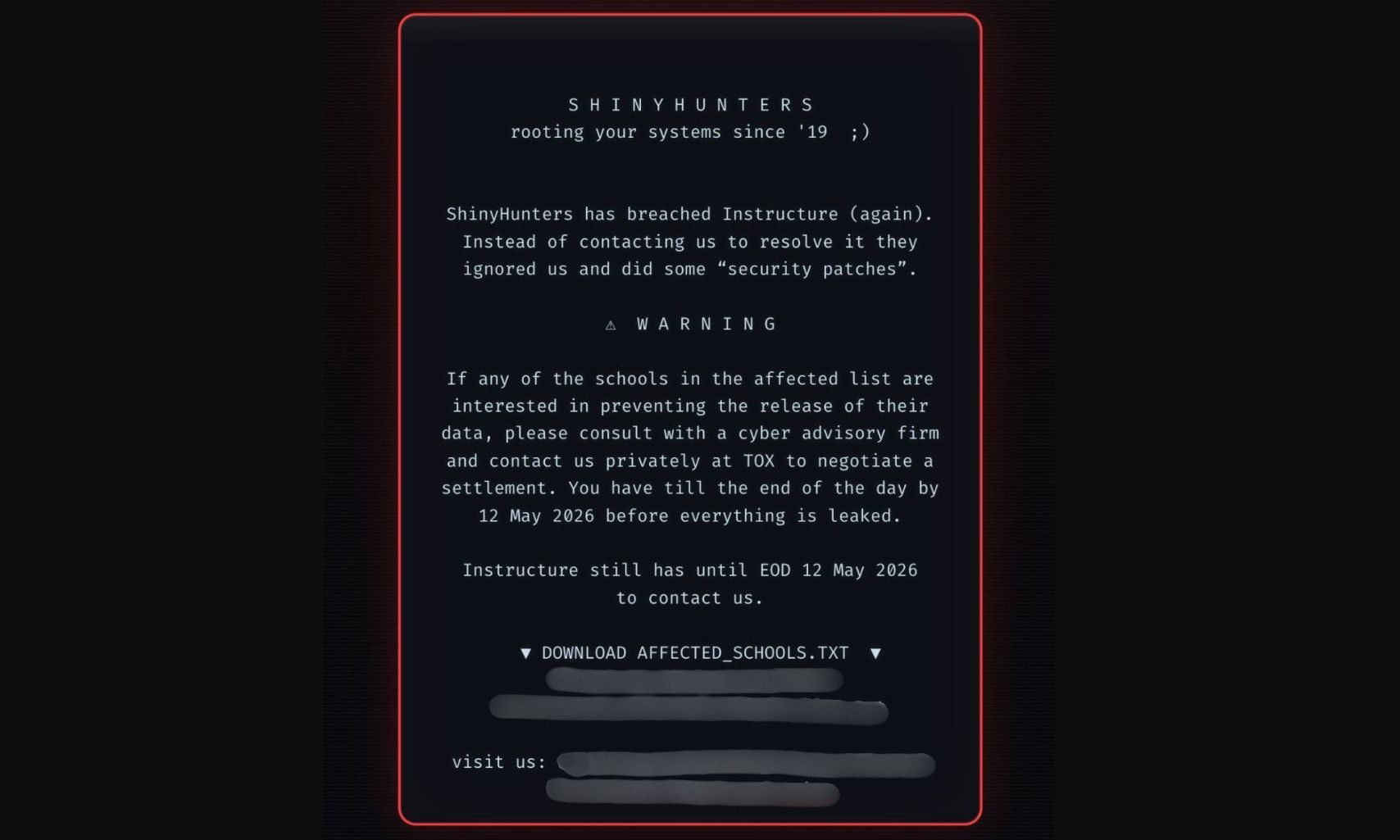

The incident has been linked to ShinyHunters, a hacking group known for data theft and extortion. According to BleepingComputer, Canvas login portals at hundreds of institutions were defaced with a ransom-style message warning that stolen student data would be leaked unless the attackers were contacted. The group claimed to have obtained data tied to millions of students, teachers, and staff across thousands of schools.

What went wrong inside Canvas?

Instructure, the company behind Canvas, said hackers exploited an issue related to its Free-for-Teacher accounts, forcing it to temporarily shut down the platform to investigate. The outage caused major disruption during finals season, as students and teachers were suddenly locked out of the platform.

During the initial outage, the Canvas login screen reportedly displayed a message from ShinyHunters claiming it had breached Instructure “again” and warning schools to make contact before a May 12, 2026, deadline to prevent stolen data from being published. The message also included a list of affected schools, making it clear the attack was part of an extortion attempt.

Why did this hit students so hard?

The outage led some institutions to postpone exams, while others asked faculty to be flexible with deadlines and course requirements. For students already in the middle of finals, it added stress around study materials, submissions, and exam schedules.

Although Instructure has claimed that no passwords or financial details were compromised in this attack, the hackers did gain access to millions of user names, email addresses, student IDs, and internal messages. This information could easily be used for phishing attacks that mention real classes, schools, or instructors.

Haven’t we seen ShinyHunters before?

ShinyHunters has been connected to several major breaches in the past, including incidents involving Ticketmaster and Rockstar. Even Instructure has had previous run-ins with the hacker group. In September 2025, ShinyHunters targeted Instructure’s Salesforce environment through social engineering to access business systems, but Instructure said no Canvas product data was accessed and that the exposed information was mainly public business contact details.

What now?

Canvas coming back online does not end the problem. Hackers are still holding data from millions of users for ransom, which means the risk remains. That said, ShinyHunters has reportedly removed Instructure from its “Pay or Leak” portal, suggesting negotiations may be underway.

The attack should be a wake-up call for every school that relies on a few digital platforms to run classes, exams, and communication. These tools are now essential to how schools operate, which means they need stronger cybersecurity to protect student data and backup plans in case another outage or attack happens.