For years, Apple has been rumored to be bringing native touch functionality to the Mac. A new display gives us an early look at what that may be like, for better and worse.

Recently, I got an early look at the Aspekt Touch, a new monitor from Alogic. This isn’t the first touchscreen monitor from the brand, but the tilt functionality combined with macOS Tahoe offers an early impression of how a first-party solution could be implemented.

Feel free to check out Mike’s initial hands-on of the Aspekt Touch, but here’s the high-level overview. It’s a 32-inch 4K display that can also house a Mac mini in the bottom of the stand, and it can tilt nearly flat to be used more comfortably as a touchscreen.

A skeptic, early

I’m conflicted about the idea of a touchscreen Mac. I’ve been a detractor for ages, agreeing with Apple’s official stance that the iPad is the best touchscreen computer.

By and large, I haven’t felt the need for a touch interface on my Mac. Recently, though, that’s started to change.

I’ve switched from my iPad and the Magic Keyboard over to my Mac and found myself inadvertently poking at the display. If the iPad can get trackpad functionality, why can’t we get supplemental touch functionality on the Mac?

After a lot of thought, I think what matters is the approach. Based on current rumors, that approach could be what sets it apart.

The iPad is designed as touch first, with cursor support added for certain users when it makes sense. The Mac, by contrast, would still be cursor first, with touch added as a supplemental interaction method.

During Windows’ push toward touch-first design, the UI was watered down to accommodate it. The result was a hodgepodge interface that felt smashed together and inconsistent, and it didn’t seem to work well.

As long as macOS remains macOS, I think there could be some potential here, and it has been fun to explore with the Aspekt Touch.

Aspekt Touch monitor brings touch to macOS, with caveats

Alogic provides a simple driver package to add touch support to the Mac. You download the installer and grant it a few permissions, and you’re ready to go.

Once done, I could just start tapping away on the large 32-inch screen. I could scroll through Safari, zoom by pinching in and out, and even initiate a right-click.

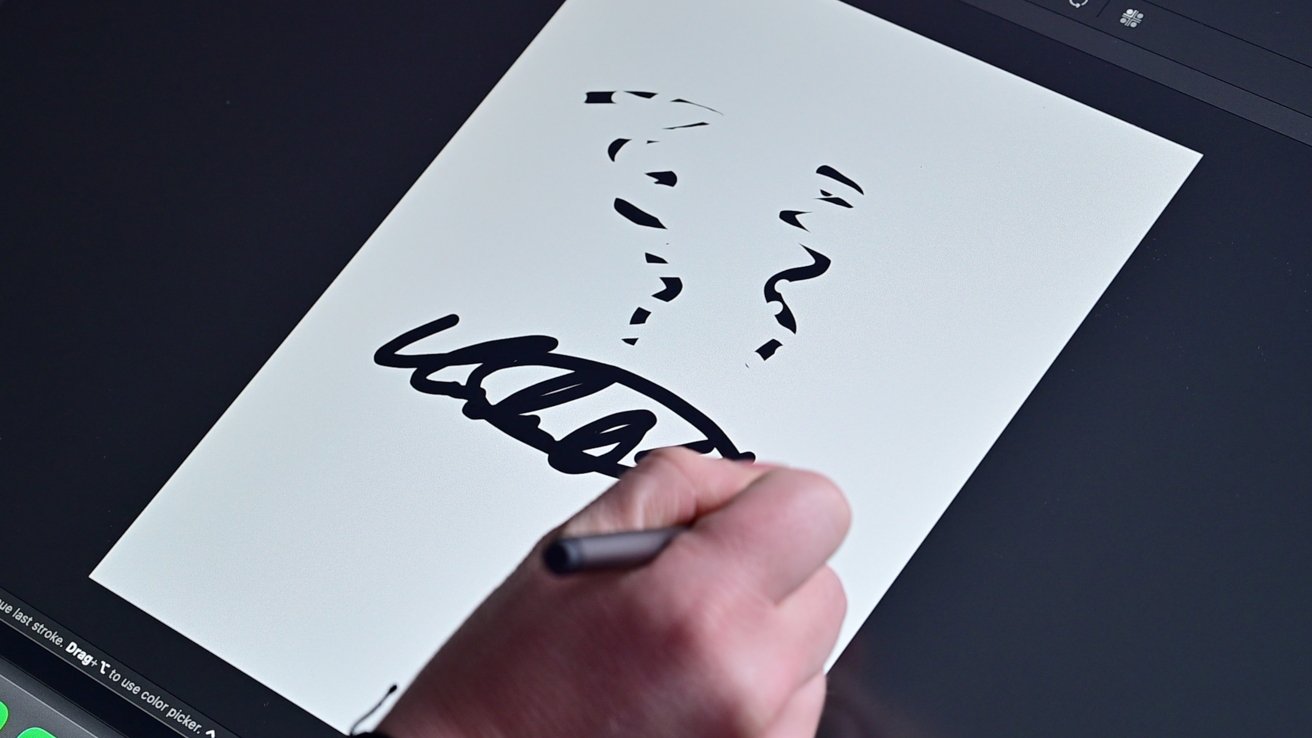

Using the stylus was a solid experience, too. Any capacitive stylus will work, but Alogic has its own with some extra features.

The stylus supports hover effects, so as I work in Affinity, I can see tooltips appear over the different tools. When you flip the stylus around, the other end works as an eraser.

The stylus supports varying degrees of pressure, and I didn’t seem to have any issues with palm rejection.

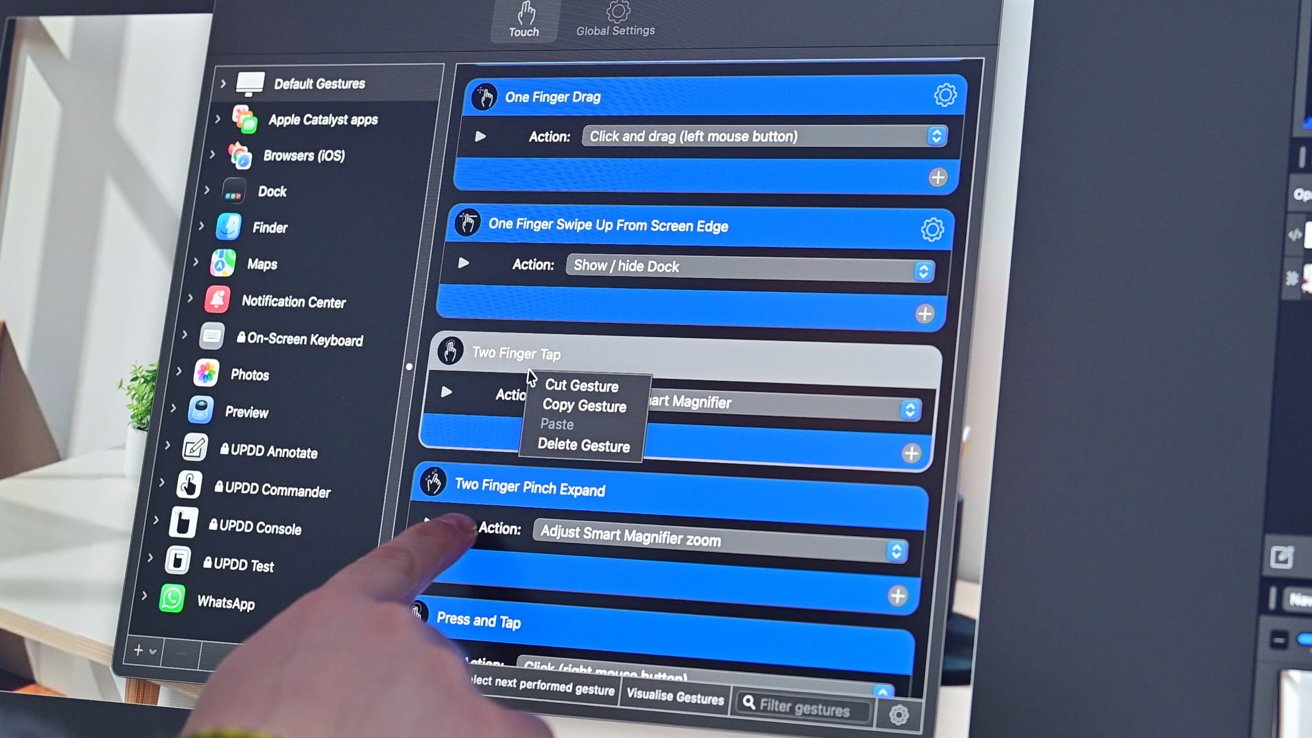

When you dig into the Alogic app, you can fine-tune your experience. You can customize the touch effects on a per-app basis to get very granular in how you interact with macOS.

Overall, it’s a solid experience for something added through third-party software. However, a native experience would need to go even further.

Liquid Glass arrives in macOS Tahoe

The problem with the non-native experience is that it’s cobbled together piecemeal from different existing macOS features. There’s no native touch layer that works universally, and many UI elements aren’t designed for touch.

For example, trying to zoom often just triggers the magnifier from the accessibility settings instead. This gets the job done, sure, but not in the way I expected.

I also struggled to tap certain elements, like when editing in Affinity or Pixelmator. The sheer number and size of items made it hard to tap the exact one, especially if I was trying to move with any degree of urgency.

My biggest takeaway was how great Liquid Glass was. The UI elements that were skinned with Liquid Glass were easier to tap and felt more natural.

This offers an early glimpse of how touch functionality might be implemented. Liquid Glass may be divisive, but it suggests a more consistent cross-platform experience, and that includes touch support.

I’d also love to see native support for Apple Pencil. I don’t draw, but I do edit a lot of photos, and Apple Pencil is great for getting that done on iPad.

The Alogic stylus has noticeable lag, which is hardly ideal. I’d like to think that an Apple first-party implementation wouldn’t have that issue, just as it doesn’t on iPad.

Other Apple Pencil features could also debut, like the squeeze gesture to open up a tool palette or maybe something specific for Mac. I’d love it if I could squeeze the Apple Pencil Pro and see a collection of my favorite shortcuts to quickly run.

Probably not a 2026 experience

My biggest concern is that if touch is added, it needs to complement macOS. It shouldn’t try to replace what’s already there.

At the moment, it appears the first touchscreen Mac will be the redesigned M6 OLED MacBook Pro. Clearly, a keyboard and trackpad will be front and center in that experience.

That means we won’t be getting an iPad form factor running macOS, at least not any time soon. I think that’s a good thing, but opinions vary amongst the AppleInsider staff, given that the Magic Keyboard with trackpad exists.

We’ll likely have to wait a bit longer to see, though, as recent rumors say Apple’s redesigned MacBook Pro, first slated for the end of 2026, is now likely to ship in early 2027. The delay reportedly isn’t due to development problems, but rather the component shortages plaguing the industry.

I’m still a bit skeptical about whether macOS needs touch support. After using it for the last couple of weeks, though, I’m leaning more toward “for” than “against” at the moment.

Maybe we’ll get a clearer picture come WWDC with the preview of macOS 27. We’ll find out soon.