The ESP32 is a hobbyist’s dream that comes in a broad range of configurations. It can be hard to know where to begin, but there are some simple rules you can follow to match your intended use to the classic ESP32, a C3, C6, or S3, or another variant.

ESP32 variants explained

The “stock” ESP32 was released in 2016 as a follow-up to the ESP8266. It’s a microcontroller onto which firmware can be flashed to perform a variety of tasks. These boards are commonly found in smart home sensors (both DIY and retail), attached to small displays and E-Ink panels as dashboards, used to power retro handheld consoles, and in other projects where size and power efficiency are more important than raw performance.

This original model features Wi-Fi, Bluetooth 4.2, 520KiB of SRAM, 34 GPIO pins, a temperature sensor, and 448KiB of ROM. It was something of a revolution when it arrived, but the ESP32 family has since seen a flurry of new arrivals with a few models that really stand out.

“KiB” refers to “kibibyte,” which is 1,024 bytes, slightly more than a decimal kilobyte (KB) of 1,000 bytes.
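As a quick check of the arithmetic, the short Python sketch below converts the two memory figures quoted for the original ESP32 from KiB to bytes. The helper name `kib_to_bytes` is made up for this illustration.

```python
BYTES_PER_KIB = 1024  # one kibibyte is 2**10 bytes


def kib_to_bytes(kib: int) -> int:
    """Convert a size in kibibytes (KiB) to bytes."""
    return kib * BYTES_PER_KIB


# The two memory figures quoted above for the original ESP32.
for kib in (520, 448):
    print(f"{kib} KiB = {kib_to_bytes(kib):,} bytes")
# prints:
# 520 KiB = 532,480 bytes
# 448 KiB = 458,752 bytes
```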

Consider the C3, S3, and C6 first

The ESP32-C3 stands out for its ultra-low-power design. It has only a single-core processor that runs at 160MHz (down from 240MHz on the original), and fewer GPIO pins than the base model. But its efficiency makes it perfect for battery-powered projects, like sensors that won’t be plugged into the wall or mobile handheld devices.

In contrast, the ESP32-S3 features a dual-core processor, a whopping 45 GPIO pins, support for more intensive workloads like machine learning and AI projects, and camera support. It’s one of the most feature-packed versions of the chip, but that comes at the cost of the C3’s unbeatable power efficiency, and it’s a little more expensive than its siblings.

For smart home use, consider the ESP32-C6. This chip became available in 2023 and introduces Zigbee and Thread support, perfect for building sensors and other devices that communicate over low-power mesh networks. The C5, C61, and H2 also feature Zigbee and Thread support (with the H2 being a real outlier that lacks Wi-Fi altogether).

C2 and S2 offer cost savings

The C2 and S2 variants are lower-cost versions of the C3 and S3 boards, respectively.

The C2 has a slower clock speed (120MHz), less SRAM and ROM (272KiB and 128KiB respectively), only 20 GPIO pins, and no temperature sensor. The S2 cuts the S3 down to a single-core 240MHz CPU with 320KiB of SRAM and 128KiB of ROM, and it lacks Bluetooth altogether.

Predictably, these boards should cost even less than their already cheap “full-fat” counterparts. They could be worth considering if you’re building a whole fleet of devices that suit their specs and want to keep costs as low as possible.

The P4 is a powerful outlier

If you need as much power as an ESP32 can get, the P4 is the one to go for. It packs a 400MHz dual-core processor, more SRAM and ROM than most other variants, support for Ethernet but no built-in Wi-Fi, and improved audio capabilities. It’s often favored in projects that use Power-over-Ethernet.

Many of these chips end up in pricier embedded offerings, like the Seeed Studio reTerminal D1001, which includes a separate ESP32-C6 for wireless connectivity.
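The rules of thumb from the sections above can be sketched as a small Python helper. This is only an illustration: the `Project` fields, the `suggest_variant` name, and the order in which the rules are checked are assumptions made for this sketch, not anything published by Espressif.

```python
from dataclasses import dataclass


@dataclass
class Project:
    """Hypothetical description of what a project needs."""
    battery_powered: bool = False
    needs_camera_or_ml: bool = False
    needs_zigbee_or_thread: bool = False
    needs_wifi: bool = True
    needs_max_performance: bool = False
    lowest_cost: bool = False


def suggest_variant(p: Project) -> str:
    """Suggest a starting-point variant from the article's rules of thumb.

    The priority order of the checks is an assumption of this sketch.
    """
    if p.needs_max_performance:
        return "ESP32-P4"  # most powerful option, but no built-in Wi-Fi
    if p.needs_zigbee_or_thread:
        # The H2 lacks Wi-Fi, so only suggest it when Wi-Fi is not required.
        return "ESP32-C6" if p.needs_wifi else "ESP32-H2"
    if p.needs_camera_or_ml:
        return "ESP32-S3"  # dual-core, camera and ML support
    if p.battery_powered or p.lowest_cost:
        # The C2 is the cut-down, cheaper sibling of the efficient C3.
        return "ESP32-C2" if p.lowest_cost else "ESP32-C3"
    return "ESP32-S3"  # general-purpose default


print(suggest_variant(Project(battery_powered=True)))         # ESP32-C3
print(suggest_variant(Project(needs_zigbee_or_thread=True)))  # ESP32-C6
```

Treat the result as a shortlist entry rather than a verdict; pin counts, memory, and board-level features still need checking against the project.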

Picking official or third-party boards

You might be tempted to stick to “official” ESP32 offerings made by Espressif Systems, the company that designs and manufactures the chip. Espressif produces reference boards that pair the chip with a circuit board and a USB connector, but it also releases the schematics and other materials that other companies need to copy its work and build upon it.

The company also sells the core ESP32 chips directly to other companies, which is a win for everyone. When you choose a third-party ESP32 development board, you’re not buying a cut-price imitation. In fact, you’ll usually pay a bit more than you would for an “official” development board, but you’ll get some nice bonus features along the way.

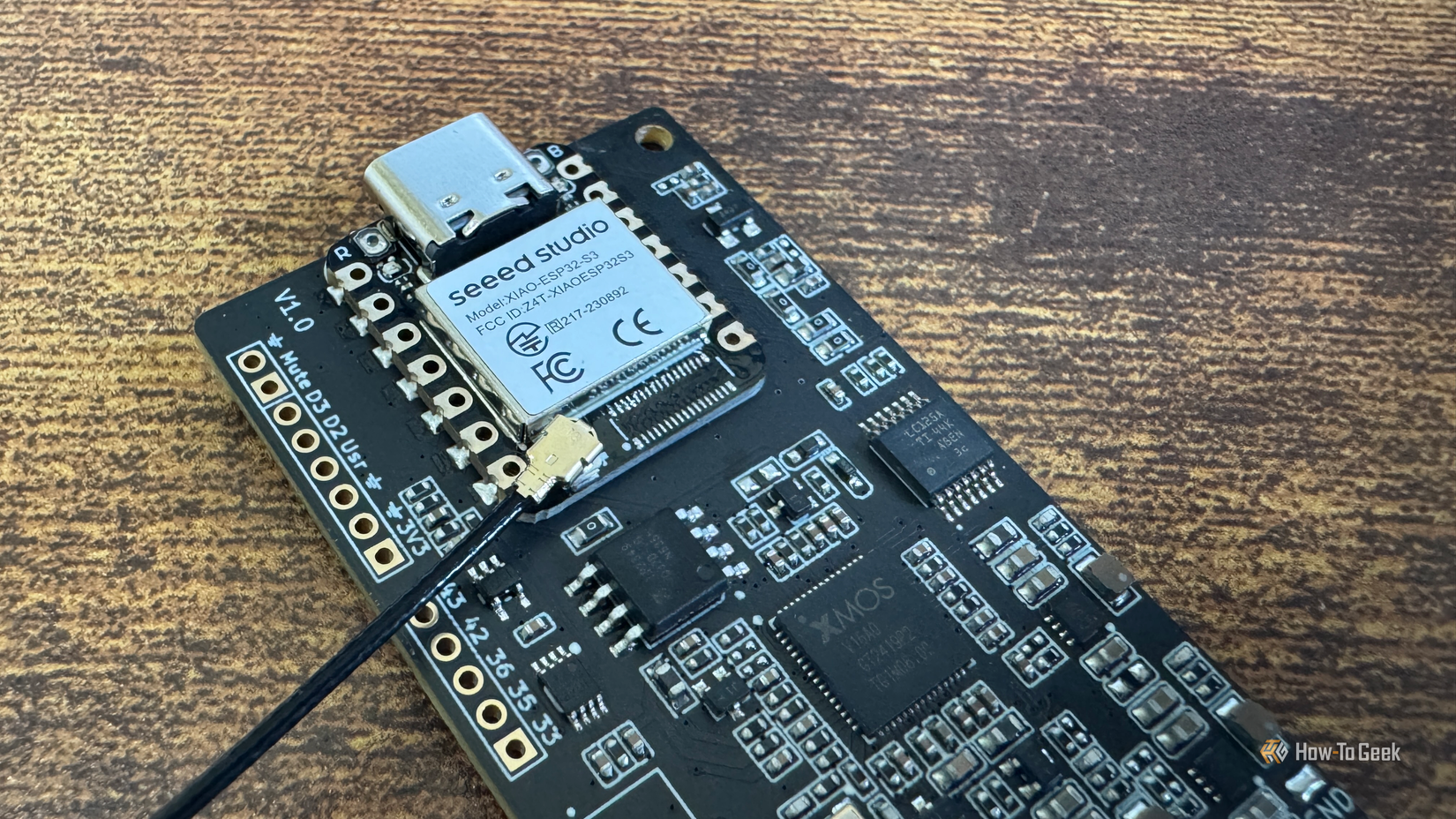

These improvements include USB-C ports (instead of microUSB), better antennas for improved wireless performance, charging circuitry, and smaller, often purpose-built form factors. For example, Seeed Studio’s XIAO lineup is popular among How-To Geek writers since it’s smaller than the reference and ditches microUSB for the superior USB-C.

Confused? Match the board with the project

If you’re buying a board for a specific project, stick to the brief. The S3 is something of a jack-of-all-trades, but when you scale a project up (like deploying a house full of Bluetooth proxies for presence detection), the savings from a cheaper chip can really add up.

The same applies to third-party boards. The cost soon adds up when you start to spend a bit more on “nicer” development boards, but that cost is often worth it, particularly if you want a specific form factor like an ESP32 and a touchscreen display in one.
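To see how quickly a small per-board difference grows across a fleet, here is a minimal Python sketch. The prices and quantity are hypothetical placeholders chosen for the arithmetic, not quotes from any seller, and `extra_fleet_cost` is a name invented for this example.

```python
def extra_fleet_cost(cheap_price: float, nicer_price: float, quantity: int) -> float:
    """Return how much more a fleet costs when built on the pricier board."""
    return (nicer_price - cheap_price) * quantity


# Hypothetical prices in US dollars; real prices vary by seller and board.
CHEAP_BOARD = 4.00
NICER_BOARD = 7.00
QUANTITY = 10

extra = extra_fleet_cost(CHEAP_BOARD, NICER_BOARD, QUANTITY)
print(f"Extra cost across {QUANTITY} devices: ${extra:.2f}")
# prints: Extra cost across 10 devices: $30.00
```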

Brand: MakerHawk

Operating System: Meshtastic

This ESP32 kit includes everything you need for connecting to your local Meshtastic network, or any other LoRa-based tech project. There’s an LED display, a 1100mAh battery, and multiple antennas.

Alternatively, buy the lot

The fact of the matter is that ESP32 boards are cheap, and there are usually only a couple of dollars separating the C2 and S2 from the C6 and S3. The outlier here is the P4, which is often found in much pricier configurations.

If you’re keen to experiment, why not buy a few of each and see where the wind takes you? You can start with some ESP32 projects that take less than an hour.