Well, it’s time for some more fun homelab projects to try out this weekend! Today, I’m showing you how to replace Google Analytics with your own self-hosted tool, set up a better homelab dashboard, and even build out your own wiki (because your homelab really does need one).

Self-host your own private Google Analytics alternative with Umami

Don’t let Google control your website analytics

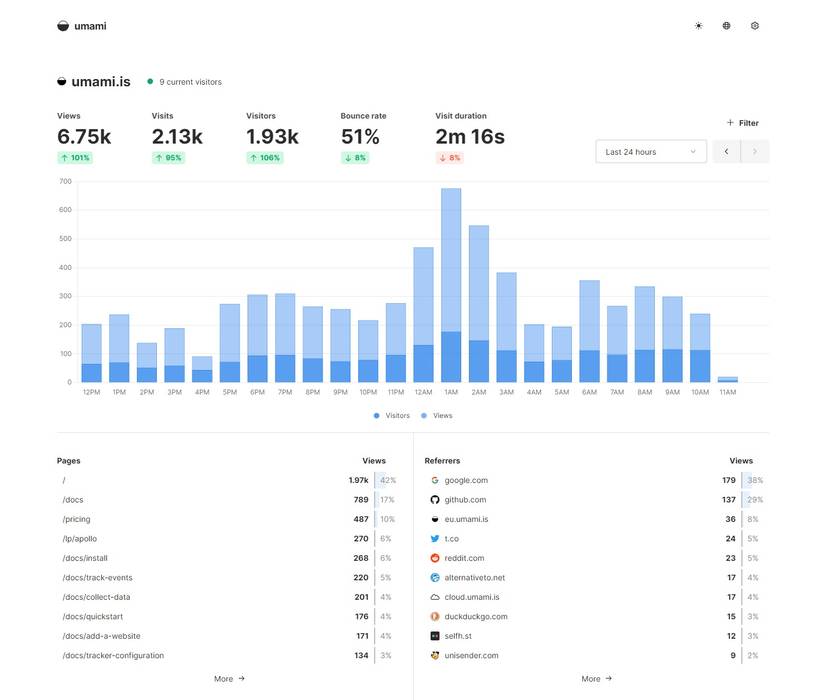

Whether you’re sick and tired of Google controlling everything, or you just want a simpler analytics interface, Umami is definitely worth checking out. I’ve personally used both the self-hosted and hosted versions of Umami, and I love both, but self-hosting gives you the most control.

With Umami, you’re able to track all the relevant data you could want for a website. From page view count to visitor count, sources, environments (operating system), locations, regions, and much more, Umami really does deliver everything you need in a cleaner interface than Google Analytics.

One of my favorite features of Umami is the traffic system. It shows you a seven-day view, with each day broken down into its 24 hours. Each hour has a dot sized by how many visitors you got in that hour. A larger dot means more visitors; no dot means no visitors. Google Analytics can technically show the same thing, but it’s not very easy to set up.
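To make the idea concrete, here is a minimal Python sketch of how a seven-day, 24-hour grid like that could be built from raw visit timestamps. This is not Umami’s actual code; the `hourly_grid` and `dot_size` names, the sample timestamps, and the maximum dot radius are all assumptions made for illustration.

```python
from collections import Counter
from datetime import datetime, timedelta


def hourly_grid(visits, week_start):
    """Count visits per (day, hour) cell for the 7 days after week_start.

    Assumes week_start falls on midnight so that day and hour line up.
    """
    counts = Counter()
    week_end = week_start + timedelta(days=7)
    for ts in visits:
        if week_start <= ts < week_end:
            day = (ts - week_start).days
            counts[(day, ts.hour)] += 1
    # 7 rows (days), each with 24 columns (hours)
    return [[counts[(d, h)] for h in range(24)] for d in range(7)]


def dot_size(count, busiest, max_radius=10):
    """Scale a cell's count to a dot radius; zero visitors means no dot."""
    if count == 0 or busiest == 0:
        return 0
    return max(1, round(max_radius * count / busiest))


# Hypothetical sample data: two visits in one hour, one visit two days later.
week_start = datetime(2024, 1, 1)
visits = [
    datetime(2024, 1, 1, 9, 15),
    datetime(2024, 1, 1, 9, 40),
    datetime(2024, 1, 3, 22, 5),
]
grid = hourly_grid(visits, week_start)
busiest = max(max(row) for row in grid)
```

Each cell in `grid` is one hour of one day, and `dot_size` turns that count into a radius relative to the busiest hour of the week, so quiet hours get small dots and empty hours get none.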

Umami is super simple to run, and a single instance can handle analytics tracking for multiple websites. You can install Umami in a number of ways, including through Docker Compose (with a sample file provided in the Umami GitHub repository) or by installing it through npm, with instructions provided in the repository’s README.

Build a homepage for your homelab with Dashy

Stop remembering IP addresses and ports

Once your homelab starts to grow beyond a few services or servers, it can become difficult to remember where everything is located. At this point, I have over half a dozen servers in my homelab and probably 50 or more services running, and remembering how to access each one can be a nightmare. That’s where a homepage dashboard comes in, and this time around I’m specifically talking about Dashy.

I’ve used a lot of homelab dashboards over the years, but I’ve never really found one that I loved. Dashy might change that for me. The UI is simple and intuitive, allowing for web-based editing of bookmarks. My last homepage dashboard, Homepage, required manual editing of a YAML file, which sounds simple (and is), but it meant I had to SSH into the server and hand-write the code to add (or remove) an entry.

With Dashy, it’s all done through the web interface. Installing Dashy is simple and can be done through Docker with ease. However, I decided to go a completely different route for hosting my Dashy instance: Vercel. I already use Vercel for a few production websites, and hosting Dashy on Vercel means that my dashboard will stay up even if my homelab goes down.

Why is that important for me? Well, if I host my dashboard on a specific server, and that server is offline for any reason, then the map of my entire homelab is also offline. High availability helps with this, but hosting it off-site is even better. There are some downsides to hosting it on Vercel, but Dashy’s docs outline all the pros and cons for each deployment option.

Really, host Dashy however you want. Just make sure you do host it somewhere so you can stop memorizing the IP addresses and ports where your services live.

Deploy your own self-hosted wiki and knowledge base with Outline

Your homelab needs its own wiki

While a homelab dashboard is great for quick links to services, there’s a lot more that goes into properly documenting a homelab than some bookmarks, and that’s where a proper knowledge base or wiki comes in. Outline is a great way to run your homelab’s knowledge base, and it deploys easily through Docker.

Why does your homelab need a knowledge base or wiki? Well, it’s the perfect place to document things like which servers get which IP addresses. As my homelab has grown, I’ve gone from just one reserved IP address to dozens. I now have blocks of 10 or 20 addresses that are reserved for VMs on specific machines.

I also keep all of my control panels in blocks. For example, all of my Proxmox control panels sit on the .11-.20 addresses. Likewise, .21-.30 are reserved for storage servers. I have .31-.50 set aside for VMs running on a specific server, and so on.
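An address plan like this is also easy to keep in a form a script can check. Here’s a minimal Python sketch, assuming a hypothetical 192.168.1.0/24 subnet (only the last-octet ranges come from the plan above; the subnet, the `BLOCKS` table, and the `role_for` helper are made up for illustration).

```python
import ipaddress

# Hypothetical subnet: only the last octet of each block is given above.
SUBNET = ipaddress.ip_network("192.168.1.0/24")

# (first host, last host, role) for each reserved block.
BLOCKS = [
    (11, 20, "Proxmox control panels"),
    (21, 30, "storage servers"),
    (31, 50, "VMs on a specific server"),
]


def role_for(address):
    """Return the role of the reserved block that an address falls into."""
    ip = ipaddress.ip_address(address)
    if ip not in SUBNET:
        raise ValueError(f"{address} is outside {SUBNET}")
    # Offset from the network address, i.e. the last octet in a /24.
    host = int(ip) - int(SUBNET.network_address)
    for first, last, role in BLOCKS:
        if first <= host <= last:
            return role
    return "unassigned"
```

Calling `role_for("192.168.1.15")` returns the Proxmox block, and anything outside the listed ranges comes back as `"unassigned"`, which makes it a quick way to check whether a new reservation collides with an existing block.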

I’ve memorized most of this by now, but I still have to reference my homelab documentation sometimes if I’m dealing with an edge case. Another time when I access my homelab documentation is when I have to re-deploy a service that was difficult to deploy in the first place. I typically try to document things as I go if it takes a while to configure, so if I ever have to reconfigure, it’s not as hard the second time.

The uses for a homelab wiki are endless, and you’ll find quite a few ways to use it that I’ve not even described here. You could also use Outline as a family knowledge base, storing important contacts, addresses, and more there for the entire family to access.

If you’re still writing things down on paper in your homelab, then give it a rest and deploy Outline today.

Stop putting off starting your homelab

Running a homelab has been one of the best things I’ve done in my adult life. I got started with this hobby as a teenager without realizing it, but once I became an adult, it became evident that I really loved homelabbing. Spinning up servers, running services, learning networking: it’s all great fun.

I’ve learned so much that I’m now able to apply it in both my personal and professional life. I never dreamed that I would one day be writing about homelabbing like I am. Nor did I ever think I would be overseeing the rollout of a massive new campus-wide networking setup for a church, as I am now.

I started homelabbing with just a few old computer parts, and now I have enterprise-level experience with both hardware and software, and it’s changed my life for the better. So, if you’ve been putting off starting your homelab, pull that old computer out of storage, fire it up, and get started. It’s more fun than you think.