I clearly remember the first time I walked into someone’s house in the 1990s and saw a rear-projection TV. At the time, we had just upgraded to a 20-inch Panasonic TV, which was a big step up from the 11-inch TV we’d had up to that point.

So imagine how it felt to walk into someone’s house and see a TV with a screen at least 50 inches in size. And remember, this was a TV with a 4:3 aspect ratio, so that’s even more surface area than a modern widescreen with the same diagonal measurement. The big picture impressed me, even if my dad’s friends only wanted to watch boring sports on it, but the truth is that these big 1990s TVs were actually pretty awful.
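If you want to check the surface-area claim yourself, here is a quick back-of-the-envelope sketch. It assumes an exact 50-inch diagonal and ideal 4:3 and 16:9 rectangles, ignoring bezels and overscan; the `screen_area` helper is just an illustrative name, not anything official.

```python
import math

def screen_area(diagonal_in, aspect_w, aspect_h):
    """Return the screen area in square inches for a diagonal and aspect ratio."""
    # Scale the aspect-ratio triangle so its hypotenuse matches the diagonal.
    scale = diagonal_in / math.hypot(aspect_w, aspect_h)
    width = aspect_w * scale
    height = aspect_h * scale
    return width * height

# Assumed example: the same 50-inch diagonal in both shapes.
old_4x3 = screen_area(50, 4, 3)
modern_16x9 = screen_area(50, 16, 9)

print(f"50-inch 4:3 screen:  {old_4x3:.0f} sq in")
print(f"50-inch 16:9 screen: {modern_16x9:.0f} sq in")
print(f"The 4:3 screen is {old_4x3 / modern_16x9 - 1:.0%} larger")
```

Under those assumptions, the 4:3 screen works out to about 1,200 square inches against roughly 1,068 for the widescreen, or around 12 percent more picture for the same diagonal.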

Why rear-projection TVs felt like the future

It still looks the part

A 1990s rear-projection TV (RPTV), at least while turned off, wouldn’t look too out of place in a modern home, at least not from the front. I would not even see a flat-screen CRT TV until the early 2000s, so seeing these big flat TVs in the 1990s definitely felt like the future.

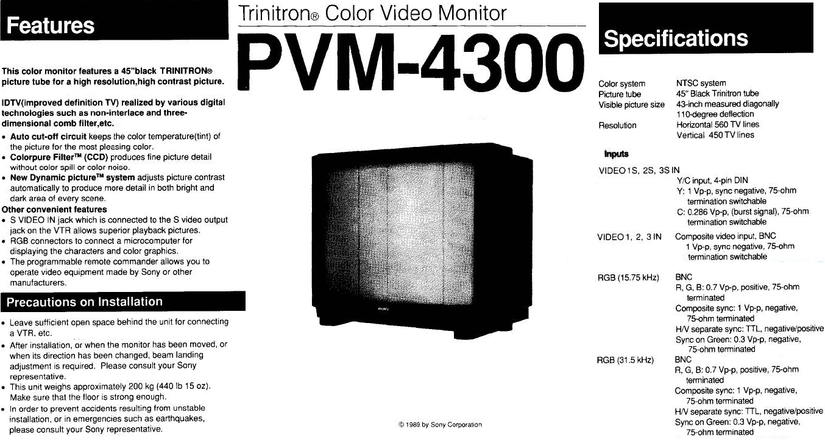

RPTVs were typically between 40 and 60 inches in size. To put that in perspective, the largest CRT TV ever made is the 43-inch Sony Trinitron PVM-4300. This TV weighs 440 pounds!

The biggest CRT TVs you’d typically see were about 30 inches, and the biggest CRT my parents ever owned clocked in at 29 inches.

Today, I am the proud owner of a 34-inch Trinitron, coming in at a back-breaking 165 pounds.

RPTVs allowed screen sizes to scale way beyond this limit without the enormous weight. Of course, virtually all RPTVs from the 1990s and earlier used CRT projection technology on the inside. In the early 2000s, we’d get models with DLP projection technology, and by the late 2000s, they were using laser projection, but none of that is relevant to the 1990s. This was the last hurrah of the CRT RPTV.

The illusion of quality people remember today

Rose-tinted TVs

If you look up forum posts or just ask people about their memories of these TVs, they can be surprisingly positive. I had the pleasure of watching a few LaserDisc movies on an RPTV back in the 1990s, and I remember it looking as good as DVD does today. These days, I actually own a LaserDisc player and a high-end CRT TV, and I know that LaserDisc looks significantly worse than DVD.

It’s all about reference points. LaserDisc had roughly twice the resolution of VHS, so obviously I was going to be blown away by it, having never seen anything sharper back then. But despite using CRT technology on the inside, RPTVs had worse picture quality than a regular direct-view CRT TV.

The picture quality was objectively terrible

Too many moving parts

Typically, a CRT RPTV would have three small CRTs on the inside, one for each primary color in the RGB system: red, green, and blue.

If these were not perfectly aligned, the three images would not line up on the screen, and the picture would show color fringing and look fuzzy. Moreover, brightness compared to a normal CRT TV was a huge issue. You had to watch these in a darkened room, and even then, I clearly remember the picture being unevenly lit.

These TVs needed frequent servicing to keep them aligned and operating as intended, but most of the people I knew who owned them clearly didn’t do this. As long as they got a nice big picture on game night, that was good enough.

If you actually cared about picture quality, then an RPTV was not the technology of choice; its only real advantage was size.

The real reason they disappeared almost overnight

The moment that flat panel technology was ready, RPTVs were dead in the water. While there were some wall-mountable DLP RPTVs toward the end of the 2000s, even heavy plasma TVs were far less bulky and heavy.

Flat panel technology also blew the doors off screen-size limits. While a 55-inch RPTV was considered a “big screen TV” back then, these days a 55-inch TV is just an entry-level, mundane size. Funnily enough, when I bought my first plasma TV at 51 inches, I remember thinking that I finally had a TV as big as those RPTVs from my youth. It just had a much, much better picture.