Linux has become a catch-all term for any free, open-source operating system that isn't Windows or macOS and that puts user control first. Although many of these systems share command-line interfaces and a philosophy of freedom, calling every open-source project a Linux distribution ignores important details of computing history and engineering. You need to understand these distinctions to appreciate the complexity and variety of open-source software. Just remember: not every alternative OS is Linux, even though Linux distros themselves can be very different from one another.

FreeBSD

It’s a complete package, not just a kernel

FreeBSD is a free, open-source operating system descended from the Berkeley Software Distribution (BSD), a version of Unix developed at UC Berkeley. It looks like Linux and shares many of the same tools, but it is a distinct system. It ships with a compatibility layer that runs many Linux programs without modification, even though its internal architecture is different.

The primary difference lies in how the system is developed. Linux is technically just a kernel which other groups bundle with various tools to create a complete OS. FreeBSD develops the kernel and the core tools as one cohesive unit. Since a single group manages everything, the components are designed to work together, which makes the system predictable.

FreeBSD is known for its networking performance and stability. Its networking stack is tuned for low latency and stays efficient under heavy load. It also includes ZFS (the Z File System) for managing storage and jails for lightweight, OS-level virtualization. Together, these features help FreeBSD machines run for long periods without crashing or needing a reboot.

You generally won’t find FreeBSD on a standard home desktop, although you can configure it that way. It is mostly used for heavy infrastructure. Netflix runs it on the servers that deliver its video streams, and Apple and Sony have built on its code for parts of macOS and the PlayStation's system software. Companies choose it because it is fast, scales well, and has a permissive license that is friendly to businesses.

Haiku

A fresh start for your desktop

Haiku is an open-source operating system designed for personal computers. It is not a Linux distribution or a Unix clone; instead, it was inspired by BeOS. It is also a good way to breathe new life into an old netbook. While Linux uses a monolithic kernel where drivers and core services run in one big, privileged space, Haiku's kernel is usually described as a modular hybrid design, and many system services, such as the app_server that draws the interface, run as separate user-space programs.

Moving services out of the kernel helps reliability. In a monolithic system like Linux, a single bad driver can crash the whole computer. Because many of Haiku's services run as separate, protected processes, a failure in one of them is less likely to take the whole system down.

Haiku does not carry the history of old Unix systems. While FreeBSD and Linux are rooted in decades-old technology, Haiku was engineered from scratch to be fast and responsive for multimedia tasks. It is a labor of love, similar to how ReactOS was built to be a free alternative to Windows.

While Linux handles everything from phones to clouds and FreeBSD runs massive servers, Haiku stays focused on the desktop. It is designed to give you a streamlined experience that feels quick and user-centric. It is a unique choice if you want something that does not follow the traditional Unix path.

TempleOS

One man’s unique digital vision

TempleOS is a lightweight operating system with biblical themes, built entirely by Terry A. Davis. It shares no history with Linux. Davis wrote the whole system himself, including a custom language called HolyC and its compiler. The system has no networking and lacks the preemptive multitasking found in modern operating systems.

The system is simple by design. It runs in a low-resolution, 16-color graphics mode. Programs must voluntarily give up control so other processes can run, whereas Windows and Linux use preemptive multitasking, in which the kernel can interrupt a program at any time.
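To make cooperative multitasking concrete, here is a minimal Python sketch that uses generators as stand-ins for programs. The `task` and `run_cooperatively` names are hypothetical, and this is not how TempleOS itself is implemented; it only shows that a cooperative scheduler can switch programs solely at the points where a program chooses to yield.

```python
from collections import deque


def task(name, steps, log):
    """Hypothetical program: does one unit of work, then yields control."""
    for i in range(steps):
        log.append(f"{name}:{i}")
        yield  # voluntarily hand control back to the scheduler


def run_cooperatively(tasks):
    """Round-robin scheduler that can only switch tasks when a task yields."""
    ready = deque(tasks)
    while ready:
        current = ready.popleft()
        try:
            next(current)          # run the task until its next yield
            ready.append(current)  # it yielded, so put it back in line
        except StopIteration:
            pass                   # the task finished; drop it


log = []
run_cooperatively([task("A", 2, log), task("B", 3, log)])
print(log)  # ['A:0', 'B:0', 'A:1', 'B:1', 'B:2']
```

If any task loops without ever reaching `yield`, `run_cooperatively` never regains control and every other task stalls. A preemptive kernel avoids that by interrupting programs on a timer.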

Privacy and simplicity are the primary goals. Davis intentionally excluded networking because he saw the internet as a risk. To keep the system fast and simple, it also skips memory protection, so every program has direct access to the hardware. This gives you total control over the machine, and you can type code directly into the command line to execute it immediately.

TempleOS is far more idiosyncratic than any general-purpose OS you are used to. It does not share any structure with Linux or Unix. It is an independent system that stands as a monument to one programmer’s specific vision.

ReactOS

Running Windows apps without the Windows price tag

ReactOS is a free project that aims to run Windows programs and drivers natively. It isn’t based on Linux and doesn’t use a Unix architecture; instead, the developers are reverse-engineering the Windows NT design from the ground up. This lets it run Windows software without a compatibility layer like Wine translating Windows API calls.

The project started in the late 90s and uses a clean-room method to figure out how Windows works. This means the team writes its own code to match how Windows behaves without actually seeing the private source code from Microsoft. The project even completed a full audit of its own code to make sure it wasn’t violating any copyrights.

While ReactOS works with the Wine project to share some libraries, they are built differently. Wine is just a layer that sits on top of Linux or macOS. ReactOS is the actual operating system itself, including the kernel and the parts that talk to your hardware. This lets it use real Windows hardware drivers, which Wine can’t do.

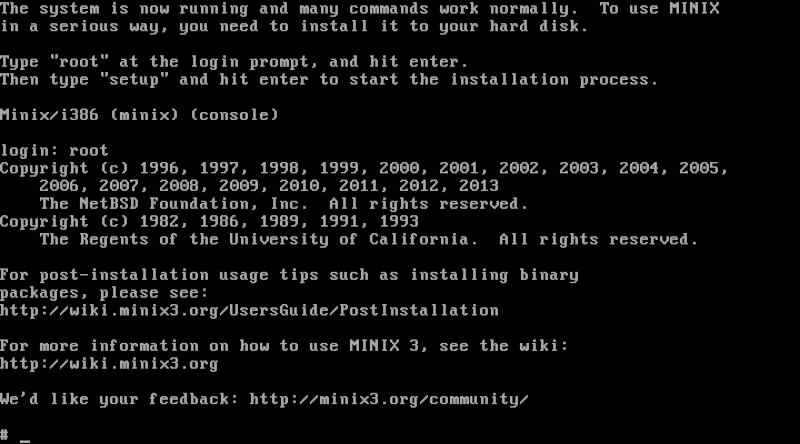

Minix

The secret system inside your computer

Andrew Tanenbaum created Minix in 1987 as a small, Unix-like operating system. He built it as a teaching tool to help students study a functional OS without needing to navigate the complex, restricted code found in professional Unix systems at the time. Linus Torvalds eventually used Minix as a reference and inspiration when he developed Linux.

Minix and Linux follow different design paths. Minix uses a microkernel, where the kernel handles only basic tasks and other components, like file systems and drivers, run in isolated spaces. In Minix 3, if a driver fails, a reincarnation server restarts it, which makes the system reliable and capable of self-healing.
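The restart-on-failure idea can be sketched in a few lines of Python. This is a toy, in-process simulation: a Python exception stands in for a driver crash, and the `CrashingDriver` and `ReincarnationServer` classes are hypothetical. In Minix 3, drivers run as separate user-space processes that the reincarnation server monitors, so the real mechanism is process-based rather than exception-based.

```python
class CrashingDriver:
    """Hypothetical disk driver whose first incarnation has a bug."""

    def __init__(self, incarnation):
        self.incarnation = incarnation

    def handle(self, request):
        # Simulated bug: only the first incarnation fails on this request.
        if self.incarnation == 1 and request == "read block 7":
            raise RuntimeError("driver fault")
        return f"{request}: ok (incarnation {self.incarnation})"


class ReincarnationServer:
    """Toy supervisor that replaces a crashed driver with a fresh instance."""

    def __init__(self, driver_cls, max_restarts=3):
        self.driver_cls = driver_cls
        self.max_restarts = max_restarts
        self.restarts = 0
        self.driver = driver_cls(incarnation=1)

    def request(self, req):
        while True:
            try:
                return self.driver.handle(req)
            except Exception:  # any exception models a driver crash here
                if self.restarts >= self.max_restarts:
                    raise  # give up: the fault keeps recurring
                self.restarts += 1
                # Start a new driver with clean state, then retry the request.
                self.driver = self.driver_cls(incarnation=self.restarts + 1)


server = ReincarnationServer(CrashingDriver)
results = [server.request(r) for r in ["read block 1", "read block 7", "read block 9"]]
for line in results:
    print(line)
print("restarts:", server.restarts)
```

The request that hits the bug is served by a second incarnation, and the caller never sees the failure. The `max_restarts` cap is a design choice in this sketch so that a driver that crashes every time is not restarted forever.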

You are probably using Minix without knowing it. Intel has built a modified version of Minix 3 into most of its platforms since around 2015. It runs inside the Intel Management Engine, a separate microcontroller in the chipset that operates independently of your main operating system.

It has its own drivers and networking so IT staff can manage computers remotely. Because it is embedded in so many Intel systems, Minix is arguably one of the most widely used operating systems in the world.

Linux doesn’t mean “other”

Linux often gets treated as a general term for any OS that isn't Windows, Android, or macOS. That habit is misleading because it rests on a misunderstanding of how computers work. When you recognize that these systems are more than Linux derivatives, you gain a better appreciation for an ecosystem where unique visions continue to shape what an OS can achieve. So don’t assume that every OS outside the well-known trio is Linux.
