Cybersecurity was once viewed as a technology issue that the IT department handled behind the scenes. This is no longer the case. In 2026, cyber risk is something every organisation must consider at leadership level. A cybersecurity incident can disrupt business operations, compromise sensitive data, erode customer loyalty and create regulatory problems that last far longer than the underlying technical fault.

As part of their AI transformation, many organisations are exploring modern AI tools to strengthen their security capabilities. Their main concern is not only the number of cyber attacks but the speed at which those attacks are carried out.

The challenge is even greater for security teams that depend heavily on cloud technology, digital supply chains and automated systems. As a result, teams are now dealing with kinds of cyber incidents they never anticipated.

The Expanding Cyber Threat Landscape

A few years ago, most firms focused mainly on monitoring network activity and deploying security software. The rationale was simple: build a robust digital perimeter, and attackers will stay outside it.

The world has changed a great deal since then. Employees work from home networks, partners integrate their systems with ours through APIs, and our business operations rely on a number of different cloud services. Each new connection adds to the attack surface that adversaries can target.

As a result of these changes, cyber threats are no longer purely a technology issue; they have become a business issue. Without preparation, an organisation risks making a serious incident worse through its own response.

At the same time, security teams are turning to new AI technology to detect cyber attacks more quickly. Machine learning can analyse enormous volumes of network data and surface patterns that might otherwise go unnoticed. However, this technology is just as useful to attackers as it is to defenders.

Cyber Risk as a Leadership and Business Issue

As digital systems become an integral part of day-to-day activities, cyber threats are no longer the concern of IT teams alone. Today, they are an organisational issue.

Senior management, compliance, legal and communications teams all play an important role in handling any serious cyber incident. If a company is not prepared to handle such situations, a lack of coordination can make the incident even worse.

Teams looking for structured advice on handling cyber threats can turn to expert guidance from Cyber Management Alliance.

The Role of Artificial Intelligence in Modern Cybersecurity

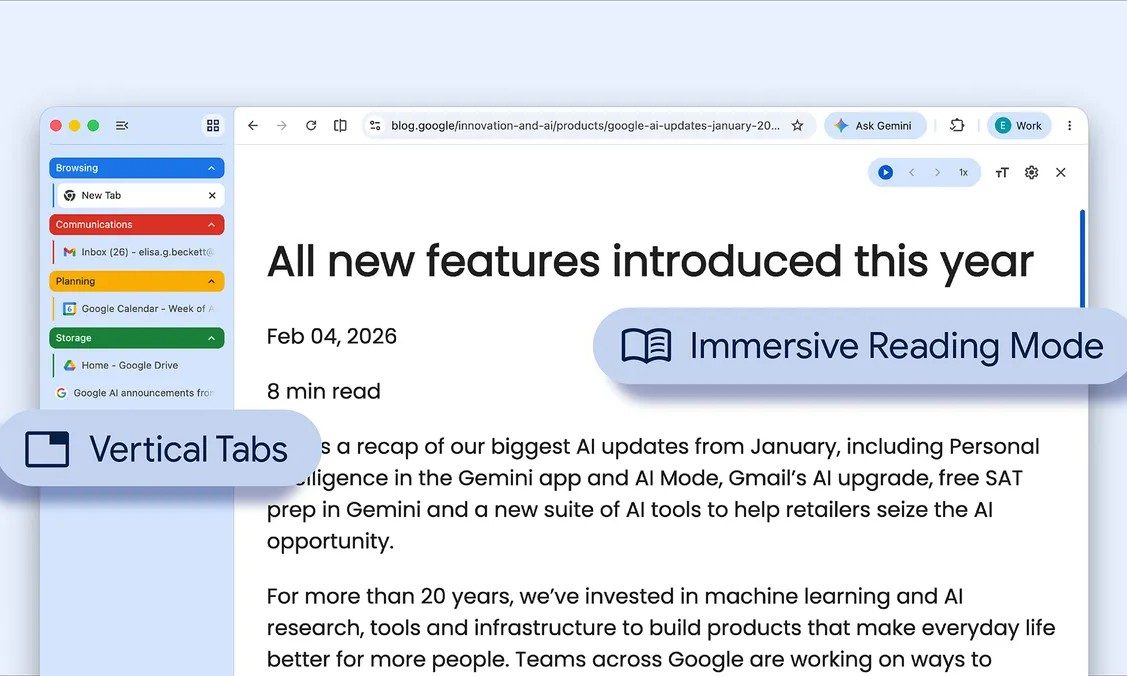

Many companies are using AI to strengthen their cybersecurity operations as new cyber threats continue to emerge. Security teams are also looking for ways to use AI to detect threats more effectively. For instance, AI-driven machine learning can examine millions of network data points, helping businesses identify values or patterns that may point to a potential threat.
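To make the idea of spotting a threat pattern in network data more concrete, here is a minimal sketch. It uses a simple statistical check (a z-score against a baseline of normal traffic) as a stand-in for the machine-learning models that real AI-driven tools use. The function name `flag_anomalies`, the threshold of 3 standard deviations and the traffic figures are all hypothetical and chosen only for illustration.

```python
from statistics import mean, stdev


def flag_anomalies(baseline, observed, threshold=3.0):
    """Return the indices of `observed` values that deviate sharply from `baseline`.

    Hypothetical helper: a simple z-score check used as a stand-in for the
    machine-learning models real security tools use. A value is flagged when
    it lies more than `threshold` standard deviations from the baseline mean.
    """
    if len(baseline) < 2:
        raise ValueError("baseline needs at least two data points")
    mu = mean(baseline)
    sigma = stdev(baseline)  # sample standard deviation of normal traffic
    flagged = []
    for i, value in enumerate(observed):
        if sigma == 0:
            # A perfectly flat baseline: any change at all is unusual.
            is_anomaly = value != mu
        else:
            is_anomaly = abs(value - mu) / sigma > threshold
        if is_anomaly:
            flagged.append(i)
    return flagged


# Illustrative (made-up) requests per minute to a login endpoint.
normal_traffic = [120, 118, 125, 130, 122, 119, 127, 124]
latest_traffic = [121, 126, 980, 123]
print(flag_anomalies(normal_traffic, latest_traffic))  # → [2]
```

The baseline is kept separate from the window being checked so that a large spike does not inflate the very standard deviation it is measured against. A flagged spike like the one at index 2 is only a signal that something might warrant a closer look. Production tools learn far richer patterns than a single threshold on one metric.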

AI becomes more useful as digital environments grow more complex. Platforms such as AIChief showcase several new types of AI tools that may help teams understand cybersecurity issues, improve threat detection and much more.

Unfortunately, while AI benefits businesses, it also creates new opportunities for cybercriminals. Automated scripts, adaptive AI techniques and intelligence-driven reconnaissance make it easier for attackers to launch fast, effective attacks against targeted organisations. This is one reason many businesses are rethinking their security strategies.

Why Traditional Cybersecurity Defences Are No Longer Enough

For a long time, traditional cybersecurity has concentrated on stopping attacks before they start. Firewalls, endpoint protection software and intrusion detection systems are among the measures commonly found in a company’s infrastructure.

However, relying on these measures alone can create a false sense of security. Modern cyber attacks often target people rather than machines, for example through phishing, social engineering or stolen credentials, which perimeter tools are not designed to stop.

The Importance of Cyber Incident Preparedness

In this digital era, cybersecurity planning is essential. Without a plan, teams responding to an incident often lose valuable time working out who should make decisions, how to communicate information internally and what steps to take.

These delays often increase the damage caused by a cyber incident and extend the time needed to recover. Businesses should therefore build an effective cyber incident response plan in advance to reduce future risk.

Preparing for Cyber Risk in 2026

As AI becomes more advanced, so do the risks. Every year, businesses incorporate new technologies, tools and systems into their operations, and each one introduces new risks. Organisations can therefore no longer treat cybersecurity as an issue that only the IT department handles behind the scenes.

In other words, managing risk today means involving the wider organisation when something goes wrong: leadership, operations and communications teams all have a part to play. The businesses best able to cope with incidents are those that have prepared these teams in advance.

As we move through 2026, enterprise resilience will be measured not by the quality of the tools organisations use to manage risk but by how well prepared they are to respond to it.