Like many people using Unix-like operating systems for the first time, I was introduced to the concept of the pipeline. Here’s how a single character on the command line changed everything.

What is the pipe (|) character?

Building programs from other programs

The pipeline character, or |, sends the output of one command to the input of another. Pipes were originally developed at Bell Labs in the early 1970s. I was introduced to them by a book about using Unix on what was then called “Mac OS X.” This simple character changed everything.

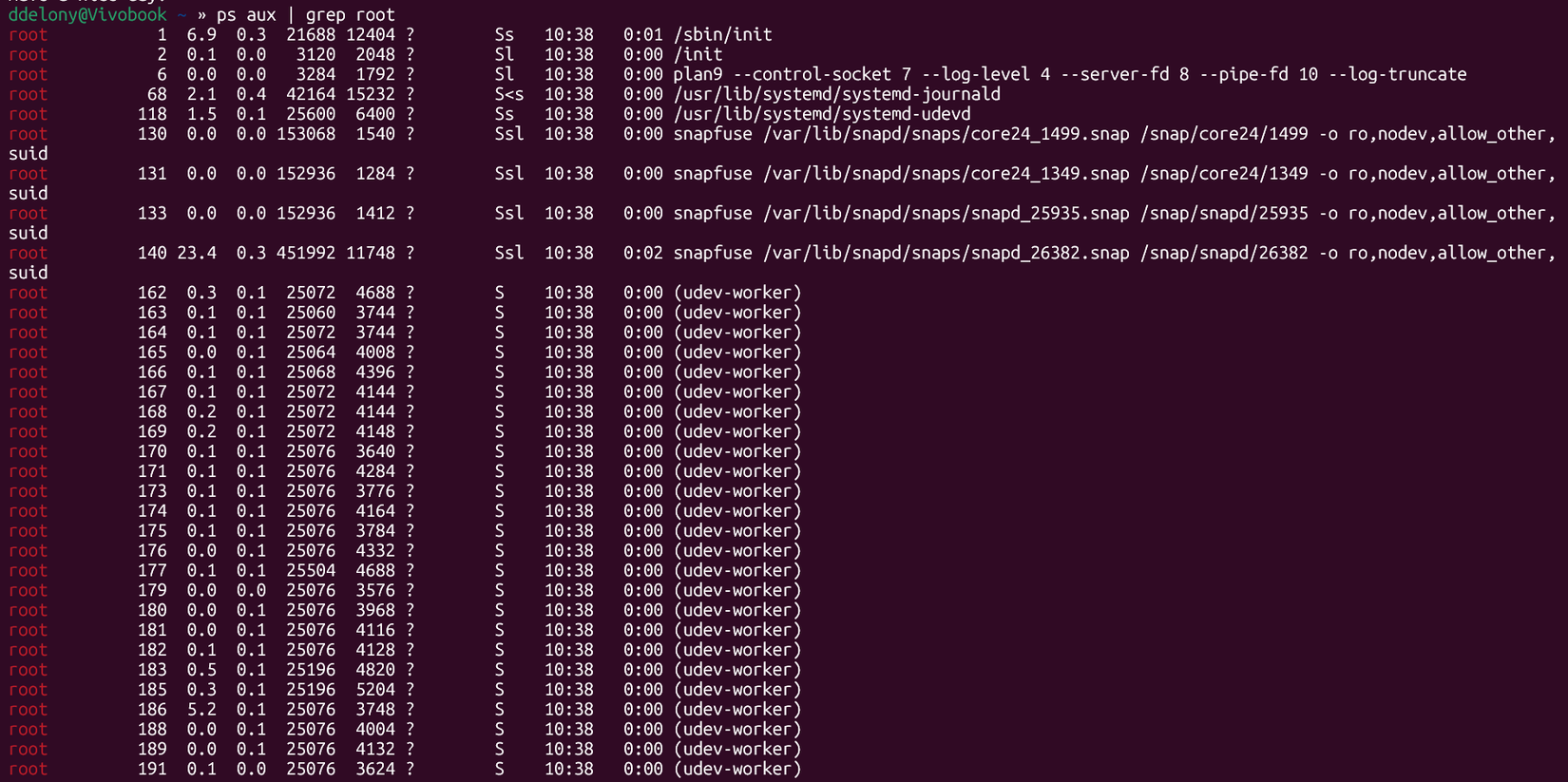

A good example of a pipeline is searching the output of a program for some string. Suppose I wanted to find out which processes on the system belonged to root. I would display all of the processes running on the system with the “ps aux” command and send that output to grep, searching for “root”:

ps aux | grep root
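To try the same pattern without depending on whatever happens to be running on your machine, here is a minimal sketch that uses a stand-in for ps aux. The sample_ps function and its three lines of process output are made up for illustration.

```shell
# Hypothetical stand-in for `ps aux`: three made-up lines of process output.
sample_ps() {
    printf '%s\n' \
        'root      1  /sbin/init' \
        'alice   842  zsh' \
        'root    913  sshd'
}

# Send the sample output through grep, keeping only lines that contain "root".
sample_ps | grep root

# Pipelines can keep going: count the matching lines with wc -l.
sample_ps | grep root | wc -l
```

The second pipeline shows that a pipe can feed another pipe, which is where the approach starts to pay off.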

The concept was invented by Doug McIlroy. At the time, Unix was actually considered user-friendly because you typed commands into a terminal instead of punching a deck of cards, handing it to an operator in the computer center, and waiting to receive your results (and your inevitable errors).

The latter was called “batch mode,” and it was how mainframe computers had been used since their inception.

Pipelines make use of a concept in Unix and modern Linux systems called “standard input/output.” By default, standard input is the keyboard and standard output is the terminal. There’s also a “standard error” for error messages, which goes to the terminal by default as well. You can “redirect” any of these streams. I’d seen this concept when trying to follow along with a C++ book, but it made a lot more sense to me when I started using the Linux command line.
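As a rough illustration of the three streams, the sketch below defines a small function that writes one line to standard output and one to standard error. The name report and its messages are invented for this example.

```shell
# Hypothetical helper: one line to standard output, one to standard error.
report() {
    echo "result: ok"
    echo "warning: something went wrong" >&2
}

# Only standard output travels through the pipe, so tr uppercases just the
# first line. The warning still goes straight to the terminal, unchanged.
report | tr '[:lower:]' '[:upper:]'

# 2>/dev/null discards standard error, leaving only standard output.
report 2>/dev/null

# 2>&1 merges standard error into standard output, so both lines are counted.
report 2>&1 | wc -l
```

Keeping errors on a separate stream is what lets a pipeline pass clean data along while problems still show up on screen.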

You can save the output to a file for later with the > (greater than) symbol:

ps aux > allprocs

Or you can feed the contents of a file to another program with the < (less than) symbol:

cat < some_text.py
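Here is a small sketch that puts both directions of redirection together. It works in a temporary directory so nothing real gets overwritten. The file names fruit.txt and sorted.txt are placeholders chosen for the example.

```shell
# Work in a throwaway directory so no real files are touched.
workdir=$(mktemp -d)

# > writes standard output to a file, replacing any previous contents.
printf 'banana\napple\ncherry\n' > "$workdir/fruit.txt"

# >> appends to the file instead of overwriting it.
echo 'date' >> "$workdir/fruit.txt"

# < feeds the file to a program's standard input.
sort < "$workdir/fruit.txt"

# Both at once: read from one file and write the result to another.
sort < "$workdir/fruit.txt" > "$workdir/sorted.txt"
```

Note that > silently replaces an existing file, so it is worth double-checking the target name before pressing Enter.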

The vibe coding of the ’70s?

This approach might be simple, but it was a kind of manifesto. Instead of building monolithic programs, the ideal was now to build programs out of smaller components. This approach was called “software tools.” It’s similar to how you can use the same hammers, nails, saws, and drills to create different woodworking projects.

One effect of designing programs that can be used as filters is that traditional Unix utilities don’t produce a lot of output. They tend to give only the minimum necessary, unless you use command-line switches to ask for extra output.

You can see a master in action. Brian Kernighan, who was a Bell Labs researcher at the time and the “K” in “K&R”, or The C Programming Language, co-authored with Dennis Ritchie, whips up a spelling checker in a 1982 Bell Labs film promoting Unix as a programming platform. The whole thing is worth watching, but the segment of interest begins around the 5-minute mark.

Although the names of the utilities seem different from standard modern ones, you can see how powerful this approach was. Creating a small program right from the command line must have seemed like magic. Unix shell programming might have been like the vibe coding of the late ’70s and early ’80s. Both movements are touted as a method for professional programmers to manage complexity.

With vibe coding or the use of agents, the idea is to get an AI to handle the coding. The software tools method was an attempt to make software easier to change by breaking big programs into smaller chunks. This was a key component of the “Unix Philosophy” of building a program that does one thing well instead of a large program that tries to be all things to all people.
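To give a feel for the kind of spelling checker described above, here is a rough sketch built from modern utilities. It is not the exact pipeline from the film. The spellcheck function name and the five-word dictionary are assumptions made for this example, where a real system would use a full word list.

```shell
# comm needs both inputs sorted the same way, so pin the locale.
export LC_ALL=C

# A tiny made-up word list standing in for a real dictionary file.
dict=$(mktemp)
printf '%s\n' a is sentence short this | sort > "$dict"

# Hypothetical helper: print the words on standard input that are not in $dict.
spellcheck() {
    tr -cs 'A-Za-z' '\n' |   # put each word on its own line
        tr 'A-Z' 'a-z' |     # lowercase everything
        sort -u |            # sort and drop duplicates
        comm -23 - "$dict"   # keep lines found only in the input
}

echo 'This is a shrot sentence. This is short.' | spellcheck
# prints: shrot
```

Each stage does one small job, and none of them knows anything about spelling. The "program" exists only in how they are connected.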

Practical examples of pipelining

Changing case with ease

Many shell pipelines process plain text. This includes searching for text, such as with the grep command I demonstrated earlier, but also making modifications to text streams.

One good example would be changing the case of text. In researching this piece, I found that there weren’t any built-in commands for changing uppercase to lowercase and vice versa. Fortunately, it was easy to create my own pipelines.

For example, if I wanted to change an input to lowercase, I would use the tr command:

tr '[:upper:]' '[:lower:]'

This will change every uppercase character to a lowercase one. I’m using the GNU coreutils version of tr. By default, tr reads from standard input, so I can just type this at the shell. I can then type text, and tr will lowercase it.

This can be useful, but I don’t want to type all that out every time. I have options for saving this for later. The simplest option would be to save a shell alias in my .zshrc file, since zsh is my shell of choice, so that it would be available every time. Since the command is so small, and being able to change case is useful to me as a writer, that’s what I’ve decided to do.
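To see the same command work without typing at the keyboard, you can hand it input through a pipe or a redirect. This is a minimal sketch, and the sample strings and temporary file are made up for the demonstration.

```shell
# Pipe a fixed string into tr instead of typing at the keyboard.
echo 'Hello, World!' | tr '[:upper:]' '[:lower:]'
# prints: hello, world!

# Or redirect a file into it (a temporary file created just for this demo).
demo=$(mktemp)
echo 'SHOUTING IN A FILE' > "$demo"
tr '[:upper:]' '[:lower:]' < "$demo"
# prints: shouting in a file
```

Note that tr does not accept a file name as an argument, so a pipe or the < redirect is the way to get file contents into it.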

alias lc="tr '[:upper:]' '[:lower:]'" # Set standard input to lowercase

I could also define a shell function:

lc () {
    tr '[:upper:]' '[:lower:]'
}

I could also reverse the change and convert text to uppercase instead:

alias uc="tr '[:lower:]' '[:upper:]'" # Uppercase standard input

I can also pipe output from other programs into these new commands I’ve just created. I can convert the text from the fortune program into uppercase and then make it colorful with lolcat:

fortune | uc | lolcat

In this case, it happened to be a passage from the Tao Te Ching.
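If fortune and lolcat aren’t installed, the same idea can be tried with echo. This sketch defines both helpers as shell functions rather than aliases, because bash does not expand aliases in scripts by default. The sample text is arbitrary.

```shell
# Function versions of the lc and uc helpers.
lc() { tr '[:upper:]' '[:lower:]'; }
uc() { tr '[:lower:]' '[:upper:]'; }

# Any command's output can be piped into them.
echo 'The quick brown fox' | uc
echo 'The quick brown fox' | lc

# They chain with other filters too: uppercase two lines, then sort in reverse.
printf 'one\ntwo\n' | uc | sort -r
```

Because both helpers read standard input and write standard output, they slot into a pipeline exactly like the utilities that ship with the system.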

The power of pipelining

These examples should show why Linux has such a hold on the programming community and among hobbyist developers like myself. It’s because you can get productive with simple pipelines quickly.
