Downtime is the enemy of any homelab, and mine especially. So I finally decided to do something about it and made my homelab highly available. I could only do that because I already knew the technique existed, so if you’ve never heard of high availability, here’s why this trick is worth knowing about.

There’s always maintenance to do in a homelab

And I always push maintenance off to have as little downtime as possible

When I first started homelabbing, I was performing maintenance on my server quite often. From RAM changes to fixing operating system issues, swapping storage, or installing new hardware, my server was down more than it was online.

Because my server was down so much early on, I was hesitant to host critical services on my own hardware, so I put that off until things started to settle. Eventually, I got into a groove with my servers, and maintenance became something that didn’t happen nearly as often.

The problem is, there’s always still something to maintain in a homelab. It could be relocating servers, putting in a new network card, adding more RAM, installing a graphics card, or simply changing IP addresses on your network. Heck, even operating system or security updates on the server count as maintenance, and I almost always push my maintenance off for as long as I can.

Why do I push my maintenance off? Because it still means downtime, and both I and everyone else in my household have come to rely on the server staying up. So I have to schedule maintenance for times when nobody is using the server, and that’s just a hassle. That’s why I finally deployed a high availability cluster in my homelab to solve the problem.

High availability makes maintenance seamless

Services automatically move to the next available node

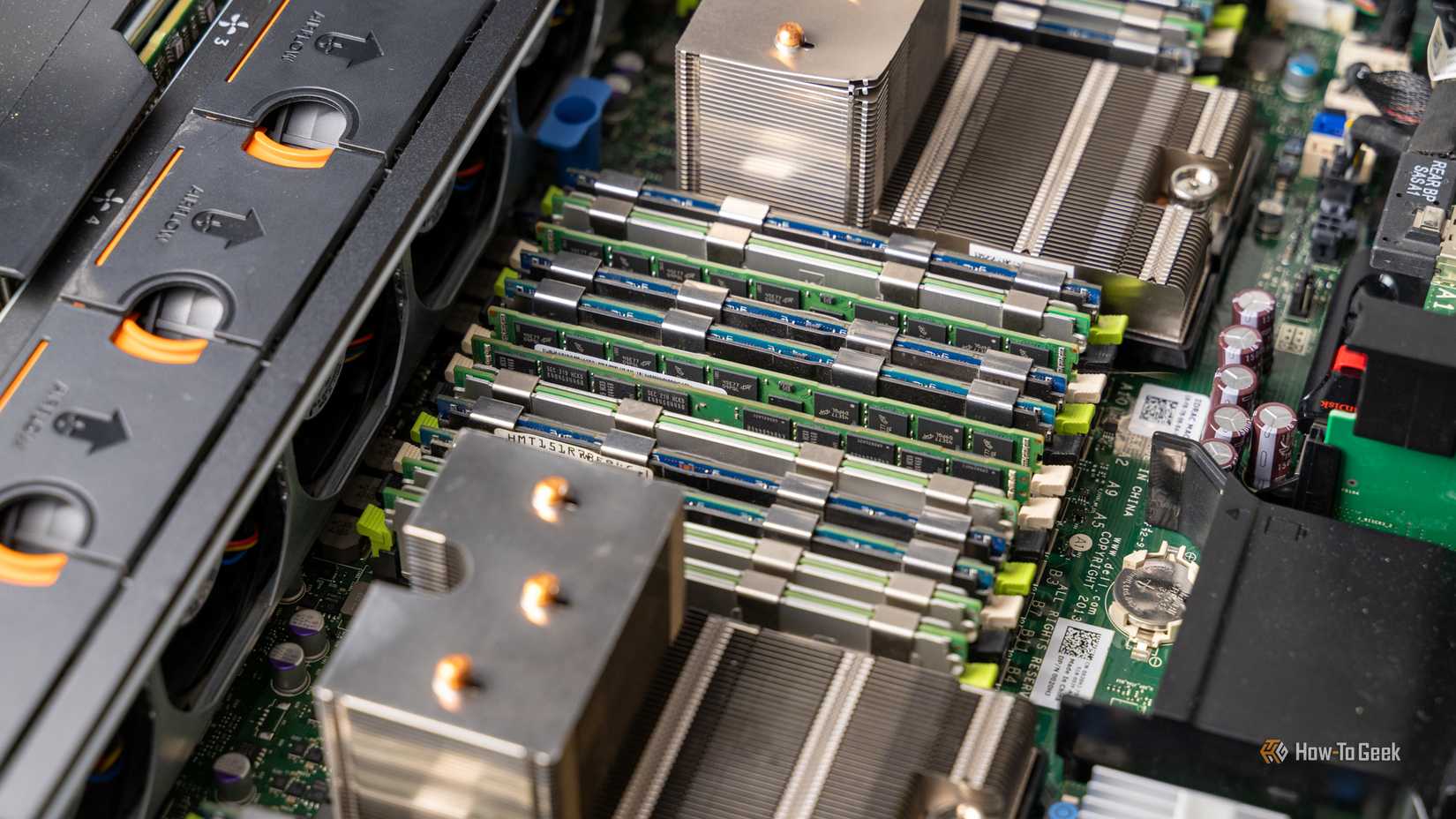

If you’ve never heard of high availability, it’s the one trick that every homelabber should at least know about. Essentially, you need three or more servers (an odd number works best) joined together in a cluster. These servers also need one central storage location that they all share, and a NAS works perfectly for that.
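To see why an odd number of nodes works best, here is a quick back-of-the-envelope sketch in Python. It assumes every node gets exactly one vote and that the cluster needs a strict majority (more than half the votes) to keep running, which is the common default. The function names are just for illustration.

```python
def quorum_size(nodes: int) -> int:
    """Votes needed for a strict majority, assuming one vote per node."""
    return nodes // 2 + 1


def failures_tolerated(nodes: int) -> int:
    """How many nodes can go offline while the rest still hold a majority."""
    return nodes - quorum_size(nodes)


for n in range(2, 7):
    print(f"{n} nodes: quorum = {quorum_size(n)}, can lose {failures_tolerated(n)}")
```

Running it shows that three nodes can lose one and still have quorum. Adding a fourth node raises the majority to three without letting you lose any more nodes, and you only gain extra failure tolerance at five.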

It’s best to distribute the services you self-host across all the nodes so that no single node is running everything, since that would defeat the purpose of high availability. Whenever one node goes offline, the services that were running on it simply get spun up on another node in the cluster.
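As a rough illustration of spreading services out, the sketch below places each service on whichever node is currently running the fewest. The node names are hypothetical. Real cluster managers weigh things like CPU and memory use rather than a simple count, so treat this as a model of the idea and not of any particular tool.

```python
def spread_services(services: list[str], nodes: list[str]) -> dict[str, list[str]]:
    """Assign each service to the node currently running the fewest services."""
    placement: dict[str, list[str]] = {node: [] for node in nodes}
    for service in services:
        # min() keeps the first node on ties, so equally loaded nodes fill in order.
        target = min(nodes, key=lambda node: len(placement[node]))
        placement[target].append(service)
    return placement


# Hypothetical three-node cluster running a few services mentioned in this article.
layout = spread_services(
    ["pihole", "audiobookshelf", "freshrss", "minecraft", "website"],
    ["node1", "node2", "node3"],
)
for node, assigned in layout.items():
    print(node, assigned)
```

With five services and three nodes, this leaves two services each on node1 and node2 and one on node3, so losing any single node only takes out part of the lineup.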

This relies on a mechanism called quorum. Each node in the cluster holds a vote, and a group of nodes can only keep managing the cluster while it holds a majority of those votes. When a node goes offline, the remaining nodes still form that majority (with three nodes, losing one still leaves two of three votes), so they take over. Each virtual machine or container that is no longer reachable because its host is offline gets started on one of the surviving nodes.
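Here is a simplified model of that failover step. It assumes one vote per node and a simple "fewest services wins" placement rule, and the node and service names are hypothetical. Real HA stacks also make sure the failed node is truly stopped (fencing) before restarting its guests elsewhere, which this sketch leaves out.

```python
def recover(placement: dict[str, list[str]], failed: str) -> dict[str, list[str]]:
    """Move the failed node's services onto survivors, but only if survivors hold quorum."""
    if failed not in placement:
        raise KeyError(f"unknown node: {failed}")
    total = len(placement)
    survivors = [node for node in placement if node != failed]
    # One vote per node: survivors need a strict majority of the original cluster.
    if len(survivors) <= total // 2:
        raise RuntimeError("surviving nodes lost quorum; not restarting anything")
    new_placement = {node: list(placement[node]) for node in survivors}
    for service in placement[failed]:
        # Restart each orphaned service on the least-loaded surviving node.
        target = min(survivors, key=lambda node: len(new_placement[node]))
        new_placement[target].append(service)
    return new_placement


# Hypothetical cluster where node1 goes offline for maintenance.
before = {
    "node1": ["pihole", "minecraft"],
    "node2": ["audiobookshelf", "website"],
    "node3": ["freshrss"],
}
print(recover(before, "node1"))
```

Taking node1 offline moves Pi-hole to node3 and the Minecraft server to node2. Calling it on a two-node cluster raises an error instead, because one node out of two is not a majority.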

Eventually, when the node you’re doing maintenance on comes back online, the virtual machines or containers that were on it are migrated back and nothing misses a beat.

Depending on your hardware (and what operating systems or services you’re running), downtime here can be anywhere from a few seconds to a minute, which is basically however long it takes the virtual machine or container to boot up.

High availability doesn’t really kick in for simple things like a VM reboot, but it’s perfect for when you need to swap hardware or if you’re moving the location of your homelab from one area to another.

Your homelab acts as one big server, but there’s one big catch

Not every service should be made highly available

With high availability, your homelab essentially acts as one big system that just passes virtual machines or containers back and forth. However, it’s not without its faults.

I run Plex in my homelab, and that’s one service I won’t make highly available. While it would be the perfect candidate, it just doesn’t work well in an HA cluster.

Plex relies heavily on metadata and hardware transcoding. As such, it’s constantly writing or rewriting files, and it needs dedicated hardware passed through to it.

While it’s possible, it can be quite difficult to set up PCIe passthrough of a graphics card (either integrated graphics or a dedicated card) to a virtual machine and have matching hardware available on another node.

Let’s say you have three old office PCs, each with slightly different specs and processor generations. The passthrough hardware IDs of the integrated graphics on those PCs are going to differ, which makes it hard to keep the Plex VM and its configuration highly available.

Plus, the Plex Docker configuration can sometimes require hardware UUIDs to be passed through from the host, or even configured in the Plex settings UI, before it works. Both of these things make high availability setups pretty difficult to configure.
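To make the passthrough problem concrete, the sketch below models each node’s integrated GPU as a hypothetical identifier string and checks which nodes a VM could run on. The values are placeholders, not real PCI IDs. A VM tied to one GPU ID can only land on nodes with that exact device, while a VM with no passthrough can go anywhere (assuming every node can reach the shared storage).

```python
from typing import Optional

# Hypothetical inventory: each node's integrated GPU, written as a placeholder
# identifier. On mismatched office PCs these differ from node to node.
NODE_GPUS = {
    "node1": "igpu-a",
    "node2": "igpu-b",
    "node3": "igpu-c",
}


def eligible_nodes(required_gpu: Optional[str], node_gpus: dict[str, str]) -> list[str]:
    """Return the nodes a VM could run on, given the GPU its config passes through."""
    if required_gpu is None:
        # No passthrough: assume any node with access to the shared storage will do.
        return list(node_gpus)
    # Passthrough: only nodes that have exactly the device the VM expects.
    return [node for node, gpu in node_gpus.items() if gpu == required_gpu]


print("Plex VM (pinned to node1's iGPU):", eligible_nodes("igpu-a", NODE_GPUS))
print("Pi-hole VM (no passthrough):", eligible_nodes(None, NODE_GPUS))
```

With three mismatched PCs, a Plex VM pinned to node1’s GPU has nowhere to fail over to, while something like Pi-hole can run on any node.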

However, high availability is perfect for services that don’t require hardware passthrough. Think Audiobookshelf, Pi-hole, FreshRSS, Minecraft servers, websites, and more—basically anything that doesn’t rely on dedicated hardware being passed through to a VM, and then to a container.

ACEMAGIC M5 mini PC

- Brand: ACEMAGIC
- CPU: i7-14650HX

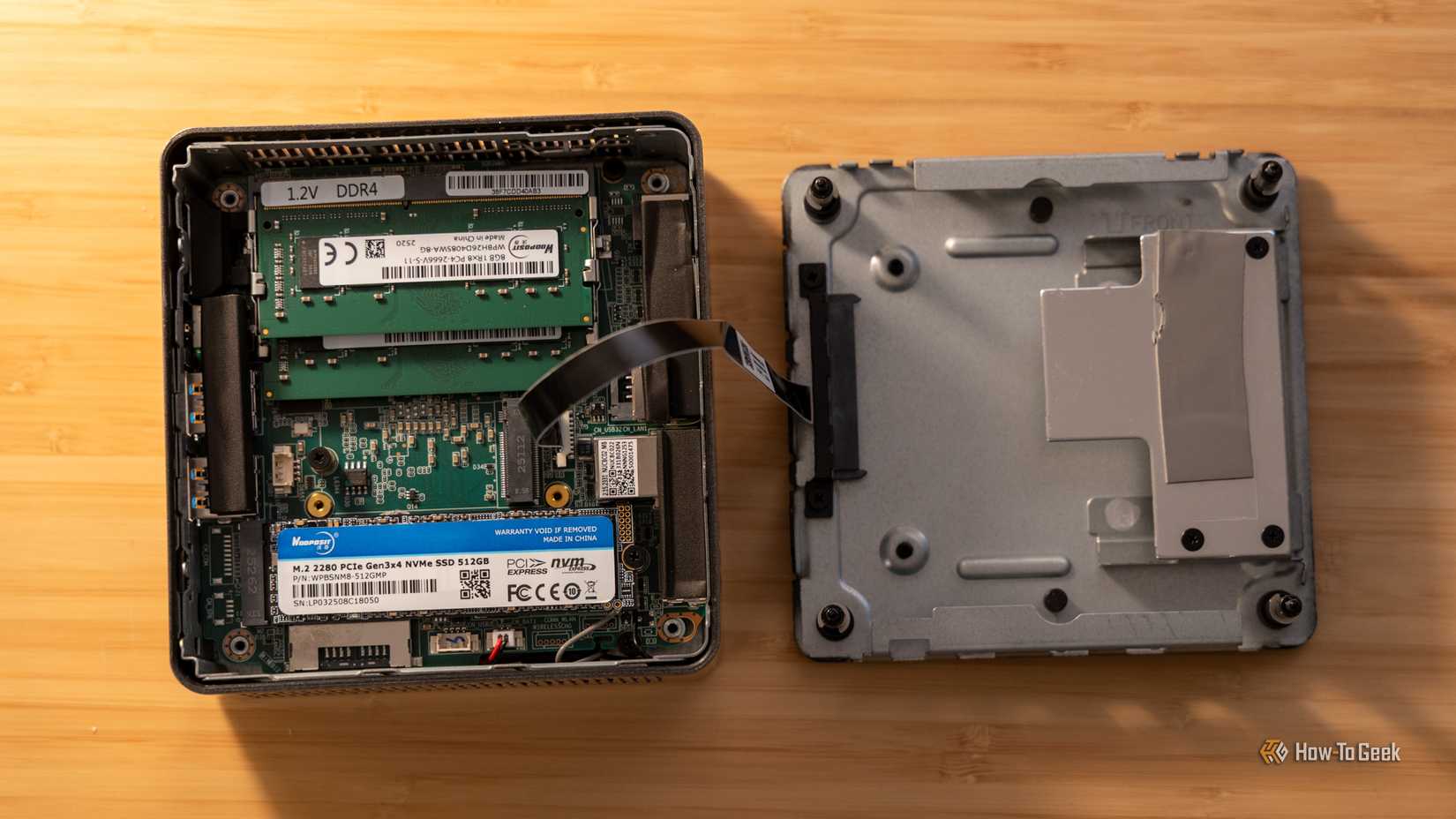

The ACEMAGIC M5 mini PC is perfect for setups that need a high-performance desktop with a small footprint. It boasts an Intel Core i7-14650HX 16-core, 24-thread processor and 32GB of DDR4 RAM (upgradable to 64GB). The preinstalled 1TB NVMe drive can be swapped out for a larger one, and there’s a second NVMe slot for extra storage if needed.

KAMRUI Hyper H2 mini PC

- Brand: KAMRUI
- CPU: i5-14450HX

The KAMRUI Hyper H2 Mini PC features an Intel Core i5-14450HX 10-core 16-thread processor and 16GB of DDR4 RAM. The included 512GB NVMe SSD comes with Windows 11 pre-installed so the system is ready to go out of the box.

GEEKOM A5 mini PC

- Brand: GEEKOM
- CPU: AMD Ryzen 5 7430U
- Graphics: AMD Vega 7
- Memory: 16GB DDR4 SO-DIMM
- Storage: 512GB NVMe (expandable)

The GEEKOM A5 mini PC packs 16GB of user-replaceable RAM, a user-swappable NVMe SSD, and two more storage slots, giving you plenty of upgradability in this compact system. Its Ryzen 5 processor has plenty of power for general tasks, and it even handles lightweight gaming and CAD work well.

High availability isn’t for everyone, but it’s worth knowing about

Running a highly available setup in a homelab isn’t for the faint of heart. You really need to have at least three similar computers that you plan to keep on 24/7/365 for it to work well. This is a bit out of reach for those just getting started in homelabbing, and that’s okay.

I ran my homelab without high availability for over half a decade before I finally had the hardware to get a three-node cluster online. Even then, I didn’t set up every virtual machine for high availability, only the ones I really couldn’t stand going down.

You might not deploy high availability in your homelab right now, but you definitely should know about it and at least have it in your back pocket for when you do have a setup capable of handling it.